Calculate Gradient Numerically

The gradient can be thought of as a collection of vectors pointing in the direction of increasing values of f. For a function of two variables, f(x, y), the gradient is

$$G(x, y) = \nabla f = \frac{\partial f}{\partial x}\,\hat{i} + \frac{\partial f}{\partial y}\,\hat{j},$$

where $\hat{i}$ and $\hat{j}$ are the unit vectors in x and y.

The exact gradient comes from calculus. When only function values are available, you could approximate the partial derivatives directly and assemble them into the gradient vector. A much better approach also exists: numerically more stable, with no perturbation hyperparameter to choose, and accurate up to machine precision. Some numerical packages also offer a built-in gradient function that computes numerical gradients.

A related grid problem: the gradient of a function t is known on a Cartesian grid, $\vec{g}(x_i, y_j, z_k) = \nabla t(x_i, y_j, z_k)$, and t itself is known for the center pillar.

The steepest (or gradient) descent method is an iterative procedure for approximating extreme values. It is based on the fact that the gradient gives the direction of steepest ascent, so a small step against it decreases f.
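As a rough illustration of approximating the partial derivatives directly and assembling them into a gradient vector, here is a minimal Python sketch using central differences, followed by a plain steepest-descent loop built on it. The names `numerical_gradient`, `steepest_descent`, and `bowl`, the perturbation `h`, and the fixed `step` size are all assumptions made for this example, not something taken from the text above.

```python
from typing import Callable, List, Sequence

Func = Callable[[Sequence[float]], float]


def numerical_gradient(f: Func, point: Sequence[float], h: float = 1e-5) -> List[float]:
    """Approximate the gradient of f at `point` with central differences.

    `h` is the perturbation size (an assumed default; it is a tuning choice).
    """
    x = [float(v) for v in point]
    grad = []
    for i in range(len(x)):
        forward = list(x)
        backward = list(x)
        forward[i] += h
        backward[i] -= h
        # Central difference: (f(x + h e_i) - f(x - h e_i)) / (2h)
        grad.append((f(forward) - f(backward)) / (2.0 * h))
    return grad


def steepest_descent(f: Func, start: Sequence[float],
                     step: float = 0.1, iterations: int = 200) -> List[float]:
    """Repeatedly take a small fixed-size step against the numerical gradient."""
    x = [float(v) for v in start]
    for _ in range(iterations):
        g = numerical_gradient(f, x)
        x = [xi - step * gi for xi, gi in zip(x, g)]
    return x


def bowl(p: Sequence[float]) -> float:
    """Hypothetical test function with its minimum at (1, -0.5)."""
    x, y = p
    return (x - 1.0) ** 2 + 2.0 * (y + 0.5) ** 2


if __name__ == "__main__":
    print(numerical_gradient(bowl, [0.0, 0.0]))  # close to the exact gradient (-2, 2)
    print(steepest_descent(bowl, [0.0, 0.0]))    # approaches the minimum at (1, -0.5)
```

The perturbation `h` is exactly the hyperparameter the text warns about: too large and the truncation error grows, too small and floating-point cancellation dominates. The fixed step size is likewise only a convenience for this quadratic example.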

From www.showme.com

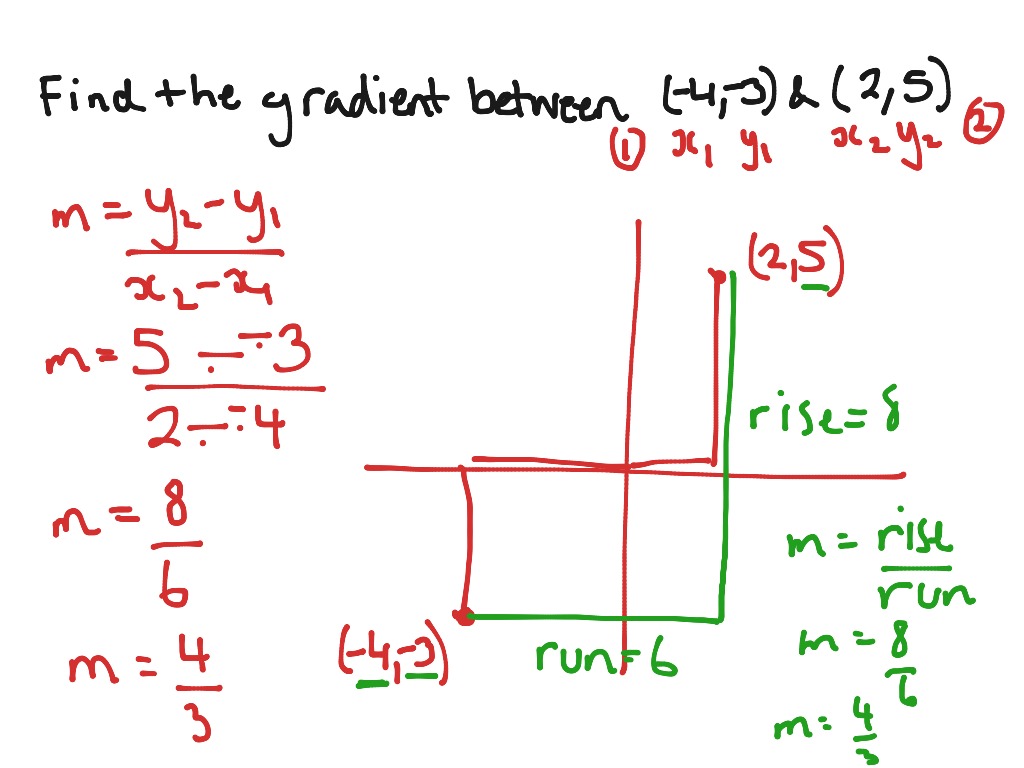

Finding the gradient between two points Math ShowMe

From www.youtube.com

Form 5 Maths 6.1 Gradient of a graph Part 2 (Distancetime graph

From www.youtube.com

CALCULATING GRADIENT AND INTERCEPTS YouTube

From www.youtube.com

Calculating gradient between two given points YouTube

From www.chegg.com

Solved Calculate the gradient of the following scalar

From www.youtube.com

How To Calculate Gradient YouTube

From www.youtube.com

Numerical Optimization Gradient Descent YouTube

From www.bbc.co.uk

How to find the gradient of a straight line in maths BBC Bitesize

From www.bbc.co.uk

How to find the gradient of a straight line in maths BBC Bitesize

From www.youtube.com

Calculating gradient Physics help YouTube

From www.showme.com

Finding the gradient between two points Math ShowMe

From www.youtube.com

lines gradient and equation YouTube

From www.youtube.com

CALCULATE THE GRADIENT OF A FUNCTION AT A POINT (Ep.2 of 5)

From www.youtube.com

How to calculate the gradient of a linear relation using rise over run

From slideplayer.com

Calculating gradients ppt download

From www.youtube.com

Coordinate Geometry Midpoint, Length and Gradient with Formulas YouTube

From narodnatribuna.info

Straight Line Graph Demonstration How To Find Gradients Of

From www.firstinarchitecture.co.uk

How to calculate slopes and gradients

From www.youtube.com

Calculating a gradient YouTube

From www.researchgate.net

(Color online) Numerically calculated intensity gradient in the

From www.youtube.com

Physics class 11, 12 Gradient, Numerical 1(engineering Physics) YouTube

From www.slideserve.com

PPT Distance, time and speed PowerPoint Presentation, free download

From www.youtube.com

NCEA Maths L2 Calculus Gradient at a Point YouTube

From www.youtube.com

Gradient Vector Gradient of a Function Multivariable Calculus

From engineeringdiscoveries.com

How To Calculate Slopes And Gradients Engineering Discoveries

From www.bbc.co.uk

How to find the gradient of a straight line in maths BBC Bitesize

From www.baeldung.com

What Is Gradient Orientation and Gradient Magnitude? Baeldung on

From www.youtube.com

Numerical Techniques 1 How to Calculate the Gradient of a Curve at a

From slideplayer.com

Calculating gradients ppt download

From bossmaths.com

A15a Calculating or estimating gradients of graphs

From www.youtube.com

Calculating Gradient from 2 Coordinates Example 2 YouTube

From www.bbc.co.uk

How to find the gradient of a straight line in maths BBC Bitesize

From www.youtube.com

How To Calculate The Gradient of a Straight Line YouTube

From www.slideshare.net

Gradient & area under a graph

From www.youtube.com

Calculate the Gradient of a Line YouTube

From www.youtube.com

Calculating the Gradient of a Line WORKED EXAMPLE GCSE Physics