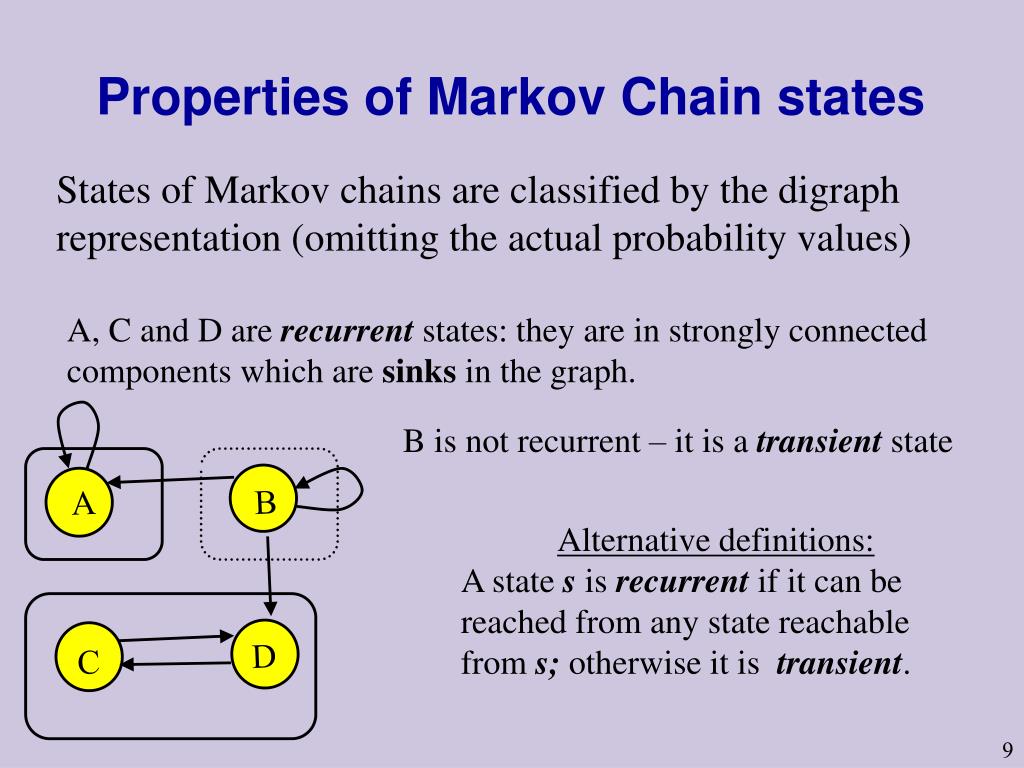

What Is A Transient State In Markov Chain

A Markov chain describes a system whose state changes over time. The changes are not completely predictable, but rather are governed by probability. The state space of a Markov chain, S, is the set of values that each X_t can take; for example, S = {1,2,3,4,5,6,7}. Let S have size N (possibly infinite).

States come in two kinds: “recurrent” states and “transient” states. A state is known as recurrent or transient depending upon whether or not the Markov chain will eventually return to it. A state i is recurrent if a chain that starts at i returns to i with probability 1. Otherwise, the state is transient, which means that if the chain starts out at i, there is a positive probability that it never returns to i. In general, a Markov chain might consist of several transient classes as well as several recurrent classes. Transience and recurrence issues are central to the study of Markov chains and help describe the Markov chain's overall structure.

Two practical skills go with these definitions: writing transition matrices for Markov chain problems, and using the transition matrix and the initial state vector to find the state vector at a later step.
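The definitions above can be made concrete with a small sketch. It assumes a finite chain, states numbered from 0, and a row-vector convention (the next state vector is the current one multiplied by the transition matrix); the function names and the three-state matrix are hypothetical, chosen only for illustration. For a finite chain, a state is recurrent exactly when every state reachable from it can reach it back, and that is the test `classify` applies.

```python
from collections import deque


def state_vector(v0, P, steps):
    """Return the state vector after `steps` transitions (row-vector convention)."""
    n = len(P)
    v = list(v0)
    for _ in range(steps):
        # New probability of state j is the sum over i of v[i] * P[i][j].
        v = [sum(v[i] * P[i][j] for i in range(n)) for j in range(n)]
    return v


def reachable(P, start):
    """Set of states reachable from `start` through positive-probability moves."""
    seen = {start}
    queue = deque([start])
    while queue:
        i = queue.popleft()
        for j, p in enumerate(P[i]):
            if p > 0 and j not in seen:
                seen.add(j)
                queue.append(j)
    return seen


def classify(P):
    """Label each state of a FINITE chain as 'recurrent' or 'transient'."""
    n = len(P)
    reach = [reachable(P, i) for i in range(n)]
    labels = []
    for i in range(n):
        # Recurrent iff the chain can always get back to i from wherever it goes.
        can_always_return = all(i in reach[j] for j in reach[i])
        labels.append("recurrent" if can_always_return else "transient")
    return labels


# Hypothetical example: states 0 and 1 leak toward state 2, which is absorbing.
P = [
    [0.5, 0.5, 0.0],
    [0.0, 0.5, 0.5],
    [0.0, 0.0, 1.0],
]

labels = classify(P)               # ['transient', 'transient', 'recurrent']
v2 = state_vector([1.0, 0.0, 0.0], P, 2)  # [0.25, 0.5, 0.25]
```

Here states 0 and 1 are transient because the chain can slip into state 2 and never come back, while state 2 is recurrent. The reachability test does not carry over to infinite state spaces, where recurrence depends on the actual return probabilities.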

From www.slideserve.com

PPT Markov Chains Lecture 5 PowerPoint Presentation, free download

From www.slideserve.com

PPT Markov Chains PowerPoint Presentation, free download ID6008214

From www.youtube.com

Markov Chains nstep Transition Matrix Part 3 YouTube

From www.youtube.com

Transient, recurrent states, and irreducible, closed sets in the Markov

From gregorygundersen.com

Ergodic Markov Chains

From www.analyticsvidhya.com

A Comprehensive Guide on Markov Chain Analytics Vidhya

From gregorygundersen.com

A Romantic View of Markov Chains

From www.slideserve.com

PPT Markov Chain Part 1 PowerPoint Presentation, free download ID

From www.youtube.com

Finite Math Markov Transition Diagram to Matrix Practice YouTube

From www.chegg.com

Solved 12.40Consider the threestate Markov chain shown in

From www.chegg.com

Solved Consider the Markov chain with transition matrix

From www.researchgate.net

Markov chain for a CBR composed of four nodes (N = 2). Transient states

From www.youtube.com

Markov Chain Theory Episode 2 Recurrent and Transient States

From www.geeksforgeeks.org

Finding the probability of a state at a given time in a Markov chain

From www.slideserve.com

PPT Markov Chain Part 1 PowerPoint Presentation, free download ID

From www.chegg.com

Consider the following Markov chain diagram for the

From www.youtube.com

What is a transient state in Markov chain? YouTube

From www.researchgate.net

Markov chains a, Markov chain for L = 1. States are represented by

From www.youtube.com

L25.5 Recurrent and Transient States Review YouTube

From www.slideserve.com

PPT Chapter 17 Markov Chains PowerPoint Presentation ID309355

From www.coursehero.com

[Solved] Transition Probability 2. A Markov chain with state space {1

From www.slideserve.com

PPT Markov Chain Models PowerPoint Presentation, free download ID

From www.chegg.com

Solved In this Markov chain all 1 transitions with nonzero

From www.researchgate.net

Twostate Markov chains. State transition diagrams (A) and example

From manuallistaeschylus.z14.web.core.windows.net

Transition Diagram Markov Chain

From www.slideserve.com

PPT Markov Chain Models PowerPoint Presentation, free download ID

From www.researchgate.net

State transition diagram for a threestate Markov chain Download

From www.ml-science.com

Markov Chains — The Science of Machine Learning & AI

From www.chegg.com

Solved c) For the Markov chain shown below identify the

From www.researchgate.net

State diagram of the Markov chain representing the states of the

From www.researchgate.net

A Markov chain with five states, where states 3 and 5 are absorbing