Unlocking Data Potential with Data Lake House Architecture

In today’s data-driven world, organizations need flexible yet robust systems to manage vast amounts of diverse data. Data Lake House architecture emerges as a transformative solution, merging the scalability of data lakes with the consistency and performance of data warehouses. This unified approach enables seamless analytics, governance, and operational efficiency, empowering businesses to unlock actionable insights faster than ever before.

The Essential Guide to a Data Lakehouse | AltexSoft

Source: www.altexsoft.com

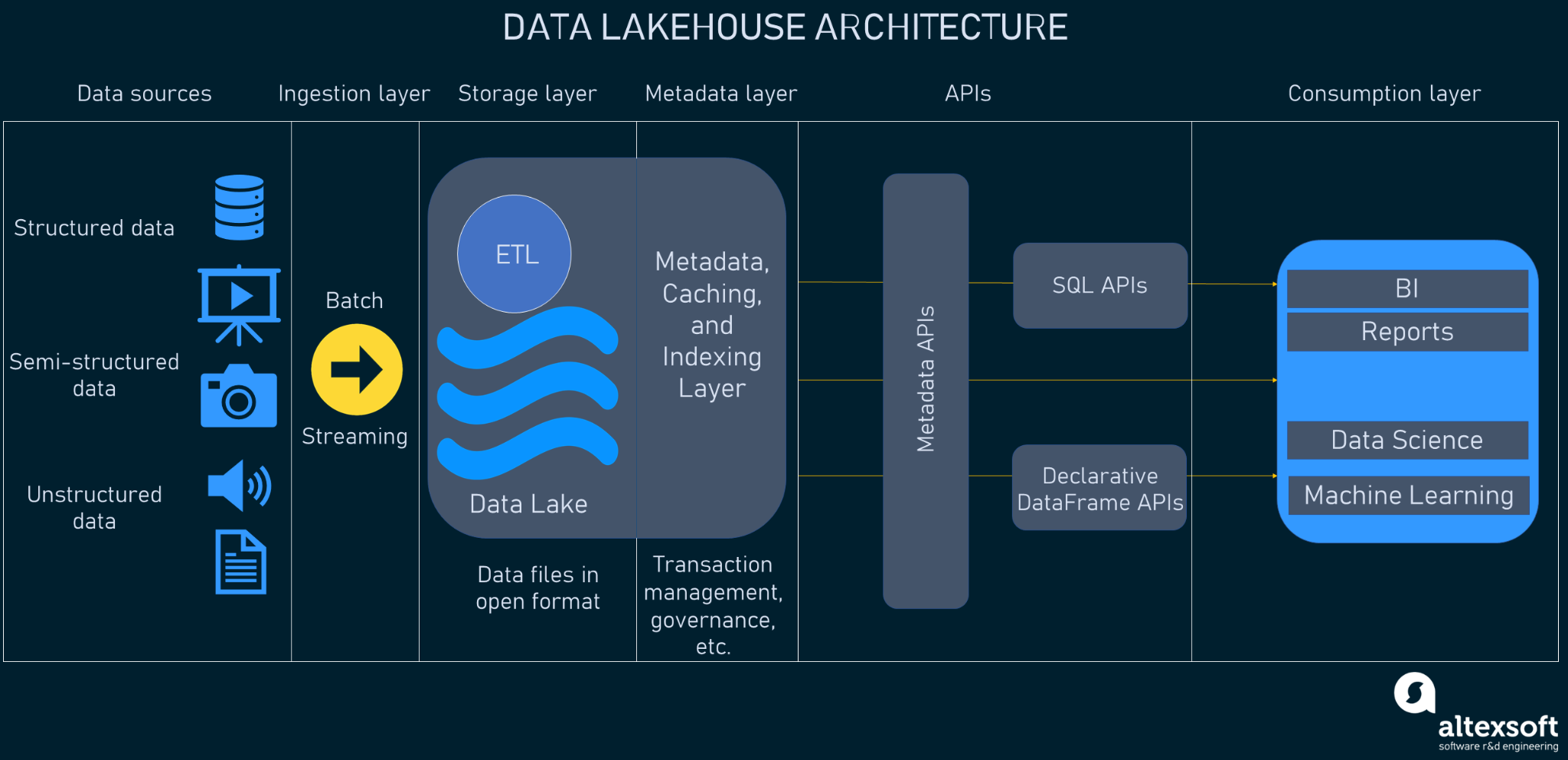

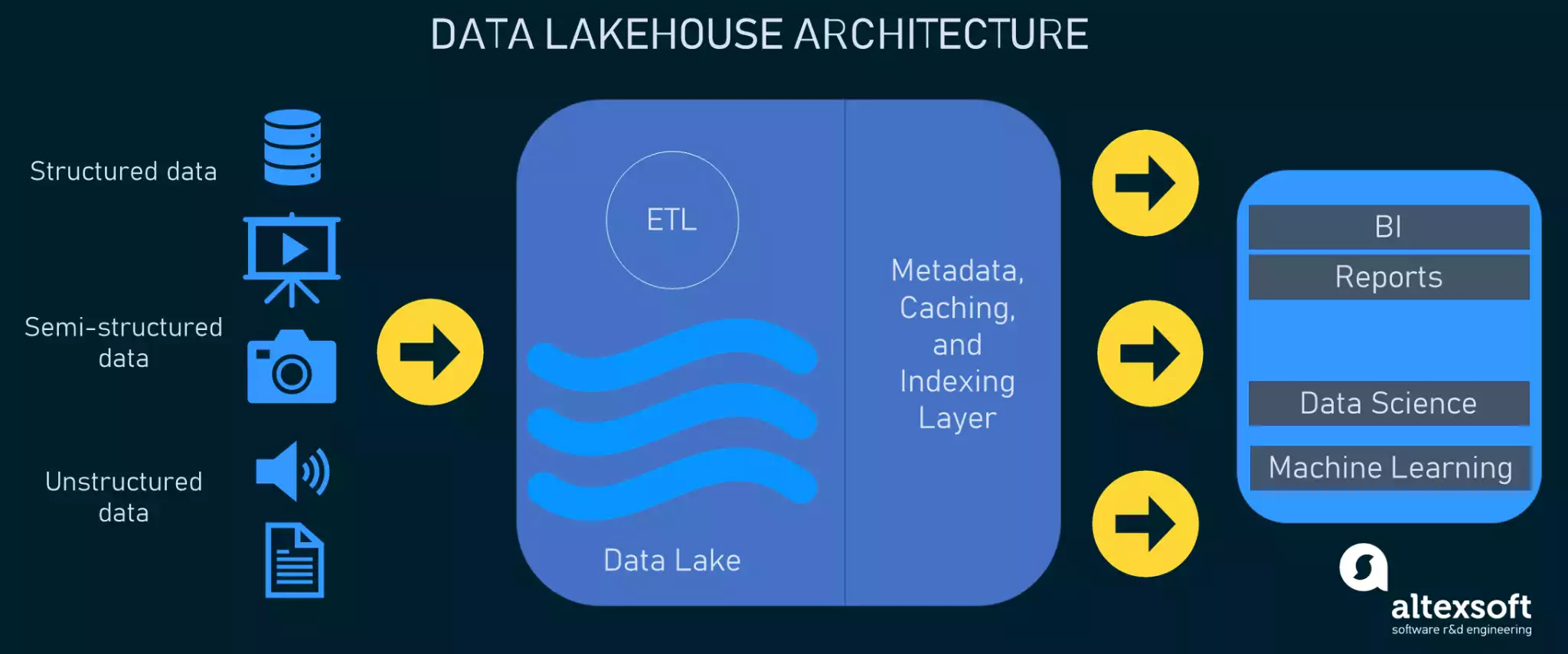

Core Components of Data Lake House Architecture

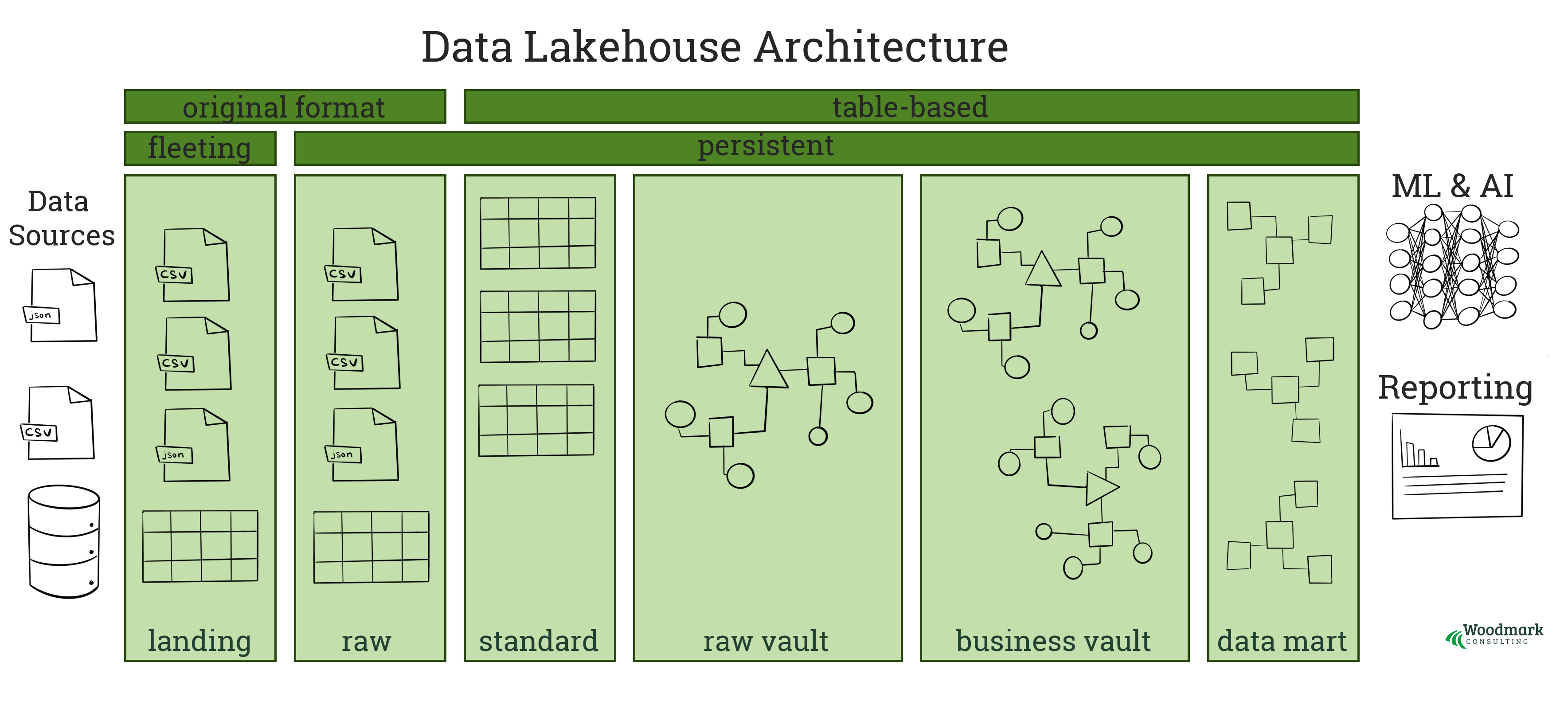

At its core, a Data Lake House integrates three key elements: scalable, low-cost storage that holds raw structured, semi-structured, and unstructured data; a transactional table layer that brings warehouse-style querying and analytics to that storage; and a unified metadata layer that supports data discoverability and governance. This architecture serves diverse workloads, from real-time reporting and machine learning to advanced data science, with fewer data silos and less ETL between systems. By providing ACID transactions, schema enforcement, and support for multiple query engines, it keeps data consistent across environments while remaining flexible.
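To make the ACID transactions and schema enforcement mentioned above more concrete, here is a minimal sketch of the general idea: data files become visible only once an entry is added to an ordered commit log. The `ToyTable` class, the `_log` directory, and the JSON file layout are all hypothetical and chosen for illustration; real table formats such as Delta Lake or Apache Iceberg use their own, far more complete designs.

```python
import json
import os
import tempfile
import uuid


class ToyTable:
    """Toy table (hypothetical): immutable data files plus an ordered commit log."""

    def __init__(self, root, schema):
        self.root = root
        self.schema = schema  # column name -> expected Python type
        self.log_dir = os.path.join(root, "_log")
        os.makedirs(self.log_dir, exist_ok=True)

    def _validate(self, rows):
        # Schema enforcement: reject the whole batch before anything is written.
        for row in rows:
            if set(row) != set(self.schema):
                raise ValueError(f"columns {sorted(row)} do not match the schema")
            for column, expected in self.schema.items():
                if not isinstance(row[column], expected):
                    raise ValueError(f"column {column!r} expects {expected.__name__}")

    def _versions(self):
        # Each log file is named after the version it commits.
        return sorted(int(name.split(".")[0]) for name in os.listdir(self.log_dir))

    def append(self, rows):
        self._validate(rows)
        versions = self._versions()
        version = versions[-1] + 1 if versions else 0
        data_name = f"part-{uuid.uuid4().hex}.json"
        with open(os.path.join(self.root, data_name), "w") as handle:
            json.dump(rows, handle)
        # Mode "x" fails if the log entry already exists, so two writers
        # cannot both claim the same version number.
        with open(os.path.join(self.log_dir, f"{version:08d}.json"), "x") as handle:
            json.dump({"add": data_name}, handle)
        return version

    def read(self, version=None):
        # Readers only see files referenced by the log, optionally up to a version.
        rows = []
        for v in self._versions():
            if version is not None and v > version:
                break
            with open(os.path.join(self.log_dir, f"{v:08d}.json")) as handle:
                entry = json.load(handle)
            with open(os.path.join(self.root, entry["add"])) as handle:
                rows.extend(json.load(handle))
        return rows


if __name__ == "__main__":
    table = ToyTable(tempfile.mkdtemp(), {"id": int, "name": str})
    table.append([{"id": 1, "name": "alice"}])
    print(table.read())
```

A batch that violates the schema raises `ValueError` before any file is written, and a data file that was written but never committed stays invisible to readers. The sketch ignores deletes, concurrent readers seeing a half-written log entry, and file compaction, all of which production formats have to handle.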

5 Layers Of Data Lakehouse Architecture Explained

Source: www.montecarlodata.com

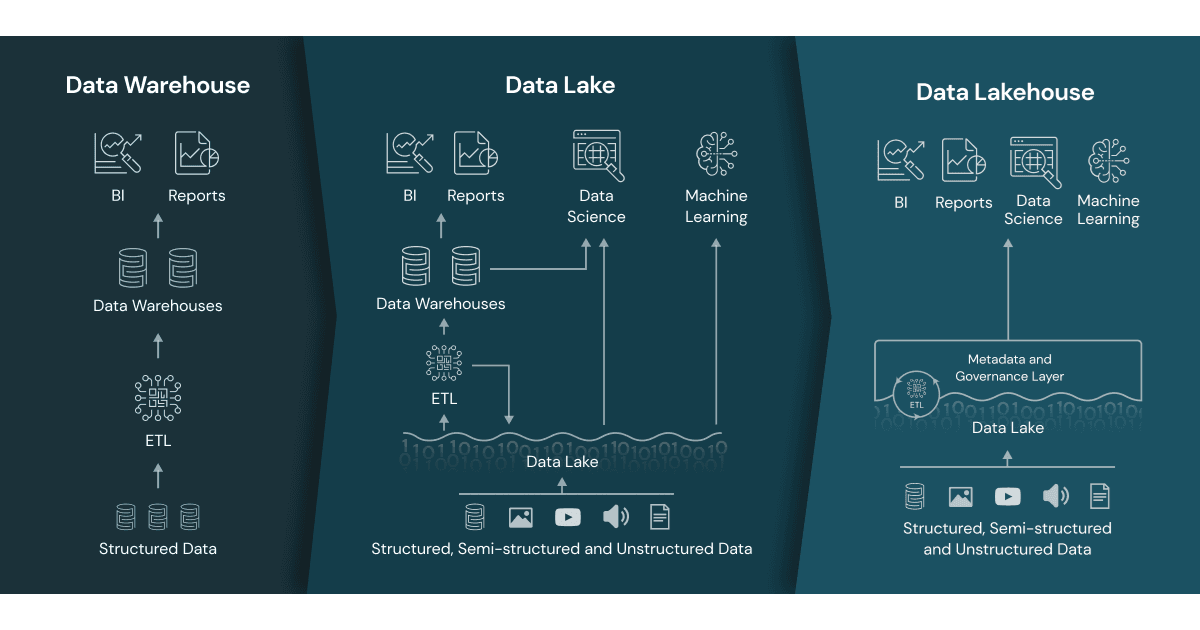

Benefits Over Traditional Data Platforms

Unlike legacy data lakes that struggle with performance and governance or traditional data warehouses limited by rigid schemas, Data Lake House delivers a unified ecosystem. It eliminates data silos by providing a single source of truth, reduces pipeline complexity with automated ingestion and transformation, and enhances security through built-in access controls and audit trails. Organizations benefit from faster time-to-insight, improved collaboration across teams, and cost efficiencies via optimized storage and compute resource usage.

What Is a Data Lakehouse? | Blog | Fivetran

Source: fivetran.com

Building a Scalable and Future-Proof Data Strategy

Implementing a Data Lake House requires careful planning around data ingestion, cataloging, governance, and performance tuning. Modern platforms support cloud-native elasticity, so compute can scale up during peak workloads and back down afterwards. Adopting open table formats such as Apache Iceberg or Delta Lake improves interoperability and reduces the risk of vendor lock-in. With robust metadata management, teams can catalog and profile data and track its lineage. As data volumes grow and analytical needs evolve, this architecture positions enterprises to adapt while maintaining data quality and compliance.
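The cataloging and lineage tracking described above can be sketched in a few lines. This is an illustrative in-memory model only: the `Catalog` and `Dataset` classes, the dataset names, and the column type strings are assumptions made for the example and do not correspond to any particular catalog product.

```python
from dataclasses import dataclass, field


@dataclass
class Dataset:
    """Catalog entry (hypothetical): a name, its columns, and its direct inputs."""
    name: str
    columns: dict
    upstream: list = field(default_factory=list)


class Catalog:
    def __init__(self):
        self._datasets = {}

    def register(self, name, columns, upstream=()):
        # Refuse lineage edges that point at datasets the catalog does not know.
        missing = [u for u in upstream if u not in self._datasets]
        if missing:
            raise KeyError(f"unknown upstream datasets: {missing}")
        self._datasets[name] = Dataset(name, dict(columns), list(upstream))

    def lineage(self, name):
        """Return all transitive upstream dataset names, nearest first."""
        seen = []
        queue = list(self._datasets[name].upstream)
        while queue:
            current = queue.pop(0)
            if current not in seen:
                seen.append(current)
                queue.extend(self._datasets[current].upstream)
        return seen

    def find_by_column(self, column):
        """Discoverability: list datasets that expose a given column."""
        return sorted(n for n, d in self._datasets.items() if column in d.columns)


if __name__ == "__main__":
    catalog = Catalog()
    catalog.register("raw_orders", {"order_id": "string", "amount": "string"})
    catalog.register("clean_orders", {"order_id": "string", "amount": "double"},
                     upstream=["raw_orders"])
    catalog.register("daily_revenue", {"day": "date", "revenue": "double"},
                     upstream=["clean_orders"])
    print(catalog.lineage("daily_revenue"))
    print(catalog.find_by_column("amount"))
```

Recording the upstream datasets at registration time is what makes lineage queries cheap later; a real catalog would also persist this metadata and attach ownership, access policies, and profiling statistics.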


Data Lakehouse Architecture, Implementation and Best Practices

Source: insights.axtria.com

Data Lake House architecture represents the next evolution in data management, unifying flexibility, performance, and governance in one cohesive framework. By breaking down silos and enabling scalable, secure analytics, it helps organizations get more value from their data. For businesses that depend on analytics, adopting a Data Lake House is becoming an important part of staying competitive in an increasingly data-centric landscape.


A data lakehouse is a data management system that combines the flexibility, cost-efficiency, and scale of data lakes with the data management and ACID transactions of data warehouses. Metadata layers, query engine optimizations, and open data formats allow it to support BI and ML on all data.

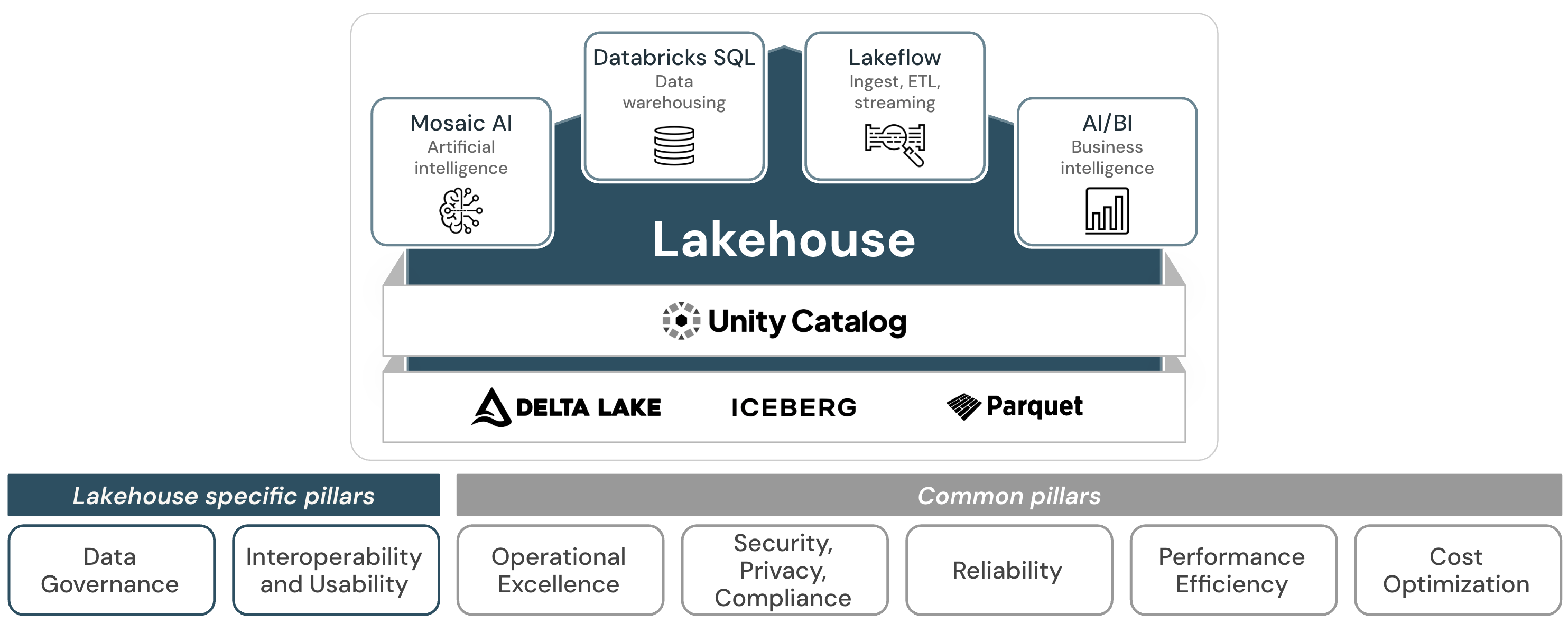

Accelerating AI Adoption with Unified Databricks Lakehouse Architecture

Source: www.ltimindtree.com

On platforms such as Azure Databricks, the lakehouse is an architectural pattern that blends a data lake and a data warehouse. Data lakehouses enable machine learning, business intelligence, and predictive analytics, allowing organizations to use low-cost, flexible storage for all types of data: structured, semi-structured, and unstructured.

Data architectures for ML applications and AI - Woodmark Consulting

Source: www.woodmark.de

A data lakehouse combines data lake and data warehouse features. It is usually explained by comparison with both, and it is commonly organized using architecture patterns such as medallion.
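The medallion pattern mentioned above is usually described as three stages: bronze (raw data as ingested), silver (cleaned and deduplicated), and gold (aggregated for reporting). The sketch below illustrates that flow on plain Python lists. The function names, the `order_id`, `region`, and `amount` fields, and the cleaning rules are assumptions made for the example, not part of any specific platform.

```python
from collections import defaultdict


def to_silver(bronze_rows):
    """Clean raw records: drop incomplete or unparsable rows, dedupe by order_id."""
    silver, seen = [], set()
    for row in bronze_rows:
        order_id = row.get("order_id")
        amount = row.get("amount")
        if order_id is None or amount is None or order_id in seen:
            continue
        try:
            amount = float(amount)
        except (TypeError, ValueError):
            continue  # amount is not numeric, so the row stays in bronze only
        seen.add(order_id)
        silver.append({
            "order_id": order_id,
            "region": str(row.get("region", "unknown")).lower(),
            "amount": amount,
        })
    return silver


def to_gold(silver_rows):
    """Aggregate cleaned records into a reporting view: revenue per region."""
    totals = defaultdict(float)
    for row in silver_rows:
        totals[row["region"]] += row["amount"]
    return dict(totals)


if __name__ == "__main__":
    bronze = [
        {"order_id": 1, "region": "EU", "amount": "10.5"},
        {"order_id": 1, "region": "EU", "amount": "10.5"},  # duplicate
        {"order_id": 2, "region": "us", "amount": 4},
        {"order_id": 3, "region": "EU", "amount": "n/a"},   # bad amount
    ]
    print(to_gold(to_silver(bronze)))
```

Keeping the bronze data untouched means the silver and gold stages can be recomputed whenever the cleaning rules change, which is the main reason for layering the data this way.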

Data Lakehouse Architecture: Well-Architected Framework from Databricks ...

Source: learn.microsoft.com

Open table formats such as Iceberg, Delta Lake, and DuckLake make it possible to build your own lakehouse. Data lakehouse architecture combines the benefits of data warehouses and data lakes, bringing together the structure and performance of a data warehouse with the flexibility of a data lake. The architecture consists of several key layers that help an organization run fast analytics on structured and unstructured data.

The well-architected data lakehouse guidance for the Databricks Data Intelligence Platform offers architectural guidance, best practices, and principles for building a reliable, efficient, and cost-effective lakehouse. A data lakehouse is a hybrid data architecture that combines the best attributes of data warehouses and data lakes to address their respective limitations.

This approach to data management brings the transactional capabilities of data warehouses to cloud-based data lakes, offering scalability at lower cost. A lakehouse is an open architecture that combines the best elements of data lakes and data warehouses, and it emerged to address the limitations of data lakes.

Lakehouses are enabled by a system design that implements data structures and data management features similar to those in a data warehouse directly on top of low-cost cloud storage. The result combines the best of data warehouses and data lakes in a unified architecture.