Advancements in American Sign Language Recognition Technology

As digital inclusion becomes a global priority, advances in AI and machine learning are changing how deaf individuals communicate and access information through American Sign Language (ASL) recognition.

Technological Foundations of American Sign Language Recognition

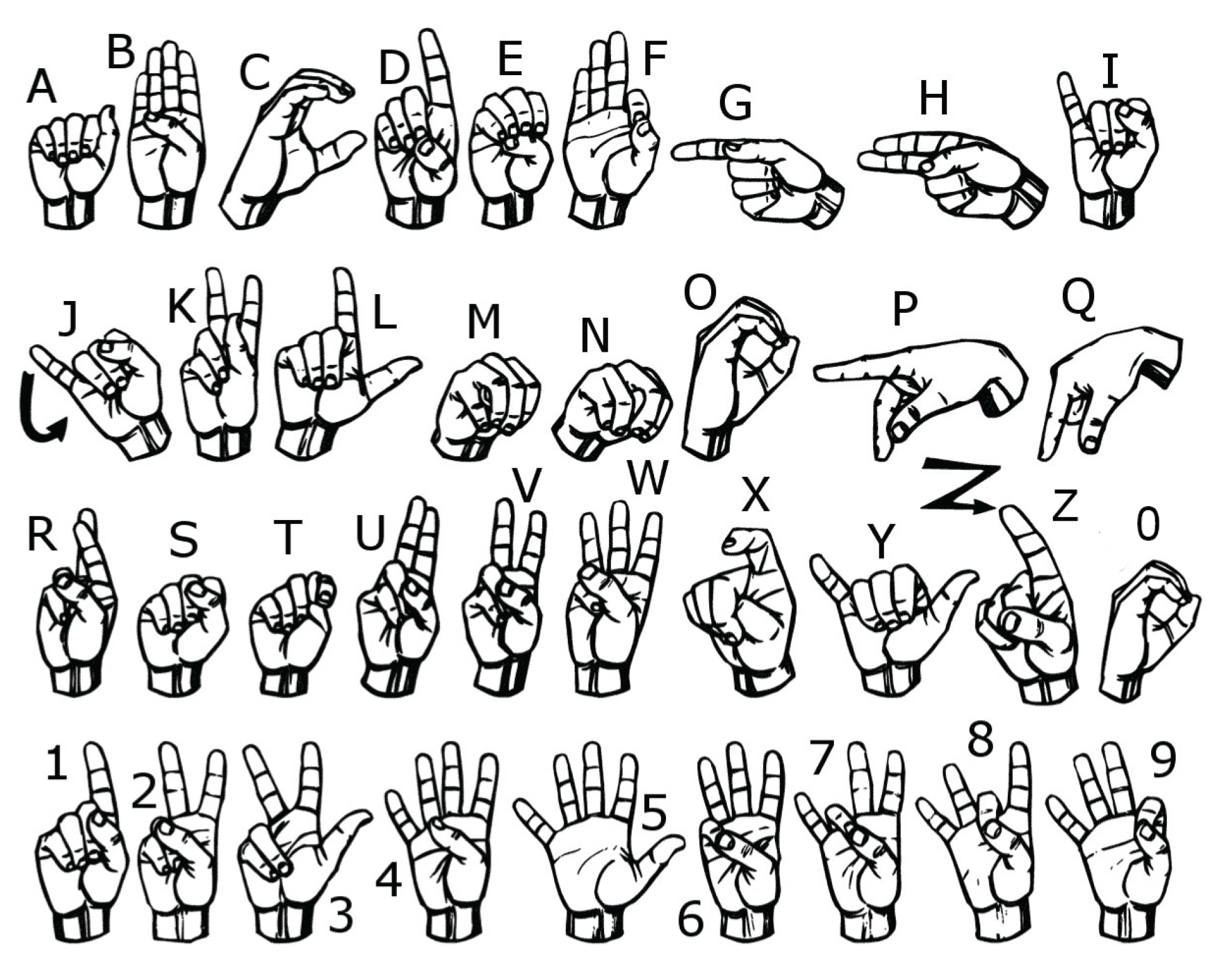

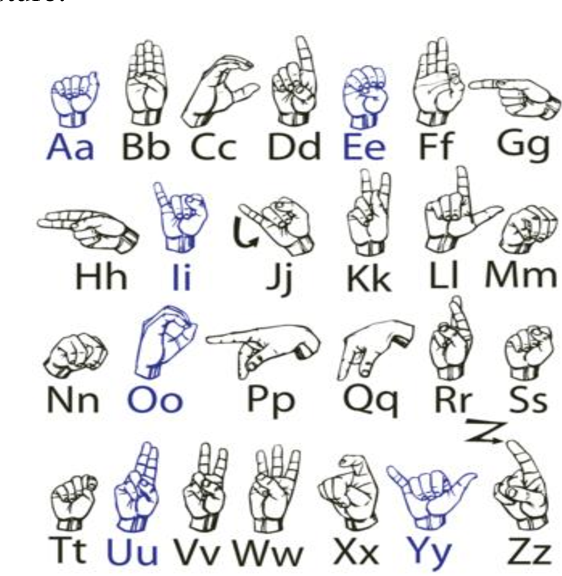

American Sign Language recognition relies on computer vision, deep learning, and motion tracking to interpret handshapes, facial expressions, and body language. Recent developments in convolutional neural networks and gesture segmentation have significantly improved accuracy, enabling real-time translation between ASL and spoken English. These systems leverage extensive datasets of signed language to train models capable of recognizing regional variations and nuanced signing styles, making technology more inclusive and responsive.

Applications and Impact on Deaf Communities

Modern ASL recognition powers accessible tools like mobile apps, video interpreters, and educational platforms that bridge communication gaps in classrooms, workplaces, and public services. These innovations empower deaf individuals with greater autonomy, fostering inclusion in digital spaces. Healthcare providers, educators, and government agencies increasingly adopt ASL recognition to ensure equitable access to vital services, reducing barriers and enhancing daily interactions.

Challenges and Future Directions

Despite progress, challenges persist, including variability in signing speed, regional dialects, and the need for multimodal analysis combining gestures and facial cues. Ongoing research focuses on improving contextual understanding, reducing latency, and expanding support for diverse signing styles. Future advancements promise seamless integration with augmented reality and wearable devices, further empowering ASL users and promoting universal design in technology.
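One common way to reduce sensitivity to signing speed is to resample every gesture to a fixed number of frames before classification. The sketch below is only an illustration of that idea, not a method taken from any of the systems described here; the function name `resample_sequence` and the representation of a gesture as a list of per-frame feature vectors are assumptions.

```python
def resample_sequence(frames, target_len):
    """Linearly interpolate a gesture to exactly target_len frames.

    frames: list of equal-length feature vectors, one per video frame
            (hypothetical representation, e.g. flattened hand landmarks).
    """
    if not frames:
        raise ValueError("frames must not be empty")
    if target_len < 1:
        raise ValueError("target_len must be at least 1")
    # A single input frame (or a single requested frame) cannot be
    # interpolated, so the first frame is repeated instead.
    if len(frames) == 1 or target_len == 1:
        return [list(frames[0]) for _ in range(target_len)]

    last = len(frames) - 1
    resampled = []
    for i in range(target_len):
        # Position of output frame i on the input time axis.
        pos = i * last / (target_len - 1)
        lo = int(pos)
        hi = min(lo + 1, last)
        t = pos - lo
        resampled.append(
            [(1 - t) * a + t * b for a, b in zip(frames[lo], frames[hi])]
        )
    return resampled
```

With this kind of preprocessing, a sign performed quickly (few frames) and the same sign performed slowly (many frames) map to sequences of the same length, which a downstream model can compare directly.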

American Sign Language recognition is a cornerstone of inclusive communication technology, driven by continuous innovation and user-centered design. As AI capabilities evolve, so does the potential to dissolve barriers and enrich lives across the deaf community, moving toward a future where every voice is heard.

This project aims to detect American Sign Language using PyTorch and deep learning. The neural network can also detect sign language letters in real time from a webcam video feed. A related program lets users search dictionaries of American Sign Language (ASL) to look up the meaning of ...
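Per-frame predictions from a webcam feed tend to flicker between letters, so real-time systems often smooth them over a short window. The sketch below shows one simple way to do that with a majority vote; it is an assumption for illustration, not the project's actual code, and the class name `PredictionSmoother` and its `window` and `min_votes` parameters are hypothetical.

```python
from collections import Counter, deque


class PredictionSmoother:
    """Majority vote over the most recent per-frame letter predictions."""

    def __init__(self, window=5, min_votes=3):
        # Only the last `window` predictions are kept.
        self.history = deque(maxlen=window)
        self.min_votes = min_votes

    def update(self, letter):
        """Add one frame's prediction and return the stable letter, if any.

        Returns None until some letter has at least `min_votes`
        occurrences within the current window.
        """
        self.history.append(letter)
        label, votes = Counter(self.history).most_common(1)[0]
        return label if votes >= self.min_votes else None
```

In a live loop, `update` would be called once per frame with the classifier's top prediction, and the on-screen letter would change only when the returned value changes.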

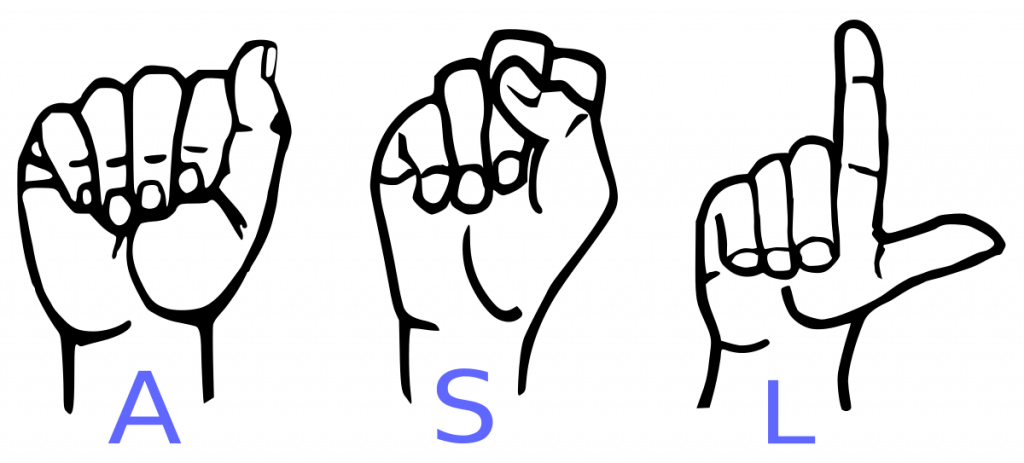

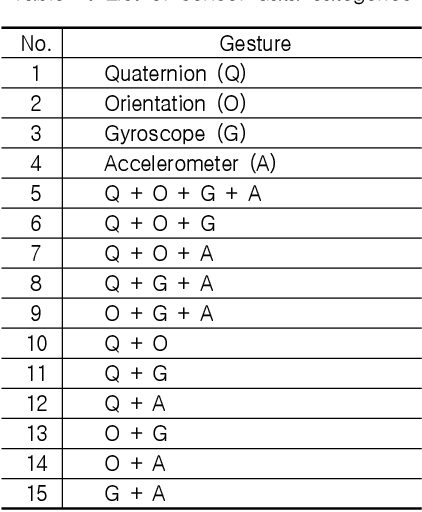

This article discusses a simple and efficient vision-based approach to American Sign Language (ASL) alphabet recognition that handles both static and dynamic gestures. MediaPipe, introduced by Google, was used to extract hand landmarks, and a custom dataset was created and used for the experimental study. Gesture recognition plays a vital role in computer vision, especially for interpreting sign language and enabling human ...
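Landmark-based approaches usually normalize the hand landmarks before classification so that the features do not depend on where the hand sits in the frame or how close it is to the camera. The following is a minimal sketch of one such normalization, assuming 21 two-dimensional landmarks per hand with the wrist at index 0 (the layout MediaPipe Hands uses). The function name `normalize_landmarks` is hypothetical, and the article's own preprocessing may differ.

```python
import math


def normalize_landmarks(landmarks):
    """Turn 21 (x, y) hand landmarks into a 42-value feature vector.

    The wrist (index 0) is moved to the origin and all points are scaled
    so that the landmark farthest from the wrist lies at distance 1.
    """
    if len(landmarks) != 21:
        raise ValueError("expected 21 (x, y) hand landmarks")

    wrist_x, wrist_y = landmarks[0]
    shifted = [(x - wrist_x, y - wrist_y) for x, y in landmarks]

    scale = max(math.hypot(x, y) for x, y in shifted)
    if scale == 0:
        raise ValueError("degenerate hand: all landmarks coincide")

    features = []
    for x, y in shifted:
        features.extend((x / scale, y / scale))
    return features
```

The resulting vector can be fed to any classifier; because of the translation and scaling steps, the same handshape produces nearly the same features wherever it appears in the image.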

American Alphabet Sign Language Recognition System

The most ordinary form of communication relies on alphabetic expression through speech, writing, or sign language. One study, described as the first of its kind, recognizes American Sign Language (ASL) alphabet gestures using computer vision. Researchers developed a custom dataset of 29,820 static images of ASL hand ...

Abstract: Our paper presents a two-pronged ablation study of sign language recognition for American Sign Language (ASL) characters on two datasets. Experimentation revealed that hyperparameter tuning, data augmentation, and hand landmark detection can help improve accuracy. The final model achieved a test accuracy of 96.42%.
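The abstract does not say which augmentations were used. As a hedged illustration of what data augmentation can look like when a sample is a set of hand keypoints, the sketch below applies a small random rotation and an optional mirror about the centroid. The function name `augment_points` and its parameters are hypothetical and are not taken from the paper.

```python
import math
import random


def augment_points(points, max_rotation_deg=10.0, mirror_prob=0.5, rng=None):
    """Return a randomly rotated (and possibly mirrored) copy of 2D points.

    points: list of (x, y) tuples, e.g. hand keypoints for one sample.
    rng:    optional random.Random instance for reproducible augmentation.
    """
    if rng is None:
        rng = random.Random()

    angle = math.radians(rng.uniform(-max_rotation_deg, max_rotation_deg))
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    # Mirroring roughly simulates a signer using the other hand.
    mirror = rng.random() < mirror_prob

    # Transform about the centroid so the sample stays in place.
    cx = sum(x for x, _ in points) / len(points)
    cy = sum(y for _, y in points) / len(points)

    augmented = []
    for x, y in points:
        dx, dy = x - cx, y - cy
        if mirror:
            dx = -dx
        augmented.append(
            (cx + dx * cos_a - dy * sin_a, cy + dx * sin_a + dy * cos_a)
        )
    return augmented
```

Both transformations preserve each point's distance from the centroid, so the handshape itself is unchanged while its orientation varies between training epochs.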

Future work includes running the model for a greater number of ...

American Sign Language (ASL) recognition aims to recognize hand gestures, and it is an important means of communication between the deaf community and hearing people. However, existing sign language recognition algorithms still have drawbacks, such as difficulty recognizing hand movements and low recognition accuracy for most sign language recognition ...

A Modified Convolutional Neural ...

To bridge this gap and connect deaf and hard-of-hearing people with the rest of the world, a deep learning based Sign Language Recognition (SLR) model has been introduced to change how sign language is integrated into daily life and to enhance communication, accessibility, and inclusivity.

Image of Sign Language 'F' from Pexels

Sign Language is a form of communication used primarily by people who are deaf or hard of hearing.

This type of gesture ...

"By improving American Sign Language recognition, this work contributes to creating tools that can enhance communication for the deaf and hard-of-hearing community," says Stella Batalama, PhD, dean, FAU College of Engineering and Computer Science. "The model's ability to reliably interpret gestures opens the door to more inclusive solutions that support accessibility, making daily ...
