As digital transformation accelerates, edge computing has emerged as a vital architecture that processes data near its source, reducing latency and bandwidth use. Understanding the types of edge computing is essential for designing efficient, responsive systems.

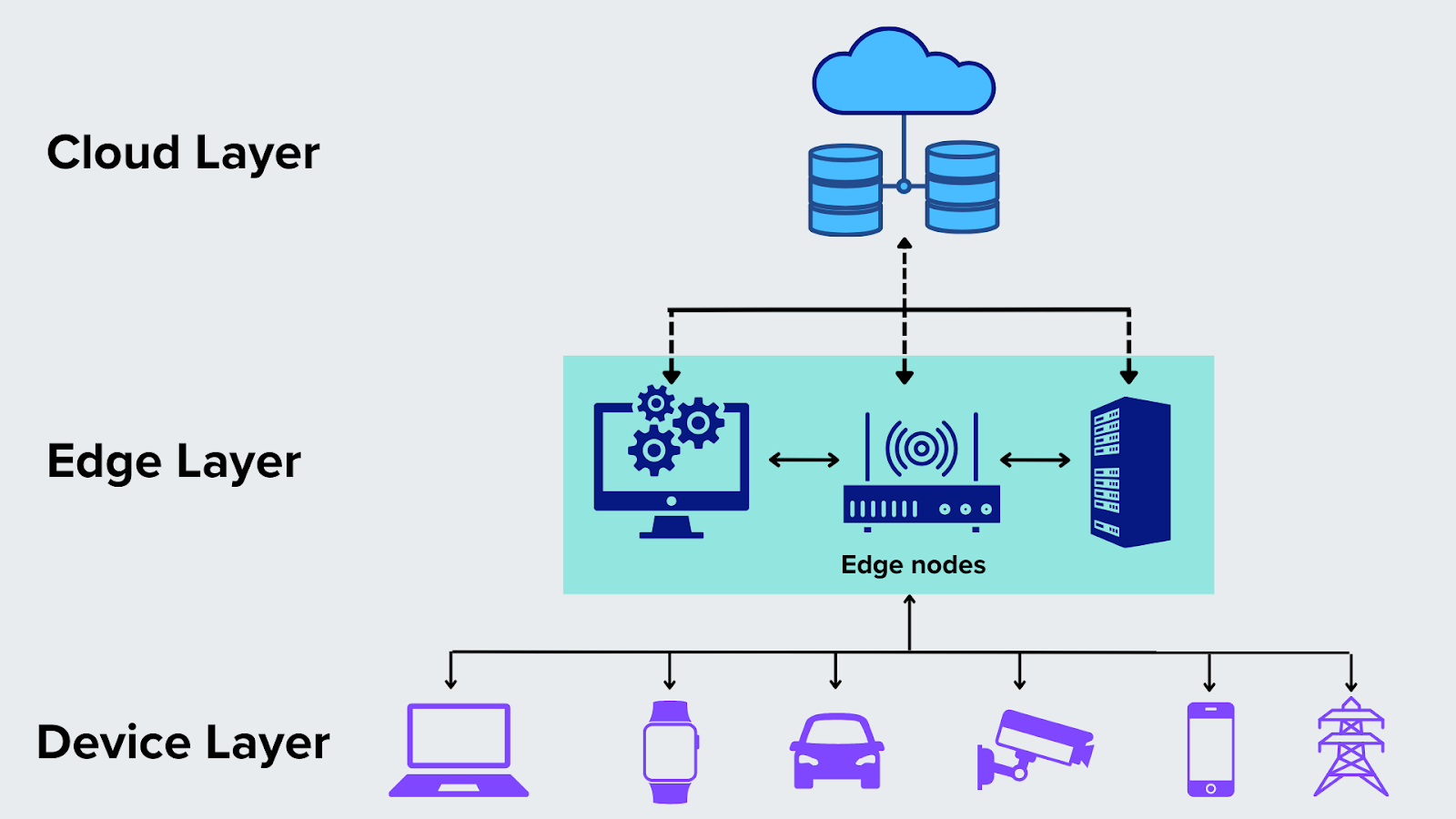

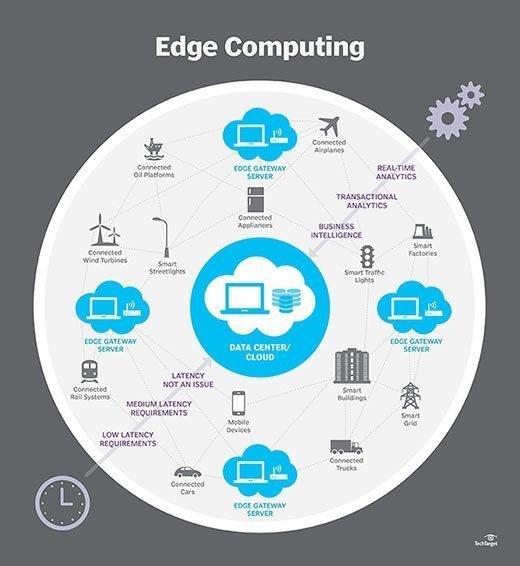

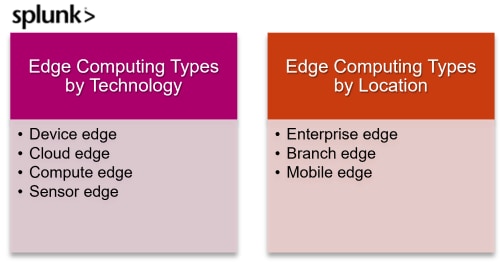

One primary type is **cloud-edge computing**, where cloud resources extend to distributed edge nodes, enabling scalable analytics and centralized management while maintaining low-latency operations. This hybrid model supports applications like real-time video analytics and smart city infrastructure.
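
A cloud-edge deployment has to decide, task by task, where work runs. The sketch below shows one possible placement rule. It assumes a hypothetical `place_task` helper and made-up round-trip times (RTTs). The rule runs a task in the cloud when the cloud's RTT fits the task's latency budget, and otherwise falls back to the nearby edge node. It illustrates the idea and is not a production scheduler.

```python
def place_task(latency_budget_ms: float, edge_rtt_ms: float, cloud_rtt_ms: float) -> str:
    """Choose "cloud" or "edge" for a task (hypothetical placement policy).

    Assumption: the cloud is preferred whenever it meets the latency budget,
    because it offers centralized management and elastic capacity; the edge
    node is used only for work the cloud is too far away to serve in time.
    """
    if cloud_rtt_ms <= latency_budget_ms:
        return "cloud"
    if edge_rtt_ms <= latency_budget_ms:
        return "edge"
    raise ValueError("neither tier meets the latency budget")


# Illustrative numbers only: a 5 ms edge RTT and an 80 ms cloud RTT.
print(place_task(latency_budget_ms=20, edge_rtt_ms=5, cloud_rtt_ms=80))    # edge
print(place_task(latency_budget_ms=1000, edge_rtt_ms=5, cloud_rtt_ms=80))  # cloud
```

Under these assumptions, per-frame video analytics with a tight budget lands on the edge, while a nightly report lands in the cloud. A real scheduler would also weigh node capacity, cost, and where the data lives.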

Another key form is **on-premises edge computing**, where data processing occurs within local facilities such as factories, hospitals, or retail stores. This setup ensures data sovereignty, enhances security, and supports mission-critical operations without relying on distant cloud servers.
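
One way an on-premises deployment supports data sovereignty is data minimization. Raw records are processed inside the facility, and only aggregates are shared externally. The sketch below assumes hypothetical record fields (`patient_id`, `value`) and a hypothetical `summarize_on_site` helper. Which fields count as sensitive remains a policy decision for each site.

```python
from statistics import mean


def summarize_on_site(readings: list[dict]) -> dict:
    """Aggregate raw readings locally (hypothetical helper).

    The raw records, including identifying fields such as "patient_id",
    stay on premises; only the returned summary is meant to leave the site.
    """
    if not readings:
        raise ValueError("no readings to summarize")
    values = [r["value"] for r in readings]
    return {"count": len(values), "mean": mean(values), "max": max(values)}


raw = [
    {"patient_id": "p-001", "value": 72.0},
    {"patient_id": "p-002", "value": 88.0},
]
print(summarize_on_site(raw))  # {'count': 2, 'mean': 80.0, 'max': 88.0}
```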

**Mobile edge computing (MEC)** brings processing power into cellular networks, close to mobile devices. In 5G networks, this placement reduces latency for applications such as augmented reality, autonomous vehicles, and live streaming.
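
To see why latency matters for autonomous vehicles, consider how far a vehicle travels while it waits for a network round trip. The latency figures below are illustrative assumptions, not measurements of any particular network. The helper name `distance_during_delay_m` is hypothetical.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres a vehicle travels at constant speed during a given delay."""
    speed_m_per_s = speed_kmh / 3.6  # 1 km/h = 1/3.6 m/s
    return speed_m_per_s * (delay_ms / 1000.0)


# Illustrative round-trip times for a distant cloud versus a nearby MEC host.
for label, rtt_ms in [("distant cloud", 100.0), ("MEC host", 10.0)]:
    print(f"{label}: {distance_during_delay_m(100.0, rtt_ms):.2f} m at 100 km/h")
```

At 100 km/h, a 100 ms round trip corresponds to about 2.8 m of travel, compared with under 0.3 m for a 10 ms round trip.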

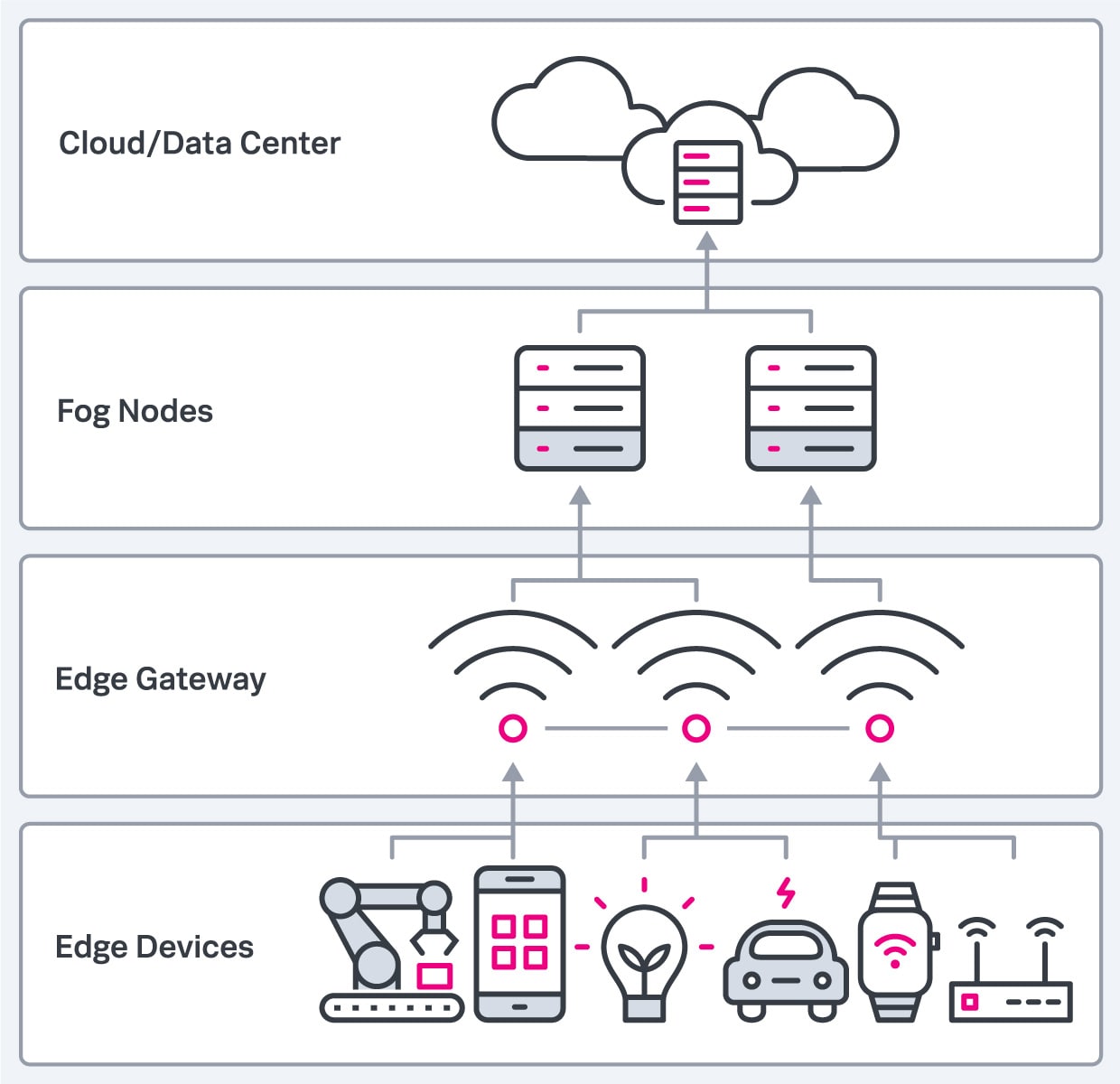

**Fog computing** acts as an intermediary layer between edge devices and the cloud, aggregating and filtering data across multiple edge nodes before sending relevant insights upward, improving scalability and resource efficiency.
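
A fog node's aggregation-and-filtering role can be sketched as a function that combines readings from several edge nodes into one compact upstream message. The node names, the temperature-style readings, and the alert threshold are hypothetical. A real fog layer would also handle transport, buffering, and node failures.

```python
from statistics import mean


def fog_aggregate(node_readings: dict[str, list[float]], threshold: float) -> dict:
    """Combine readings from several edge nodes into one upstream summary."""
    # Aggregate: pool every sample reported by the edge nodes.
    samples = [v for values in node_readings.values() for v in values]
    # Filter: name only the nodes whose peak reading exceeded the threshold.
    alerts = sorted(
        node for node, values in node_readings.items()
        if values and max(values) > threshold
    )
    return {
        "nodes": len(node_readings),
        "samples": len(samples),
        "mean": mean(samples) if samples else None,
        "alerts": alerts,
    }


readings = {"line-1": [20.0, 22.0], "line-2": [30.0, 34.0], "line-3": [21.0]}
print(fog_aggregate(readings, threshold=30.0))
# {'nodes': 3, 'samples': 5, 'mean': 25.4, 'alerts': ['line-2']}
```

Only one small summary travels to the cloud instead of every raw sample, which is where the scalability and resource-efficiency gains come from.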

Finally, **distributed edge computing** leverages decentralized networks of edge servers, enabling localized decision-making across geographically dispersed locations—ideal for IoT deployments and smart grids.
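
A common building block for geographically dispersed edge deployments is routing each device to its closest edge server, so that decisions are made locally rather than at a distant central site. The sketch below picks the nearest server by great-circle (haversine) distance. The site names are hypothetical, and real systems often route by measured latency or network topology rather than by geography.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    # Clamp to guard against floating-point values slightly above 1.
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


def nearest_node(lat: float, lon: float, nodes: dict[str, tuple[float, float]]) -> str:
    """Return the name of the edge node closest to (lat, lon)."""
    return min(nodes, key=lambda name: haversine_km(lat, lon, *nodes[name]))


edge_sites = {  # hypothetical edge sites located in three cities
    "edge-paris": (48.8566, 2.3522),
    "edge-berlin": (52.5200, 13.4050),
    "edge-madrid": (40.4168, -3.7038),
}
print(nearest_node(45.7640, 4.8357, edge_sites))  # a device in Lyon -> edge-paris
```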

Each edge computing type offers distinct advantages for building responsive, intelligent systems. As edge adoption grows and the types continue to evolve, choosing the right model, whether to enhance IoT networks or to power 5G applications, becomes critical for performance and scalability. Embrace edge computing to unlock real-time insights and future-proof your digital infrastructure.

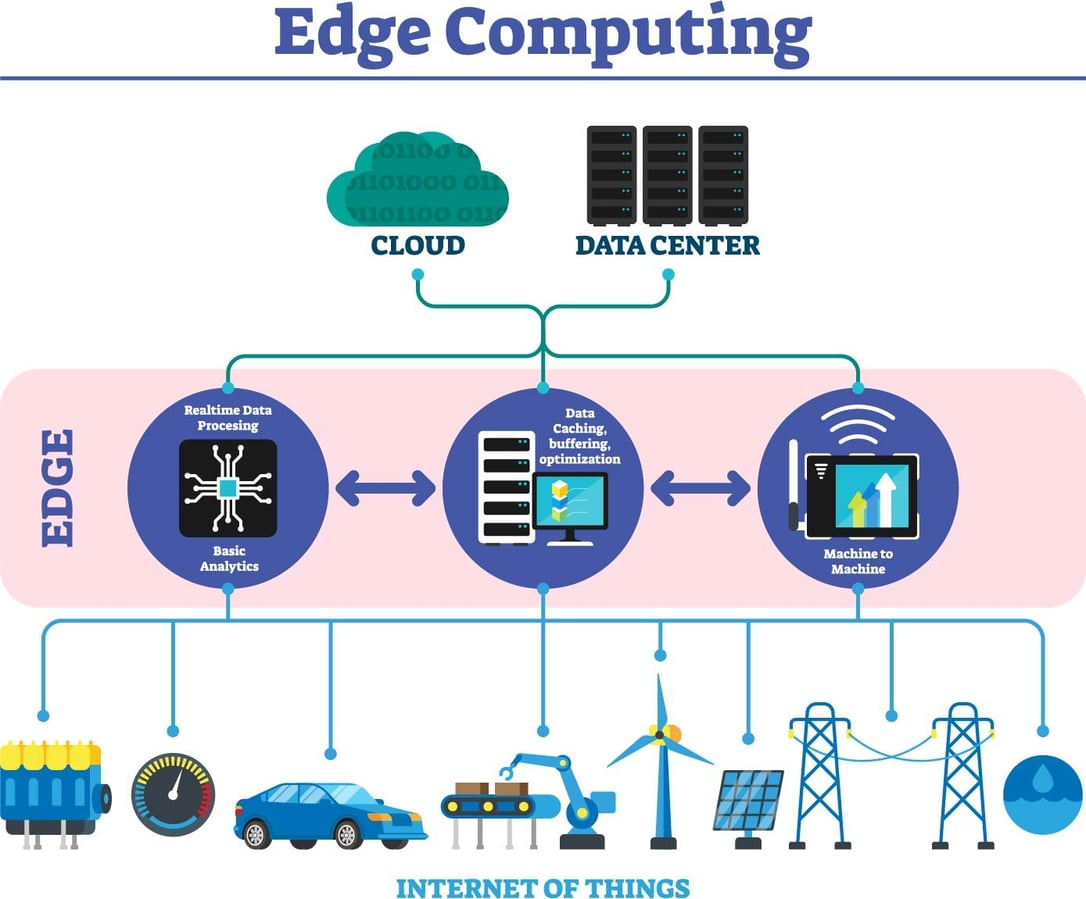

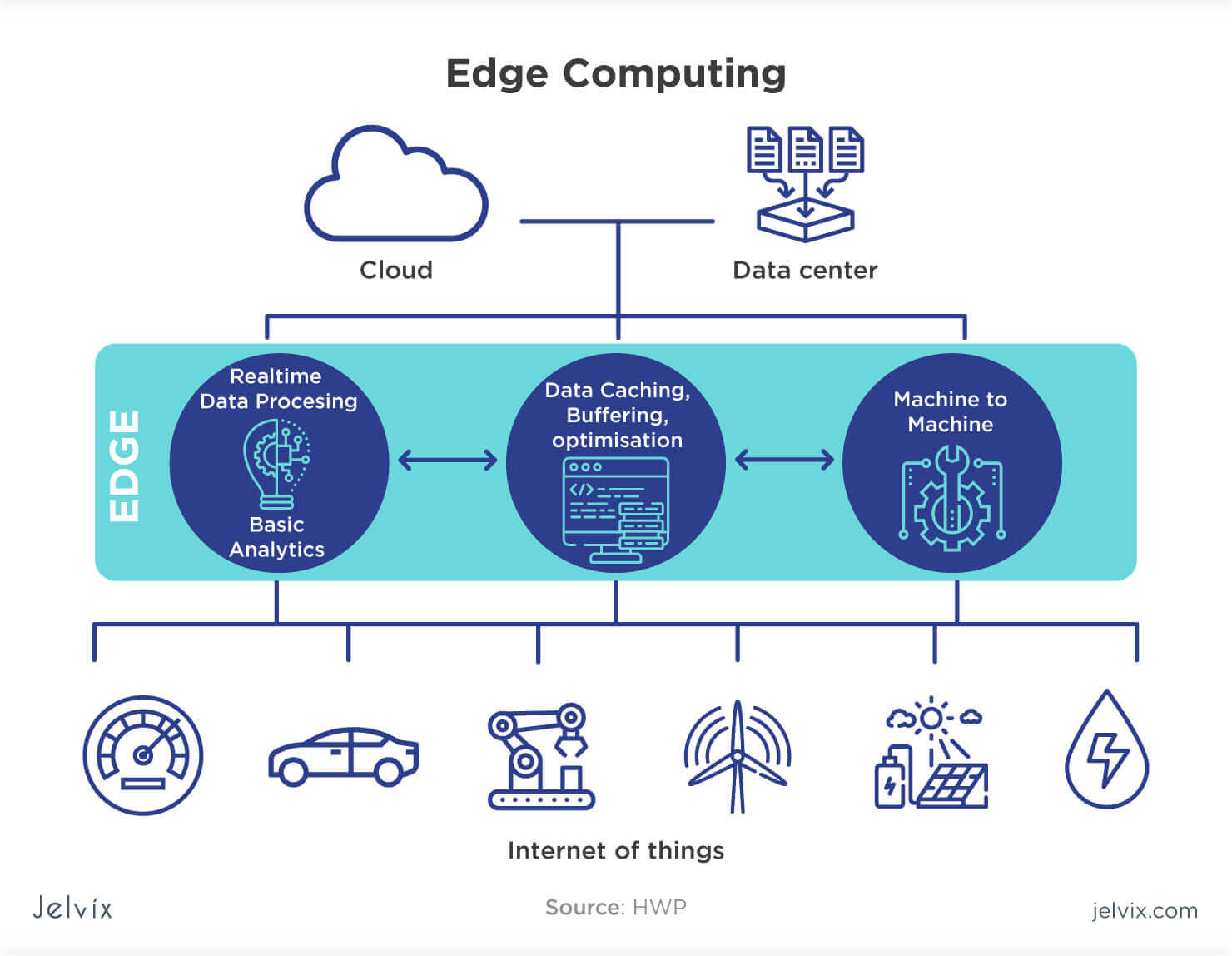

Edge computing is a distributed computing model that brings computation and data storage closer to the sources of data.

More broadly, the term refers to any design that pushes computation physically closer to the user to reduce latency compared with running the application in a centralized data center. Edge computing is growing rapidly across many sectors worldwide.
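
A rough lower bound shows why physical distance matters. Signals in optical fiber travel at about two-thirds of the speed of light, roughly 200 km per millisecond, so propagation alone adds about 1 ms of round-trip time per 100 km. The sketch assumes that approximate figure and ignores routing, queuing, and processing delays, which add to the real total. The distances are illustrative.

```python
FIBER_KM_PER_MS = 200.0  # approximate signal speed in optical fiber (~2/3 of c)


def propagation_rtt_ms(distance_km: float) -> float:
    """Minimum round-trip propagation delay over fiber, in milliseconds."""
    return 2 * distance_km / FIBER_KM_PER_MS


for label, km in [("on-site edge server", 1), ("metro edge site", 50), ("distant data center", 1500)]:
    print(f"{label:>20}: {propagation_rtt_ms(km):6.2f} ms")
```

Under this assumption, a data center 1,500 km away already costs 15 ms before any processing happens, while a metro edge site 50 km away costs 0.5 ms.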

In more detail, edge computing is a distributed IT architecture that processes data close to its source using local compute, storage, networking, and security technologies. Because processing happens near where data is generated, edge computing reduces latency, improves real-time responsiveness, and lowers bandwidth costs. Classifications of edge types vary; one lists four main types: network edge, regional edge, on…
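
The bandwidth savings mentioned above can be made concrete with back-of-the-envelope arithmetic. Assume, purely for illustration, a camera streaming at 4 Mbit/s around the clock. Compare that with an edge node that analyzes the video locally and uploads only event records of about 1 KB (1,000 bytes) each, 1,000 times a day. The helper `daily_gigabytes` is hypothetical.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def daily_gigabytes(bitrate_mbit_s: float) -> float:
    """Data volume per day, in gigabytes (10**9 bytes), for a constant bitrate."""
    bits_per_day = bitrate_mbit_s * 1_000_000 * SECONDS_PER_DAY
    return bits_per_day / 8 / 1_000_000_000


raw_stream_gb = daily_gigabytes(4.0)          # every frame sent to the cloud
events_gb = 1_000 * 1_000 / 1_000_000_000     # 1,000 events of ~1,000 bytes each
print(f"raw stream: {raw_stream_gb:.1f} GB/day, events only: {events_gb:.3f} GB/day")
# raw stream: 43.2 GB/day, events only: 0.001 GB/day
```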

Edge computing appliances typically have more capacity than the other edge compute servers in such classifications and can host multiple virtual network functions and applications on the same hardware. The term "edge" is used to mean many different things across industries. Edge computing can also be described as a distributed computing framework that brings enterprise applications closer to data sources such as IoT devices or local edge servers.

This proximity to data at its source can deliver strong business benefits, including faster insights, improved response times, and better bandwidth availability. Another classification also lists four types of edge computing: device, cloud, on…