/rust/registry/src/index.crates.io-1949cf8c6b5b557f/zmij-1.0.20/src/lib.rs

Line | Count | Source |

1 | | //! [![github]](https://github.com/dtolnay/zmij) [![crates-io]](https://crates.io/crates/zmij) [![docs-rs]](https://docs.rs/zmij) |

2 | | //! |

3 | | //! [github]: https://img.shields.io/badge/github-8da0cb?style=for-the-badge&labelColor=555555&logo=github |

4 | | //! [crates-io]: https://img.shields.io/badge/crates.io-fc8d62?style=for-the-badge&labelColor=555555&logo=rust |

5 | | //! [docs-rs]: https://img.shields.io/badge/docs.rs-66c2a5?style=for-the-badge&labelColor=555555&logo=docs.rs |

6 | | //! |

7 | | //! <br> |

8 | | //! |

9 | | //! A double-to-string conversion algorithm based on [Schubfach] and [yy]. |

10 | | //! |

11 | | //! This Rust implementation is a line-by-line port of Victor Zverovich's |

12 | | //! implementation in C++, <https://github.com/vitaut/zmij>. |

13 | | //! |

14 | | //! [Schubfach]: https://fmt.dev/papers/Schubfach4.pdf |

15 | | //! [yy]: https://github.com/ibireme/c_numconv_benchmark/blob/master/vendor/yy_double/yy_double.c |

16 | | //! |

17 | | //! <br> |

18 | | //! |

19 | | //! # Example |

20 | | //! |

21 | | //! ``` |

22 | | //! fn main() { |

23 | | //! let mut buffer = zmij::Buffer::new(); |

24 | | //! let printed = buffer.format(1.234); |

25 | | //! assert_eq!(printed, "1.234"); |

26 | | //! } |

27 | | //! ``` |

28 | | //! |

29 | | //! <br> |

30 | | //! |

31 | | //! ## Performance |

32 | | //! |

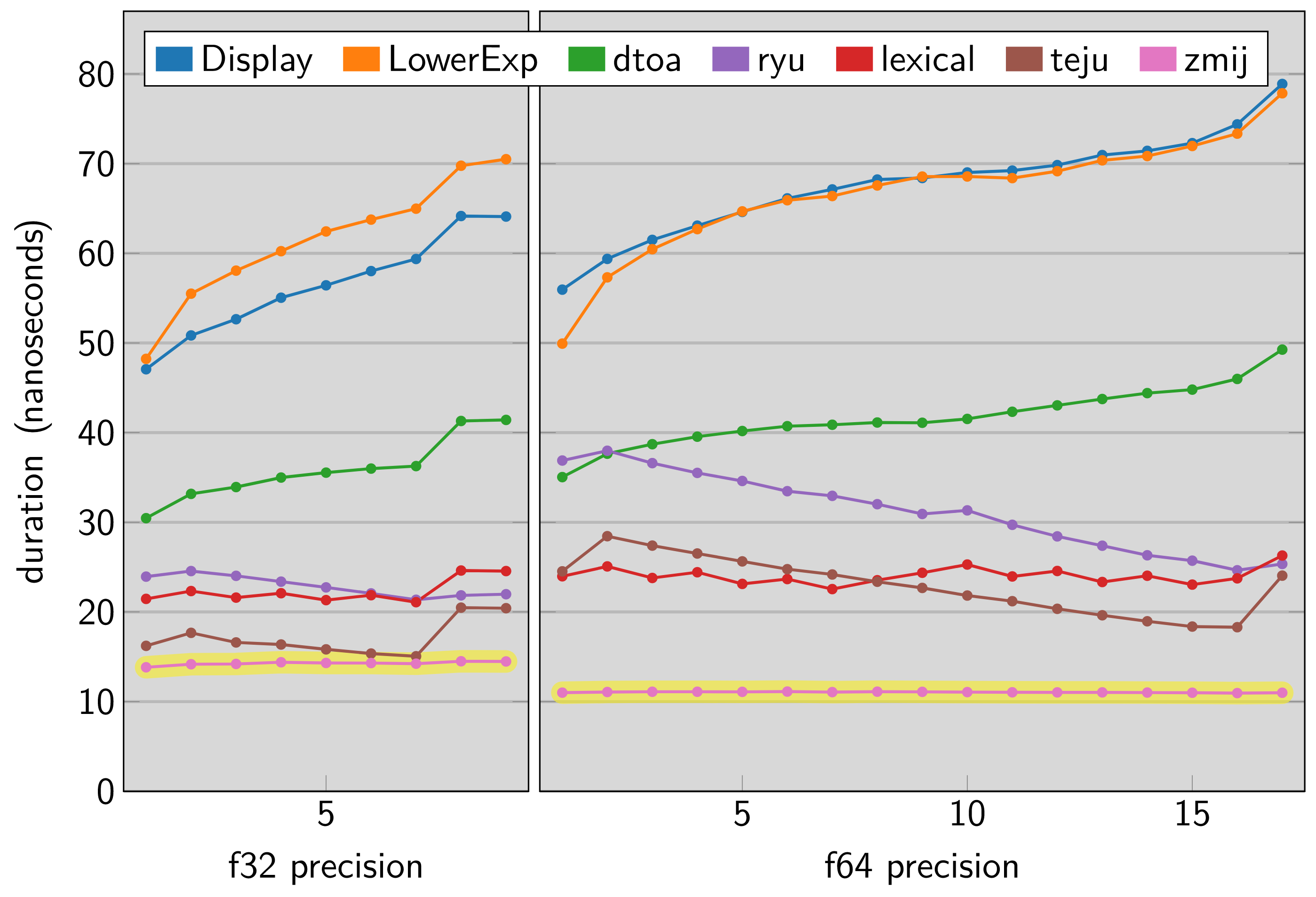

33 | | //! The [dtoa-benchmark] compares this library and other Rust floating point |

34 | | //! formatting implementations across a range of precisions. The vertical axis |

35 | | //! in this chart shows nanoseconds taken by a single execution of |

36 | | //! `zmij::Buffer::new().format_finite(value)` so a lower result indicates a |

37 | | //! faster library. |

38 | | //! |

39 | | //! [dtoa-benchmark]: https://github.com/dtolnay/dtoa-benchmark |

40 | | //! |

41 | | //!  |

42 | | |

43 | | #![no_std] |

44 | | #![doc(html_root_url = "https://docs.rs/zmij/1.0.20")] |

45 | | #![deny(unsafe_op_in_unsafe_fn)] |

46 | | #![allow(non_camel_case_types, non_snake_case)] |

47 | | #![allow( |

48 | | clippy::blocks_in_conditions, |

49 | | clippy::cast_possible_truncation, |

50 | | clippy::cast_possible_wrap, |

51 | | clippy::cast_ptr_alignment, |

52 | | clippy::cast_sign_loss, |

53 | | clippy::doc_markdown, |

54 | | clippy::incompatible_msrv, |

55 | | clippy::items_after_statements, |

56 | | clippy::many_single_char_names, |

57 | | clippy::modulo_one, |

58 | | clippy::must_use_candidate, |

59 | | clippy::needless_doctest_main, |

60 | | clippy::never_loop, |

61 | | clippy::redundant_else, |

62 | | clippy::similar_names, |

63 | | clippy::too_many_arguments, |

64 | | clippy::too_many_lines, |

65 | | clippy::unreadable_literal, |

66 | | clippy::used_underscore_items, |

67 | | clippy::while_immutable_condition, |

68 | | clippy::wildcard_imports |

69 | | )] |

70 | | |

71 | | #[cfg(zmij_no_select_unpredictable)] |

72 | | mod hint; |

73 | | #[cfg(all(target_arch = "x86_64", target_feature = "sse2", not(miri)))] |

74 | | mod stdarch_x86; |

75 | | #[cfg(test)] |

76 | | mod tests; |

77 | | mod traits; |

78 | | |

79 | | #[cfg(all(any(target_arch = "aarch64", target_arch = "x86_64"), not(miri)))] |

80 | | use core::arch::asm; |

81 | | #[cfg(not(zmij_no_select_unpredictable))] |

82 | | use core::hint; |

83 | | use core::mem::{self, MaybeUninit}; |

84 | | use core::ptr; |

85 | | use core::slice; |

86 | | use core::str; |

87 | | #[cfg(feature = "no-panic")] |

88 | | use no_panic::no_panic; |

89 | | |

90 | | const BUFFER_SIZE: usize = 24; |

91 | | const NAN: &str = "NaN"; |

92 | | const INFINITY: &str = "inf"; |

93 | | const NEG_INFINITY: &str = "-inf"; |

94 | | |

95 | | // Returns true_value if lhs < rhs, else false_value, without branching. |

96 | | #[inline] |

97 | 14.5k | fn select_if_less(lhs: u64, rhs: u64, true_value: i64, false_value: i64) -> i64 { |

98 | 14.5k | hint::select_unpredictable(lhs < rhs, true_value, false_value) |

99 | 14.5k | } |
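`select_if_less` delegates to `hint::select_unpredictable`, which asks the compiler to prefer a branch-free select such as a conditional move. As a sketch only, the same selection can be written with ordinary integer arithmetic by widening the comparison result into an all-ones or all-zeros mask. The name `select_if_less_mask` is hypothetical, and the compiler is still free to pick any instruction sequence for it.

```rust
/// Hypothetical mask-based equivalent of `select_if_less`:
/// returns `true_value` if `lhs < rhs`, else `false_value`.
fn select_if_less_mask(lhs: u64, rhs: u64, true_value: i64, false_value: i64) -> i64 {
    // `(lhs < rhs) as i64` is 1 or 0; negating it gives -1 (all bits set) or 0.
    let mask = -((lhs < rhs) as i64);
    (true_value & mask) | (false_value & !mask)
}
```

Both forms return `false_value` when `lhs == rhs`, matching the strict `<` in the original.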

100 | | |

101 | | #[derive(Copy, Clone)] |

102 | | #[cfg_attr(test, derive(Debug, PartialEq))] |

103 | | struct uint128 { |

104 | | hi: u64, |

105 | | lo: u64, |

106 | | } |

107 | | |

108 | | // Use umul128_hi64 for division. |

109 | | const USE_UMUL128_HI64: bool = cfg!(target_vendor = "apple"); |

110 | | |

111 | | // Computes 128-bit result of multiplication of two 64-bit unsigned integers. |

112 | 14.5k | const fn umul128(x: u64, y: u64) -> u128 { |

113 | 14.5k | x as u128 * y as u128 |

114 | 14.5k | } |

115 | | |

116 | 7.28k | const fn umul128_hi64(x: u64, y: u64) -> u64 { |

117 | 7.28k | (umul128(x, y) >> 64) as u64 |

118 | 7.28k | } |
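`USE_UMUL128_HI64` refers to using `umul128_hi64` for division: dividing by a constant becomes a multiplication by a scaled reciprocal followed by a shift. The sketch below applies that idea to `value / 100_000_000`, the split performed in `write_significand`. The constant `RECIP_1E8`, computed as ceil(2^90 / 10^8), and the helper `div_1e8` are assumptions for illustration; the 90-bit total shift matches the `>> 90` in the NEON path, but the constant is computed here rather than copied from the crate.

```rust
/// High 64 bits of the 128-bit product, as in zmij's `umul128_hi64`.
const fn umul128_hi64(x: u64, y: u64) -> u64 {
    ((x as u128 * y as u128) >> 64) as u64
}

/// ceil(2^90 / 10^8); fits in u64 (about 1.24e19). Assumed constant for this sketch.
const RECIP_1E8: u64 = ((1u128 << 90) / 100_000_000 + 1) as u64;

/// Computes `value / 100_000_000` with a multiply-high and a shift.
/// Valid for `value < 10^17`: rounding the reciprocal up adds less than
/// value / 2^90 to the quotient, which stays below 1 / 10^8.
fn div_1e8(value: u64) -> u64 {
    debug_assert!(value < 100_000_000_000_000_000);
    // (value * RECIP_1E8) >> 90 == umul128_hi64(value, RECIP_1E8) >> 26
    umul128_hi64(value, RECIP_1E8) >> 26
}
```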

119 | | |

120 | | #[cfg_attr(feature = "no-panic", no_panic)] |

121 | 0 | fn umul192_hi128(x_hi: u64, x_lo: u64, y: u64) -> uint128 { |

122 | 0 | let p = umul128(x_hi, y); |

123 | 0 | let lo = (p as u64).wrapping_add((umul128(x_lo, y) >> 64) as u64); |

124 | 0 | uint128 { |

125 | 0 | hi: (p >> 64) as u64 + u64::from(lo < p as u64), |

126 | 0 | lo, |

127 | 0 | } |

128 | 0 | } |
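`umul192_hi128` has a count of 0 in this run, so one way to gain confidence in it is to compare it with plain `u128` arithmetic. The sketch copies the function with a local `Uint128` struct (a stand-in for the crate's private `uint128`) and checks it against the identity high128((x_hi * 2^64 + x_lo) * y) = x_hi * y + floor(x_lo * y / 2^64); the reference name and the test values are arbitrary.

```rust
/// Stand-in for zmij's private `uint128` struct.
#[derive(Debug, Clone, Copy)]
struct Uint128 {
    hi: u64,
    lo: u64,
}

/// Same body as `umul192_hi128`: high 128 bits of (x_hi * 2^64 + x_lo) * y.
fn umul192_hi128(x_hi: u64, x_lo: u64, y: u64) -> Uint128 {
    let p = x_hi as u128 * y as u128;
    let lo = (p as u64).wrapping_add(((x_lo as u128 * y as u128) >> 64) as u64);
    Uint128 {
        hi: (p >> 64) as u64 + u64::from(lo < p as u64), // propagate the carry
        lo,
    }
}

/// Reference: x_hi * y + floor(x_lo * y / 2^64). The sum is at most
/// 2^128 - 2^64 - 1, so it cannot overflow u128.
fn umul192_hi128_reference(x_hi: u64, x_lo: u64, y: u64) -> u128 {
    x_hi as u128 * y as u128 + ((x_lo as u128 * y as u128) >> 64)
}
```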

129 | | |

130 | | // Computes high 64 bits of multiplication of x and y, discards the least |

131 | | // significant bit and rounds to odd, where x = uint128_t(x_hi << 64) | x_lo. |

132 | | #[cfg_attr(feature = "no-panic", no_panic)] |

133 | 12 | fn umulhi_inexact_to_odd<UInt>(x_hi: u64, x_lo: u64, y: UInt) -> UInt |

134 | 12 | where |

135 | 12 | UInt: traits::UInt, |

136 | | { |

137 | 12 | let num_bits = mem::size_of::<UInt>() * 8; |

138 | 12 | if num_bits == 64 { |

139 | 0 | let p = umul192_hi128(x_hi, x_lo, y.into()); |

140 | 0 | UInt::truncate(p.hi | u64::from((p.lo >> 1) != 0)) |

141 | | } else { |

142 | 12 | let p = (umul128(x_hi, y.into()) >> 32) as u64; |

143 | 12 | UInt::enlarge((p >> 32) as u32 | u32::from((p as u32 >> 1) != 0)) |

144 | | } |

145 | 12 | } |

Instantiation zmij::umulhi_inexact_to_odd::<u32>: same counts as the listing above.

Unexecuted instantiation: zmij::umulhi_inexact_to_odd::<u64>
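For 32-bit significands, `umulhi_inexact_to_odd` multiplies only by `x_hi`. The standalone sketch below reproduces that branch under the hypothetical name `umulhi_inexact_to_odd_u32` so the bit selection can be tested: it keeps bits 64..=95 of the 96-bit product, ignores bit 32, and sets the lowest result bit whenever any of bits 33..=63 is nonzero, which is the sticky-bit "round to odd" behavior the comment describes.

```rust
/// Mirror of the 32-bit branch of `umulhi_inexact_to_odd` (hypothetical name).
fn umulhi_inexact_to_odd_u32(x_hi: u64, y: u32) -> u32 {
    // Bits 32..=95 of the 96-bit product x_hi * y.
    let p = ((x_hi as u128 * y as u128) >> 32) as u64;
    // High 32 bits of the product, with the lowest bit forced to 1 if any
    // of product bits 33..=63 is set; product bit 32 is discarded.
    (p >> 32) as u32 | u32::from((p as u32 >> 1) != 0)
}
```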

146 | | |

147 | | trait FloatTraits: traits::Float { |

148 | | const NUM_BITS: i32; |

149 | | const NUM_SIG_BITS: i32 = Self::MANTISSA_DIGITS as i32 - 1; |

150 | | const NUM_EXP_BITS: i32 = Self::NUM_BITS - Self::NUM_SIG_BITS - 1; |

151 | | const EXP_MASK: i32 = (1 << Self::NUM_EXP_BITS) - 1; |

152 | | const EXP_BIAS: i32 = (1 << (Self::NUM_EXP_BITS - 1)) - 1; |

153 | | const EXP_OFFSET: i32 = Self::EXP_BIAS + Self::NUM_SIG_BITS; |

154 | | |

155 | | type SigType: traits::UInt; |

156 | | const IMPLICIT_BIT: Self::SigType; |

157 | | |

158 | | fn to_bits(self) -> Self::SigType; |

159 | | |

160 | 22.0k | fn is_negative(bits: Self::SigType) -> bool { |

161 | 22.0k | (bits >> (Self::NUM_BITS - 1)) != Self::SigType::from(0) |

162 | 22.0k | } |

Instantiation <f64 as zmij::FloatTraits>::is_negative: lines 160-162 executed 5.08k times.

Instantiation <f32 as zmij::FloatTraits>::is_negative: lines 160-162 executed 16.9k times.

163 | | |

164 | 22.0k | fn get_sig(bits: Self::SigType) -> Self::SigType { |

165 | 22.0k | bits & (Self::IMPLICIT_BIT - Self::SigType::from(1)) |

166 | 22.0k | } |

Instantiation <f64 as zmij::FloatTraits>::get_sig: lines 164-166 executed 5.08k times.

Instantiation <f32 as zmij::FloatTraits>::get_sig: lines 164-166 executed 16.9k times.

167 | | |

168 | 22.0k | fn get_exp(bits: Self::SigType) -> i64 { |

169 | 22.0k | (bits << 1u8 >> (Self::NUM_SIG_BITS + 1)).into() as i64 |

170 | 22.0k | } |

Instantiation <f64 as zmij::FloatTraits>::get_exp: lines 168-170 executed 5.08k times.

Instantiation <f32 as zmij::FloatTraits>::get_exp: lines 168-170 executed 16.9k times.

171 | | } |

172 | | |

173 | | impl FloatTraits for f32 { |

174 | | const NUM_BITS: i32 = 32; |

175 | | const IMPLICIT_BIT: u32 = 1 << Self::NUM_SIG_BITS; |

176 | | |

177 | | type SigType = u32; |

178 | | |

179 | 16.9k | fn to_bits(self) -> Self::SigType { |

180 | 16.9k | self.to_bits() |

181 | 16.9k | } |

182 | | } |

183 | | |

184 | | impl FloatTraits for f64 { |

185 | | const NUM_BITS: i32 = 64; |

186 | | const IMPLICIT_BIT: u64 = 1 << Self::NUM_SIG_BITS; |

187 | | |

188 | | type SigType = u64; |

189 | | |

190 | 5.08k | fn to_bits(self) -> Self::SigType { |

191 | 5.08k | self.to_bits() |

192 | 5.08k | } |

193 | | } |
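The associated constants of `FloatTraits` are all derived from `NUM_BITS` and `MANTISSA_DIGITS`. The sketch below evaluates the same formulas in a standalone `const fn` (the name `layout` is hypothetical) and splits an `f64` the way `is_negative`, `get_exp` and `get_sig` do, with the f64 shift amounts written out as literals.

```rust
/// Evaluates the `FloatTraits` formulas for a format with `num_bits` total bits
/// and `mantissa_digits` significand digits (including the implicit bit).
/// Returns (NUM_SIG_BITS, NUM_EXP_BITS, EXP_MASK, EXP_BIAS, EXP_OFFSET).
const fn layout(num_bits: i32, mantissa_digits: u32) -> (i32, i32, i32, i32, i32) {
    let num_sig_bits = mantissa_digits as i32 - 1;
    let num_exp_bits = num_bits - num_sig_bits - 1;
    let exp_mask = (1 << num_exp_bits) - 1;
    let exp_bias = (1 << (num_exp_bits - 1)) - 1;
    let exp_offset = exp_bias + num_sig_bits;
    (num_sig_bits, num_exp_bits, exp_mask, exp_bias, exp_offset)
}

/// Splits an f64 into (sign, biased exponent, stored significand bits),
/// mirroring `is_negative`, `get_exp` and `get_sig` with NUM_SIG_BITS = 52.
fn decompose_f64(x: f64) -> (bool, i64, u64) {
    let bits = x.to_bits();
    let implicit_bit = 1u64 << 52;
    let negative = (bits >> 63) != 0; // sign bit
    let exp = (bits << 1 >> 53) as i64; // drop the sign, keep the 11 exponent bits
    let sig = bits & (implicit_bit - 1); // low 52 bits
    (negative, exp, sig)
}
```

For f64 this gives `EXP_OFFSET = 1075`, so a normal value equals `(2^52 + sig) * 2^(exp - 1075)`, which is the `bin_exp = raw_exp - EXP_OFFSET` relation used when building `EXP_SHIFTS`.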

194 | | |

195 | | #[repr(C, align(64))] |

196 | | struct Pow10SignificandsTable { |

197 | | data: [u64; if Self::COMPRESS { |

198 | | 0 |

199 | | } else { |

200 | | Self::NUM_POW10 * 2 |

201 | | }], |

202 | | } |

203 | | |

204 | | impl Pow10SignificandsTable { |

205 | | const COMPRESS: bool = false; |

206 | | const SPLIT_TABLES: bool = !Self::COMPRESS && cfg!(target_arch = "aarch64"); |

207 | | const NUM_POW10: usize = 617; |

208 | | |

209 | 7.29k | unsafe fn get_unchecked(&self, dec_exp: i32) -> uint128 { |

210 | | const DEC_EXP_MIN: i32 = -292; |

211 | 7.29k | if Self::COMPRESS { |

212 | 0 | let i = dec_exp - DEC_EXP_MIN; |

213 | | // 672 bytes of data |

214 | | #[rustfmt::skip] |

215 | | static POW10S: [u64; 28] = [ |

216 | | 0x8000000000000000, 0xa000000000000000, 0xc800000000000000, |

217 | | 0xfa00000000000000, 0x9c40000000000000, 0xc350000000000000, |

218 | | 0xf424000000000000, 0x9896800000000000, 0xbebc200000000000, |

219 | | 0xee6b280000000000, 0x9502f90000000000, 0xba43b74000000000, |

220 | | 0xe8d4a51000000000, 0x9184e72a00000000, 0xb5e620f480000000, |

221 | | 0xe35fa931a0000000, 0x8e1bc9bf04000000, 0xb1a2bc2ec5000000, |

222 | | 0xde0b6b3a76400000, 0x8ac7230489e80000, 0xad78ebc5ac620000, |

223 | | 0xd8d726b7177a8000, 0x878678326eac9000, 0xa968163f0a57b400, |

224 | | 0xd3c21bcecceda100, 0x84595161401484a0, 0xa56fa5b99019a5c8, |

225 | | 0xcecb8f27f4200f3a, |

226 | | ]; |

227 | | |

228 | | #[rustfmt::skip] |

229 | | static HIGH_PARTS: [uint128; 23] = [ |

230 | | uint128 { hi: 0xaf8e5410288e1b6f, lo: 0x07ecf0ae5ee44dda }, |

231 | | uint128 { hi: 0xb1442798f49ffb4a, lo: 0x99cd11cfdf41779d }, |

232 | | uint128 { hi: 0xb2fe3f0b8599ef07, lo: 0x861fa7e6dcb4aa15 }, |

233 | | uint128 { hi: 0xb4bca50b065abe63, lo: 0x0fed077a756b53aa }, |

234 | | uint128 { hi: 0xb67f6455292cbf08, lo: 0x1a3bc84c17b1d543 }, |

235 | | uint128 { hi: 0xb84687c269ef3bfb, lo: 0x3d5d514f40eea742 }, |

236 | | uint128 { hi: 0xba121a4650e4ddeb, lo: 0x92f34d62616ce413 }, |

237 | | uint128 { hi: 0xbbe226efb628afea, lo: 0x890489f70a55368c }, |

238 | | uint128 { hi: 0xbdb6b8e905cb600f, lo: 0x5400e987bbc1c921 }, |

239 | | uint128 { hi: 0xbf8fdb78849a5f96, lo: 0xde98520472bdd034 }, |

240 | | uint128 { hi: 0xc16d9a0095928a27, lo: 0x75b7053c0f178294 }, |

241 | | uint128 { hi: 0xc350000000000000, lo: 0x0000000000000000 }, |

242 | | uint128 { hi: 0xc5371912364ce305, lo: 0x6c28000000000000 }, |

243 | | uint128 { hi: 0xc722f0ef9d80aad6, lo: 0x424d3ad2b7b97ef6 }, |

244 | | uint128 { hi: 0xc913936dd571c84c, lo: 0x03bc3a19cd1e38ea }, |

245 | | uint128 { hi: 0xcb090c8001ab551c, lo: 0x5cadf5bfd3072cc6 }, |

246 | | uint128 { hi: 0xcd036837130890a1, lo: 0x36dba887c37a8c10 }, |

247 | | uint128 { hi: 0xcf02b2c21207ef2e, lo: 0x94f967e45e03f4bc }, |

248 | | uint128 { hi: 0xd106f86e69d785c7, lo: 0xe13336d701beba52 }, |

249 | | uint128 { hi: 0xd31045a8341ca07c, lo: 0x1ede48111209a051 }, |

250 | | uint128 { hi: 0xd51ea6fa85785631, lo: 0x552a74227f3ea566 }, |

251 | | uint128 { hi: 0xd732290fbacaf133, lo: 0xa97c177947ad4096 }, |

252 | | uint128 { hi: 0xd94ad8b1c7380874, lo: 0x18375281ae7822bc }, |

253 | | ]; |

254 | | |

255 | | #[rustfmt::skip] |

256 | | static FIXUPS: [u32; 20] = [ |

257 | | 0x05271b1f, 0x00000c20, 0x00003200, 0x12100020, |

258 | | 0x00000000, 0x06000000, 0xc16409c0, 0xaf26700f, |

259 | | 0xeb987b07, 0x0000000d, 0x00000000, 0x66fbfffe, |

260 | | 0xb74100ec, 0xa0669fe8, 0xedb21280, 0x00000686, |

261 | | 0x0a021200, 0x29b89c20, 0x08bc0eda, 0x00000000, |

262 | | ]; |

263 | | |

264 | 0 | let m = unsafe { *POW10S.get_unchecked(((i + 11) % 28) as usize) }; |

265 | 0 | let h = unsafe { *HIGH_PARTS.get_unchecked(((i + 11) / 28) as usize) }; |

266 | | |

267 | 0 | let h1 = umul128_hi64(h.lo, m); |

268 | | |

269 | 0 | let c0 = h.lo.wrapping_mul(m); |

270 | 0 | let c1 = h1.wrapping_add(h.hi.wrapping_mul(m)); |

271 | 0 | let c2 = u64::from(c1 < h1) + umul128_hi64(h.hi, m); |

272 | | |

273 | 0 | let mut result = if (c2 >> 63) != 0 { |

274 | 0 | uint128 { hi: c2, lo: c1 } |

275 | | } else { |

276 | 0 | uint128 { |

277 | 0 | hi: (c2 << 1) | (c1 >> 63), |

278 | 0 | lo: (c1 << 1) | (c0 >> 63), |

279 | 0 | } |

280 | | }; |

281 | | |

282 | 0 | result.lo -= |

283 | 0 | u64::from((unsafe { *FIXUPS.get_unchecked((i >> 5) as usize) } >> (i & 31)) & 1); |

284 | 0 | return result; |

285 | 7.29k | } |

286 | 7.29k | if !Self::SPLIT_TABLES { |

287 | 7.29k | let index = ((dec_exp - DEC_EXP_MIN) * 2) as usize; |

288 | 7.29k | return uint128 { |

289 | 7.29k | hi: unsafe { *self.data.get_unchecked(index) }, |

290 | 7.29k | lo: unsafe { *self.data.get_unchecked(index + 1) }, |

291 | 7.29k | }; |

292 | 0 | } |

293 | | |

294 | | unsafe { |

295 | | #[cfg_attr( |

296 | | not(all(any(target_arch = "x86_64", target_arch = "aarch64"), not(miri))), |

297 | | allow(unused_mut) |

298 | | )] |

299 | 0 | let mut hi = self |

300 | 0 | .data |

301 | 0 | .as_ptr() |

302 | 0 | .offset(Self::NUM_POW10 as isize + DEC_EXP_MIN as isize - 1); |

303 | | #[cfg_attr( |

304 | | not(all(any(target_arch = "x86_64", target_arch = "aarch64"), not(miri))), |

305 | | allow(unused_mut) |

306 | | )] |

307 | 0 | let mut lo = hi.add(Self::NUM_POW10); |

308 | | |

309 | | // Force indexed loads. |

310 | | #[cfg(all(any(target_arch = "x86_64", target_arch = "aarch64"), not(miri)))] |

311 | 0 | asm!("/*{0}{1}*/", inout(reg) hi, inout(reg) lo); |

312 | 0 | uint128 { |

313 | 0 | hi: *hi.offset(-dec_exp as isize), |

314 | 0 | lo: *lo.offset(-dec_exp as isize), |

315 | 0 | } |

316 | | } |

317 | 7.29k | } |

318 | | |

319 | | #[cfg(test)] |

320 | | fn get(&self, dec_exp: i32) -> uint128 { |

321 | | const DEC_EXP_MIN: i32 = -292; |

322 | | assert!((DEC_EXP_MIN..DEC_EXP_MIN + Self::NUM_POW10 as i32).contains(&dec_exp)); |

323 | | unsafe { self.get_unchecked(dec_exp) } |

324 | | } |

325 | | } |

326 | | |

327 | | // 128-bit significands of powers of 10 rounded down. |

328 | | // Generation with 192-bit arithmetic and compression by Dougall Johnson. |

329 | | static POW10_SIGNIFICANDS: Pow10SignificandsTable = { |

330 | | let mut data = [0; if Pow10SignificandsTable::COMPRESS { |

331 | | 0 |

332 | | } else { |

333 | | Pow10SignificandsTable::NUM_POW10 * 2 |

334 | | }]; |

335 | | |

336 | | struct uint192 { |

337 | | w0: u64, // least significant |

338 | | w1: u64, |

339 | | w2: u64, // most significant |

340 | | } |

341 | | |

342 | | // First element, rounded up to cancel out rounding down in the |

343 | | // multiplication, and minimize significant bits. |

344 | | let mut current = uint192 { |

345 | | w0: 0xe000000000000000, |

346 | | w1: 0x25e8e89c13bb0f7a, |

347 | | w2: 0xff77b1fcbebcdc4f, |

348 | | }; |

349 | | let ten = 0xa000000000000000; |

350 | | let mut i = 0; |

351 | | while i < Pow10SignificandsTable::NUM_POW10 && !Pow10SignificandsTable::COMPRESS { |

352 | | if Pow10SignificandsTable::SPLIT_TABLES { |

353 | | data[Pow10SignificandsTable::NUM_POW10 - i - 1] = current.w2; |

354 | | data[Pow10SignificandsTable::NUM_POW10 * 2 - i - 1] = current.w1; |

355 | | } else { |

356 | | data[i * 2] = current.w2; |

357 | | data[i * 2 + 1] = current.w1; |

358 | | } |

359 | | |

360 | | let h0: u64 = umul128_hi64(current.w0, ten); |

361 | | let h1: u64 = umul128_hi64(current.w1, ten); |

362 | | |

363 | | let c0: u64 = h0.wrapping_add(current.w1.wrapping_mul(ten)); |

364 | | let c1: u64 = ((c0 < h0) as u64 + h1).wrapping_add(current.w2.wrapping_mul(ten)); |

365 | | let c2: u64 = (c1 < h1) as u64 + umul128_hi64(current.w2, ten); // dodgy carry |

366 | | |

367 | | // normalise |

368 | | if (c2 >> 63) != 0 { |

369 | | current = uint192 { |

370 | | w0: c0, |

371 | | w1: c1, |

372 | | w2: c2, |

373 | | }; |

374 | | } else { |

375 | | current = uint192 { |

376 | | w0: c0 << 1, |

377 | | w1: c1 << 1 | c0 >> 63, |

378 | | w2: c2 << 1 | c1 >> 63, |

379 | | }; |

380 | | } |

381 | | |

382 | | i += 1; |

383 | | } |

384 | | |

385 | | Pow10SignificandsTable { data } |

386 | | }; |
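`POW10_SIGNIFICANDS` is built at compile time by repeatedly multiplying a 192-bit significand by `ten = 0xa000000000000000` (10/16 scaled by 2^64), keeping the high part of the product, and renormalizing by at most one bit. The scaled-down sketch below applies the same step to a 128-bit significand and starts from 10^0 instead of the rounded-up 10^-292 the crate uses. Because every power of ten up to 10^38 fits in `u128` and the recurrence drops only zero bits in that range, the results can be checked exactly; `mul_hi128` and `pow10_significands` are hypothetical names.

```rust
/// High 128 bits of the 192-bit product `a * b`.
fn mul_hi128(a: u128, b: u64) -> u128 {
    let hi = (a >> 64) * b as u128; // weight 2^64: kept whole
    let lo = (a as u64 as u128) * b as u128; // weight 2^0: only its top 64 bits survive
    hi + (lo >> 64)
}

/// Normalized (top bit set) significands of 10^0, 10^1, ..., 10^(n-1),
/// produced with the multiply-by-ten-and-renormalize step of
/// `POW10_SIGNIFICANDS`, reduced from 192 to 128 bits.
fn pow10_significands(n: usize) -> Vec<u128> {
    // Multiplying by 10/16 * 2^64 and keeping the high half scales by 10/16.
    const TEN: u64 = 0xa000_0000_0000_0000;
    let mut current: u128 = 1 << 127; // 10^0, normalized
    let mut out = Vec::with_capacity(n);
    for _ in 0..n {
        out.push(current);
        let c = mul_hi128(current, TEN);
        // current * 10/16 is at least 2^126 and below 2^128: shift at most once.
        current = if c >> 127 != 0 { c } else { c << 1 };
    }
    out
}
```

The high halves of these values reproduce the `POW10S` entries of the compressed table, e.g. `0xa000000000000000` for 10^1 and `0xcecb8f27f4200f3a` for 10^27.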

387 | | |

388 | | // Computes the decimal exponent as floor(log10(2**bin_exp)) if regular or |

389 | | // floor(log10(3/4 * 2**bin_exp)) otherwise, without branching. |

390 | 7.29k | const fn compute_dec_exp(bin_exp: i32, regular: bool) -> i32 { |

391 | 7.29k | debug_assert!(bin_exp >= -1334 && bin_exp <= 2620); |

392 | | // log10_3_over_4_sig = -log10(3/4) * 2**log10_2_exp rounded to a power of 2 |

393 | | const LOG10_3_OVER_4_SIG: i32 = 131_072; |

394 | | // log10_2_sig = round(log10(2) * 2**log10_2_exp) |

395 | | const LOG10_2_SIG: i32 = 315_653; |

396 | | const LOG10_2_EXP: i32 = 20; |

397 | 7.29k | (bin_exp * LOG10_2_SIG - !regular as i32 * LOG10_3_OVER_4_SIG) >> LOG10_2_EXP |

398 | 7.29k | } |
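`compute_dec_exp` replaces floor(log10(2^bin_exp)) with a fixed-point multiply and an arithmetic right shift. The sketch below copies the regular case (`regular == true`) into a standalone `dec_exp_fixed` (hypothetical name) and compares it with a floating-point reference over the range accepted by the `debug_assert!`. The irregular 3/4 adjustment is not checked here, and the reference uses `std`, unlike the `no_std` crate.

```rust
/// Regular case of `compute_dec_exp`: floor(log10(2^bin_exp)) using
/// log10(2) approximated as 315_653 / 2^20.
const fn dec_exp_fixed(bin_exp: i32) -> i32 {
    const LOG10_2_SIG: i32 = 315_653; // round(log10(2) * 2^20)
    const LOG10_2_EXP: i32 = 20;
    // `>>` on a negative i32 is an arithmetic shift, so it rounds toward
    // negative infinity, as floor requires.
    (bin_exp * LOG10_2_SIG) >> LOG10_2_EXP
}

/// Floating-point reference, used only for checking.
fn dec_exp_reference(bin_exp: i32) -> i32 {
    (bin_exp as f64 * std::f64::consts::LOG10_2).floor() as i32
}
```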

399 | | |

400 | | #[inline] |

401 | 7.29k | const fn do_compute_exp_shift(bin_exp: i32, dec_exp: i32) -> u8 { |

402 | 7.29k | debug_assert!(dec_exp >= -350 && dec_exp <= 350); |

403 | | // log2_pow10_sig = round(log2(10) * 2**log2_pow10_exp) + 1 |

404 | | const LOG2_POW10_SIG: i32 = 217_707; |

405 | | const LOG2_POW10_EXP: i32 = 16; |

406 | | // pow10_bin_exp = floor(log2(10**-dec_exp)) |

407 | 7.29k | let pow10_bin_exp = (-dec_exp * LOG2_POW10_SIG) >> LOG2_POW10_EXP; |

408 | | // pow10 = ((pow10_hi << 64) | pow10_lo) * 2**(pow10_bin_exp - 127) |

409 | 7.29k | (bin_exp + pow10_bin_exp + 1) as u8 |

410 | 7.29k | } |
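`do_compute_exp_shift` first computes pow10_bin_exp = floor(log2(10^-dec_exp)) with a 16-bit fixed-point approximation of log2(10), rounded and then incremented by one. The sketch below isolates that step as the hypothetical `pow10_bin_exp_fixed` and checks it against floating-point `log2(10)` for every `dec_exp` allowed by the `debug_assert!`.

```rust
/// First step of `do_compute_exp_shift`: floor(log2(10^-dec_exp)) using
/// log2(10) approximated as 217_707 / 2^16.
const fn pow10_bin_exp_fixed(dec_exp: i32) -> i32 {
    const LOG2_POW10_SIG: i32 = 217_707; // round(log2(10) * 2^16) + 1
    const LOG2_POW10_EXP: i32 = 16;
    (-dec_exp * LOG2_POW10_SIG) >> LOG2_POW10_EXP // arithmetic shift = floor
}

/// Floating-point reference, used only for checking.
fn pow10_bin_exp_reference(dec_exp: i32) -> i32 {
    ((-dec_exp) as f64 * std::f64::consts::LOG2_10).floor() as i32
}
```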

411 | | |

412 | | struct ExpShiftTable { |

413 | | data: [u8; if Self::ENABLE { |

414 | | f64::EXP_MASK as usize + 1 |

415 | | } else { |

416 | | 1 |

417 | | }], |

418 | | } |

419 | | |

420 | | impl ExpShiftTable { |

421 | | const ENABLE: bool = true; |

422 | | } |

423 | | |

424 | | static EXP_SHIFTS: ExpShiftTable = { |

425 | | let mut data = [0u8; if ExpShiftTable::ENABLE { |

426 | | f64::EXP_MASK as usize + 1 |

427 | | } else { |

428 | | 1 |

429 | | }]; |

430 | | |

431 | | let mut raw_exp = 0; |

432 | | while raw_exp < data.len() && ExpShiftTable::ENABLE { |

433 | | let mut bin_exp = raw_exp as i32 - f64::EXP_OFFSET; |

434 | | if raw_exp == 0 { |

435 | | bin_exp += 1; |

436 | | } |

437 | | let dec_exp = compute_dec_exp(bin_exp, true); |

438 | | data[raw_exp] = do_compute_exp_shift(bin_exp, dec_exp) as u8; |

439 | | raw_exp += 1; |

440 | | } |

441 | | |

442 | | ExpShiftTable { data } |

443 | | }; |

444 | | |

445 | | // Computes a shift so that, after scaling by a power of 10, the intermediate |

446 | | // result always has a fixed 128-bit fractional part (for double). |

447 | | // |

448 | | // Different binary exponents can map to the same decimal exponent, but place |

449 | | // the decimal point at different bit positions. The shift compensates for this. |

450 | | // |

451 | | // For example, both 3 * 2**59 and 3 * 2**60 have dec_exp = 2, but dividing by |

452 | | // 10^dec_exp puts the decimal point in different bit positions: |

453 | | // 3 * 2**59 / 100 = 1.72...e+16 (needs shift = 1 + 1) |

454 | | // 3 * 2**60 / 100 = 3.45...e+16 (needs shift = 2 + 1) |

455 | | #[inline] |

456 | 7.29k | unsafe fn compute_exp_shift<UInt, const ONLY_REGULAR: bool>(bin_exp: i32, dec_exp: i32) -> u8 |

457 | 7.29k | where |

458 | 7.29k | UInt: traits::UInt, |

459 | | { |

460 | 7.29k | let num_bits = mem::size_of::<UInt>() * 8; |

461 | 7.29k | if num_bits == 64 && ExpShiftTable::ENABLE && ONLY_REGULAR { |

462 | | unsafe { |

463 | 0 | *EXP_SHIFTS |

464 | 0 | .data |

465 | 0 | .as_ptr() |

466 | 0 | .add((bin_exp + f64::EXP_OFFSET) as usize) |

467 | | } |

468 | | } else { |

469 | 7.29k | do_compute_exp_shift(bin_exp, dec_exp) |

470 | | } |

471 | 7.29k | } |

Instantiation zmij::compute_exp_shift::<u32, false>: lines 456-458, 460-461, 469 and 471 executed 6 times; lines 463-466 not executed.

Instantiation zmij::compute_exp_shift::<u32, true>: lines 456-458, 460-461, 469 and 471 executed 7.28k times; lines 463-466 not executed.

Unexecuted instantiations: zmij::compute_exp_shift::<u64, false>, zmij::compute_exp_shift::<u64, true>

472 | | |

473 | | #[cfg_attr(feature = "no-panic", no_panic)] |

474 | 7.28k | fn count_trailing_nonzeros(x: u64) -> usize { |

475 | | // We count the number of bytes until there are only zeros left. |

476 | | // The code is equivalent to |

477 | | // 8 - x.leading_zeros() / 8 |

478 | | // but if the BSR instruction is emitted (as gcc on x64 does with default |

479 | | // settings), subtracting the constant before dividing allows the compiler |

480 | | // to combine it with the subtraction which it inserts due to BSR counting |

481 | | // in the opposite direction. |

482 | | // |

483 | | // Additionally, the BSR instruction requires a zero check. Since the high |

484 | | // bit is unused we can avoid the zero check by shifting the datum left by |

485 | | // one and inserting a sentinel bit at the end. This can be faster than the |

486 | | // automatically inserted range check. |

487 | 7.28k | (70 - ((x.to_le() << 1) | 1).leading_zeros() as usize) / 8 |

488 | 7.28k | } |
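In `write_significand`, the BCD word holds the first digit in its lowest-addressed byte, so counting bytes up to the highest nonzero one gives the digit count once trailing zeros are trimmed. The sketch compares the sentinel-bit form with the straightforward `8 - x.leading_zeros() / 8` quoted in the comment. It drops the `to_le()` call, which assumes a little-endian target where that call is a no-op, and like the original it requires the top bit of `x` to be clear.

```rust
/// Sentinel-bit form used by zmij (without `to_le()`; assumes little-endian).
fn count_trailing_nonzeros(x: u64) -> usize {
    // Shifting left by one and setting bit 0 makes the operand nonzero,
    // so no separate zero check is needed for `leading_zeros`.
    (70 - ((x << 1) | 1).leading_zeros() as usize) / 8
}

/// Straightforward form from the comment.
fn count_trailing_nonzeros_naive(x: u64) -> usize {
    8 - x.leading_zeros() as usize / 8
}
```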

489 | | |

490 | | // Align data since unaligned access may be slower when crossing a |

491 | | // hardware-specific boundary. |

492 | | #[repr(C, align(2))] |

493 | | struct Digits2([u8; 200]); |

494 | | |

495 | | static DIGITS2: Digits2 = Digits2( |

496 | | *b"0001020304050607080910111213141516171819\ |

497 | | 2021222324252627282930313233343536373839\ |

498 | | 4041424344454647484950515253545556575859\ |

499 | | 6061626364656667686970717273747576777879\ |

500 | | 8081828384858687888990919293949596979899", |

501 | | ); |

502 | | |

503 | | // Converts value in the range [0, 100) to a string. GCC generates slightly better |

504 | | // code when value is pointer-sized (https://www.godbolt.org/z/5fEPMT1cc). |

505 | | #[cfg_attr(feature = "no-panic", no_panic)] |

506 | 0 | unsafe fn digits2(value: usize) -> &'static u16 { |

507 | 0 | debug_assert!(value < 100); |

508 | | |

509 | | #[allow(clippy::cast_ptr_alignment)] |

510 | | unsafe { |

511 | 0 | &*DIGITS2.0.as_ptr().cast::<u16>().add(value) |

512 | | } |

513 | 0 | } |
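`digits2` returns a `&'static u16` by casting the `DIGITS2` byte table, which is why `Digits2` is declared with `align(2)`. A safe sketch of the same lookup indexes the byte array instead; `digits2_bytes` and `digits2_u16` are hypothetical names, and `u16::from_ne_bytes` reproduces the native-endian value the pointer cast would load.

```rust
static DIGITS2: [u8; 200] = *b"0001020304050607080910111213141516171819\
    2021222324252627282930313233343536373839\
    4041424344454647484950515253545556575859\
    6061626364656667686970717273747576777879\
    8081828384858687888990919293949596979899";

/// The two ASCII digits of `value`, which must be below 100.
fn digits2_bytes(value: usize) -> [u8; 2] {
    assert!(value < 100);
    [DIGITS2[value * 2], DIGITS2[value * 2 + 1]]
}

/// Same pair as a native-endian u16, matching what `digits2` dereferences to.
fn digits2_u16(value: usize) -> u16 {
    u16::from_ne_bytes(digits2_bytes(value))
}
```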

514 | | |

515 | | const DIV10K_EXP: i32 = 40; |

516 | | const DIV10K_SIG: u32 = ((1u64 << DIV10K_EXP) / 10000 + 1) as u32; |

517 | | const NEG10K: u32 = ((1u64 << 32) - 10000) as u32; |

518 | | |

519 | | const DIV100_EXP: i32 = 19; |

520 | | const DIV100_SIG: u32 = (1 << DIV100_EXP) / 100 + 1; |

521 | | const NEG100: u32 = (1 << 16) - 100; |

522 | | |

523 | | const DIV10_EXP: i32 = 10; |

524 | | const DIV10_SIG: u32 = (1 << DIV10_EXP) / 10 + 1; |

525 | | const NEG10: u32 = (1 << 8) - 10; |

526 | | |

527 | | const ZEROS: u64 = 0x0101010101010101 * b'0' as u64; |

528 | | |

529 | | #[cfg_attr(feature = "no-panic", no_panic)] |

530 | 7.28k | fn to_bcd8(abcdefgh: u64) -> u64 { |

531 | | // An optimization from Xiang JunBo. |

532 | | // Three-step BCD conversion: base 10000 -> base 100 -> base 10. |

533 | | // The div and mod are evaluated simultaneously as, e.g. |

534 | | // ((abcdefgh / 10000) << 32) + (abcdefgh % 10000) |

535 | | // == abcdefgh + (2**32 - 10000) * (abcdefgh / 10000) |

536 | | // where the division on the RHS is implemented by the usual multiply + shift |

537 | | // trick and the fractional bits are masked away. |

538 | 7.28k | let abcd_efgh = |

539 | 7.28k | abcdefgh + u64::from(NEG10K) * ((abcdefgh * u64::from(DIV10K_SIG)) >> DIV10K_EXP); |

540 | 7.28k | let ab_cd_ef_gh = abcd_efgh |

541 | 7.28k | + u64::from(NEG100) * (((abcd_efgh * u64::from(DIV100_SIG)) >> DIV100_EXP) & 0x7f0000007f); |

542 | 7.28k | let a_b_c_d_e_f_g_h = ab_cd_ef_gh |

543 | 7.28k | + u64::from(NEG10) |

544 | 7.28k | * (((ab_cd_ef_gh * u64::from(DIV10_SIG)) >> DIV10_EXP) & 0xf000f000f000f); |

545 | 7.28k | a_b_c_d_e_f_g_h.to_be() |

546 | 7.28k | } |
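`to_bcd8` spreads eight decimal digits into eight bytes in three rounds (base 10000, base 100, base 10). Each round computes quotients with a multiply and shift, masks away the fractional bits, and folds the quotient back in with a constant such as `2^32 - 10000`. The sketch copies that arithmetic into standalone functions (`to_bcd8_packed` and `to_ascii8` are hypothetical names). It returns the packed word before the final `to_be()` and reads it with `u64::to_be_bytes`, so the digit order does not depend on the target's endianness.

```rust
const DIV10K_EXP: u32 = 40;
const DIV10K_SIG: u64 = (1u64 << DIV10K_EXP) / 10000 + 1;
const NEG10K: u64 = (1u64 << 32) - 10000;
const DIV100_EXP: u32 = 19;
const DIV100_SIG: u64 = (1 << DIV100_EXP) / 100 + 1;
const NEG100: u64 = (1 << 16) - 100;
const DIV10_EXP: u32 = 10;
const DIV10_SIG: u64 = (1 << DIV10_EXP) / 10 + 1;
const NEG10: u64 = (1 << 8) - 10;

/// One decimal digit of `n` (n < 10^8) per byte, most significant digit
/// in the most significant byte (the value `to_bcd8` has before `to_be()`).
fn to_bcd8_packed(n: u64) -> u64 {
    debug_assert!(n < 100_000_000);
    // abcd in the high 32-bit lane, efgh in the low lane.
    let abcd_efgh = n + NEG10K * ((n * DIV10K_SIG) >> DIV10K_EXP);
    // Each 32-bit lane split into two 16-bit lanes of two digits.
    let ab_cd_ef_gh =
        abcd_efgh + NEG100 * (((abcd_efgh * DIV100_SIG) >> DIV100_EXP) & 0x7f_0000_007f);
    // Each 16-bit lane split into two single-digit bytes.
    ab_cd_ef_gh + NEG10 * (((ab_cd_ef_gh * DIV10_SIG) >> DIV10_EXP) & 0x000f_000f_000f_000f)
}

/// Eight ASCII digits of `n`, zero-padded on the left.
fn to_ascii8(n: u64) -> [u8; 8] {
    let mut out = to_bcd8_packed(n).to_be_bytes();
    for b in &mut out {
        *b += b'0';
    }
    out
}
```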

547 | | |

548 | 7.28k | unsafe fn write_if(buffer: *mut u8, digit: u32, condition: bool) -> *mut u8 { |

549 | | unsafe { |

550 | 7.28k | *buffer = b'0' + digit as u8; |

551 | 7.28k | buffer.add(usize::from(condition)) |

552 | | } |

553 | 7.28k | } |
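`write_if` always stores the digit but only advances the output pointer when `condition` holds, so an unwanted leading digit is simply overwritten by the next write instead of being skipped with a branch. A slice-based sketch of the same idea, with a hypothetical index-returning signature in place of raw pointers:

```rust
/// Stores `digit` at `buf[pos]` unconditionally and returns the next write
/// position, which moves forward only if `condition` is true.
fn write_if(buf: &mut [u8], pos: usize, digit: u32, condition: bool) -> usize {
    buf[pos] = b'0' + digit as u8;
    pos + usize::from(condition)
}
```

In `write_significand`, the following `write8` then starts at the returned position, replacing the digit whenever it was not kept.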

554 | | |

555 | 7.28k | unsafe fn write8(buffer: *mut u8, value: u64) { |

556 | 7.28k | unsafe { |

557 | 7.28k | buffer.cast::<u64>().write_unaligned(value); |

558 | 7.28k | } |

559 | 7.28k | } |

560 | | |

561 | | // Writes a significand and removes trailing zeros. value has up to 17 decimal |

562 | | // digits (16-17 for normals) for double (num_bits == 64) and up to 9 digits |

563 | | // (8-9 for normals) for float. The significant digits start from buffer[1]. |

564 | | // buffer[0] may contain '0' after this function if the leading digit is zero. |

565 | | #[cfg_attr(feature = "no-panic", no_panic)] |

566 | | #[inline] |

567 | 7.28k | unsafe fn write_significand<Float>(mut buffer: *mut u8, value: u64, extra_digit: bool) -> *mut u8 |

568 | 7.28k | where |

569 | 7.28k | Float: FloatTraits, |

570 | | { |

571 | 7.28k | if Float::NUM_BITS == 32 { |

572 | 7.28k | buffer = unsafe { write_if(buffer, (value / 100_000_000) as u32, extra_digit) }; |

573 | 7.28k | let bcd = to_bcd8(value % 100_000_000); |

574 | | unsafe { |

575 | 7.28k | write8(buffer, bcd + ZEROS); |

576 | 7.28k | return buffer.add(count_trailing_nonzeros(bcd)); |

577 | | } |

578 | 0 | } |

579 | | |

580 | | #[cfg(not(any( |

581 | | all(target_arch = "aarch64", target_feature = "neon", not(miri)), |

582 | | all(target_arch = "x86_64", target_feature = "sse2", not(miri)), |

583 | | )))] |

584 | | { |

585 | | // Digits/pairs of digits are denoted by letters: value = abbccddeeffgghhii. |

586 | | let abbccddee = (value / 100_000_000) as u32; |

587 | | let ffgghhii = (value % 100_000_000) as u32; |

588 | | buffer = unsafe { write_if(buffer, abbccddee / 100_000_000, extra_digit) }; |

589 | | let bcd = to_bcd8(u64::from(abbccddee % 100_000_000)); |

590 | | unsafe { |

591 | | write8(buffer, bcd + ZEROS); |

592 | | } |

593 | | if ffgghhii == 0 { |

594 | | return unsafe { buffer.add(count_trailing_nonzeros(bcd)) }; |

595 | | } |

596 | | let bcd = to_bcd8(u64::from(ffgghhii)); |

597 | | unsafe { |

598 | | write8(buffer.add(8), bcd + ZEROS); |

599 | | buffer.add(8).add(count_trailing_nonzeros(bcd)) |

600 | | } |

601 | | } |

602 | | |

603 | | #[cfg(all(target_arch = "aarch64", target_feature = "neon", not(miri)))] |

604 | | { |

605 | | // An optimized version for NEON by Dougall Johnson. |

606 | | |

607 | | use core::arch::aarch64::*; |

608 | | |

609 | | const NEG10K: i32 = -10000 + 0x10000; |

610 | | |

611 | | #[repr(C, align(64))] |

612 | | struct Consts { |

613 | | mul_const: u64, |

614 | | hundred_million: u64, |

615 | | multipliers32: int32x4_t, |

616 | | multipliers16: int16x8_t, |

617 | | } |

618 | | |

619 | | static CONSTS: Consts = Consts { |

620 | | mul_const: 0xabcc77118461cefd, |

621 | | hundred_million: 100000000, |

622 | | multipliers32: unsafe { |

623 | | mem::transmute::<[i32; 4], int32x4_t>([ |

624 | | DIV10K_SIG as i32, |

625 | | NEG10K, |

626 | | (DIV100_SIG << 12) as i32, |

627 | | NEG100 as i32, |

628 | | ]) |

629 | | }, |

630 | | multipliers16: unsafe { |

631 | | mem::transmute::<[i16; 8], int16x8_t>([0xce0, NEG10 as i16, 0, 0, 0, 0, 0, 0]) |

632 | | }, |

633 | | }; |

634 | | |

635 | | let mut c = ptr::addr_of!(CONSTS); |

636 | | |

637 | | // Compiler barrier, or clang doesn't load from memory and generates 15 |

638 | | // more instructions. |

639 | | let c = unsafe { |

640 | | asm!("/*{0}*/", inout(reg) c); |

641 | | &*c |

642 | | }; |

643 | | |

644 | | let mut hundred_million = c.hundred_million; |

645 | | |

646 | | // Compiler barrier, or clang narrows the load to 32-bit and unpairs it. |

647 | | unsafe { |

648 | | asm!("/*{0}*/", inout(reg) hundred_million); |

649 | | } |

650 | | |

651 | | // Equivalent to abbccddee = value / 100000000, ffgghhii = value % 100000000. |

652 | | let abbccddee = (umul128(value, c.mul_const) >> 90) as u64; |

653 | | let ffgghhii = value - abbccddee * hundred_million; |

654 | | |

655 | | // We could probably make this part faster, but we prefer to |

656 | | // reuse the constants for now. |

657 | | let a = (umul128(abbccddee, c.mul_const) >> 90) as u64; |

658 | | let bbccddee = abbccddee - a * hundred_million; |

659 | | |

660 | | buffer = unsafe { write_if(buffer, a as u32, extra_digit) }; |

661 | | |

662 | | unsafe { |

663 | | let ffgghhii_bbccddee_64: uint64x1_t = |

664 | | mem::transmute::<u64, uint64x1_t>((ffgghhii << 32) | bbccddee); |

665 | | let bbccddee_ffgghhii: int32x2_t = vreinterpret_s32_u64(ffgghhii_bbccddee_64); |

666 | | |

667 | | let bbcc_ffgg: int32x2_t = vreinterpret_s32_u32(vshr_n_u32( |

668 | | vreinterpret_u32_s32(vqdmulh_n_s32( |

669 | | bbccddee_ffgghhii, |

670 | | mem::transmute::<int32x4_t, [i32; 4]>(c.multipliers32)[0], |

671 | | )), |

672 | | 9, |

673 | | )); |

674 | | let ddee_bbcc_hhii_ffgg_32: int32x2_t = vmla_n_s32( |

675 | | bbccddee_ffgghhii, |

676 | | bbcc_ffgg, |

677 | | mem::transmute::<int32x4_t, [i32; 4]>(c.multipliers32)[1], |

678 | | ); |

679 | | |

680 | | let mut ddee_bbcc_hhii_ffgg: int32x4_t = |

681 | | vreinterpretq_s32_u32(vshll_n_u16(vreinterpret_u16_s32(ddee_bbcc_hhii_ffgg_32), 0)); |

682 | | |

683 | | // Compiler barrier, or clang breaks the subsequent MLA into UADDW + |

684 | | // MUL. |

685 | | asm!("/*{:v}*/", inout(vreg) ddee_bbcc_hhii_ffgg); |

686 | | |

687 | | let dd_bb_hh_ff: int32x4_t = vqdmulhq_n_s32( |

688 | | ddee_bbcc_hhii_ffgg, |

689 | | mem::transmute::<int32x4_t, [i32; 4]>(c.multipliers32)[2], |

690 | | ); |

691 | | let ee_dd_cc_bb_ii_hh_gg_ff: int16x8_t = vreinterpretq_s16_s32(vmlaq_n_s32( |

692 | | ddee_bbcc_hhii_ffgg, |

693 | | dd_bb_hh_ff, |

694 | | mem::transmute::<int32x4_t, [i32; 4]>(c.multipliers32)[3], |

695 | | )); |

696 | | let high_10s: int16x8_t = vqdmulhq_n_s16( |

697 | | ee_dd_cc_bb_ii_hh_gg_ff, |

698 | | mem::transmute::<int16x8_t, [i16; 8]>(c.multipliers16)[0], |

699 | | ); |

700 | | let digits: uint8x16_t = vrev64q_u8(vreinterpretq_u8_s16(vmlaq_n_s16( |

701 | | ee_dd_cc_bb_ii_hh_gg_ff, |

702 | | high_10s, |

703 | | mem::transmute::<int16x8_t, [i16; 8]>(c.multipliers16)[1], |

704 | | ))); |

705 | | let str: uint16x8_t = vaddq_u16( |

706 | | vreinterpretq_u16_u8(digits), |

707 | | vreinterpretq_u16_s8(vdupq_n_s8(b'0' as i8)), |

708 | | ); |

709 | | |

710 | | buffer.cast::<uint16x8_t>().write_unaligned(str); |

711 | | |

712 | | let is_not_zero: uint16x8_t = |

713 | | vreinterpretq_u16_u8(vcgtzq_s8(vreinterpretq_s8_u8(digits))); |

714 | | let zeros: u64 = vget_lane_u64(vreinterpret_u64_u8(vshrn_n_u16(is_not_zero, 4)), 0); |

715 | | |

716 | | buffer.add(16 - (zeros.leading_zeros() as usize >> 2)) |

717 | | } |

718 | | } |

719 | | |
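The NEON block above replaces `value / 100000000` with a 64×64→128-bit multiply by `mul_const` and a right shift of 90, then recovers the remainder with one multiply-subtract against `hundred_million`. Below is a minimal scalar sketch of that identity. It assumes only that `mul_const` equals `ceil(2^90 / 10^8)`, which `0xabcc77118461cefd` does. Plain `u128` arithmetic stands in for the crate's `umul128`, and `div_1e8` is a hypothetical name.

```rust
/// ceil(2^90 / 10^8); the same value as `mul_const` in the NEON path.
const MUL_CONST: u64 = 0xabcc_7711_8461_cefd;

/// Hypothetical helper: value / 10^8 computed as the 128-bit product
/// value * MUL_CONST shifted right by 90 bits.
fn div_1e8(value: u64) -> u64 {
    ((value as u128 * MUL_CONST as u128) >> 90) as u64
}

/// Quotient and remainder, with the remainder recovered by one
/// multiply-subtract in the same way the NEON code computes `ffgghhii`.
fn divmod_1e8(value: u64) -> (u64, u64) {
    let q = div_1e8(value);
    (q, value - q * 100_000_000)
}
```

The constant overshoots `2^90 / 10^8` by less than 0.009. After the shift, the error is therefore below `10^-8` for every `u64` input, which is too small to push the result past the next integer. This is why a single multiply is enough here.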

720 | | #[cfg(all(target_arch = "x86_64", target_feature = "sse2", not(miri)))] |

721 | | { |

722 | | use crate::stdarch_x86::*; |

723 | | |

724 | 0 | let abbccddee = (value / 100_000_000) as u32; |

725 | 0 | let ffgghhii = (value % 100_000_000) as u32; |

726 | 0 | let a = abbccddee / 100_000_000; |

727 | 0 | let bbccddee = abbccddee % 100_000_000; |

728 | | |

729 | 0 | buffer = unsafe { write_if(buffer, a, extra_digit) }; |

730 | | |

731 | | #[repr(C, align(64))] |

732 | | struct Consts { |

733 | | div10k: u128, |

734 | | neg10k: u128, |

735 | | div100: u128, |

736 | | div10: u128, |

737 | | #[cfg(target_feature = "sse4.1")] |

738 | | neg100: u128, |

739 | | #[cfg(target_feature = "sse4.1")] |

740 | | neg10: u128, |

741 | | #[cfg(target_feature = "sse4.1")] |

742 | | bswap: u128, |

743 | | #[cfg(not(target_feature = "sse4.1"))] |

744 | | hundred: u128, |

745 | | #[cfg(not(target_feature = "sse4.1"))] |

746 | | moddiv10: u128, |

747 | | zeros: u128, |

748 | | } |

749 | | |

750 | | impl Consts { |

751 | 0 | const fn splat64(x: u64) -> u128 { |

752 | 0 | ((x as u128) << 64) | x as u128 |

753 | 0 | } |

754 | | |

755 | 0 | const fn splat32(x: u32) -> u128 { |

756 | 0 | Self::splat64(((x as u64) << 32) | x as u64) |

757 | 0 | } |

758 | | |

759 | 0 | const fn splat16(x: u16) -> u128 { |

760 | 0 | Self::splat32(((x as u32) << 16) | x as u32) |

761 | 0 | } |

762 | | |

763 | | #[cfg(target_feature = "sse4.1")] |

764 | | const fn pack8(a: u8, b: u8, c: u8, d: u8, e: u8, f: u8, g: u8, h: u8) -> u64 { |

765 | | ((h as u64) << 56) |

766 | | | ((g as u64) << 48) |

767 | | | ((f as u64) << 40) |

768 | | | ((e as u64) << 32) |

769 | | | ((d as u64) << 24) |

770 | | | ((c as u64) << 16) |

771 | | | ((b as u64) << 8) |

772 | | | a as u64 |

773 | | } |

774 | | } |

775 | | |

776 | | static CONSTS: Consts = Consts { |

777 | | div10k: Consts::splat64(DIV10K_SIG as u64), |

778 | | neg10k: Consts::splat64(NEG10K as u64), |

779 | | div100: Consts::splat32(DIV100_SIG), |

780 | | div10: Consts::splat16(((1u32 << 16) / 10 + 1) as u16), |

781 | | #[cfg(target_feature = "sse4.1")] |

782 | | neg100: Consts::splat32(NEG100), |

783 | | #[cfg(target_feature = "sse4.1")] |

784 | | neg10: Consts::splat16((1 << 8) - 10), |

785 | | #[cfg(target_feature = "sse4.1")] |

786 | | bswap: Consts::pack8(15, 14, 13, 12, 11, 10, 9, 8) as u128 |

787 | | | (Consts::pack8(7, 6, 5, 4, 3, 2, 1, 0) as u128) << 64, |

788 | | #[cfg(not(target_feature = "sse4.1"))] |

789 | | hundred: Consts::splat32(100), |

790 | | #[cfg(not(target_feature = "sse4.1"))] |

791 | | moddiv10: Consts::splat16(10 * (1 << 8) - 1), |

792 | | zeros: Consts::splat64(ZEROS), |

793 | | }; |

794 | | |
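The `div10` constant above, `(1 << 16) / 10 + 1 = 6554`, lets `_mm_mulhi_epu16(z, div10)` divide every 16-bit lane by 10, because that instruction keeps the upper 16 bits of each 32-bit lane product. The following scalar model of one lane is a sketch with hypothetical helper names. It checks that the identity holds for every lane value below 16384, which is well above the two-digit lane values this code feeds it.

```rust
/// `(1 << 16) / 10 + 1`, the per-lane value that `splat16` broadcasts into `div10`.
const DIV10_16: u32 = (1 << 16) / 10 + 1;

/// Hypothetical scalar model of one lane of `_mm_mulhi_epu16(z, div10)`:
/// the high 16 bits of the 32-bit product z * 6554.
fn mulhi_div10(z: u16) -> u16 {
    ((z as u32 * DIV10_16) >> 16) as u16
}

/// Quotient and remainder by 10; the remainder needs one multiply-subtract.
fn divmod10(z: u16) -> (u16, u16) {
    let q = mulhi_div10(z);
    (q, z - q * 10)
}
```

Because 6554 exceeds `2^16 / 10` by exactly 0.4, the error grows with `z`. The first wrong quotient appears at `z = 16389`.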

795 | 0 | let mut c = ptr::addr_of!(CONSTS); |

796 | | // Load constants from memory. |

797 | 0 | unsafe { |

798 | 0 | asm!("/*{0}*/", inout(reg) c); |

799 | 0 | } |

800 | | |

801 | 0 | let div10k = unsafe { _mm_load_si128(ptr::addr_of!((*c).div10k).cast::<__m128i>()) }; |

802 | 0 | let neg10k = unsafe { _mm_load_si128(ptr::addr_of!((*c).neg10k).cast::<__m128i>()) }; |

803 | 0 | let div100 = unsafe { _mm_load_si128(ptr::addr_of!((*c).div100).cast::<__m128i>()) }; |

804 | 0 | let div10 = unsafe { _mm_load_si128(ptr::addr_of!((*c).div10).cast::<__m128i>()) }; |

805 | | #[cfg(target_feature = "sse4.1")] |

806 | | let neg100 = unsafe { _mm_load_si128(ptr::addr_of!((*c).neg100).cast::<__m128i>()) }; |

807 | | #[cfg(target_feature = "sse4.1")] |

808 | | let neg10 = unsafe { _mm_load_si128(ptr::addr_of!((*c).neg10).cast::<__m128i>()) }; |

809 | | #[cfg(target_feature = "sse4.1")] |

810 | | let bswap = unsafe { _mm_load_si128(ptr::addr_of!((*c).bswap).cast::<__m128i>()) }; |

811 | | #[cfg(not(target_feature = "sse4.1"))] |

812 | 0 | let hundred = unsafe { _mm_load_si128(ptr::addr_of!((*c).hundred).cast::<__m128i>()) }; |

813 | | #[cfg(not(target_feature = "sse4.1"))] |

814 | 0 | let moddiv10 = unsafe { _mm_load_si128(ptr::addr_of!((*c).moddiv10).cast::<__m128i>()) }; |

815 | 0 | let zeros = unsafe { _mm_load_si128(ptr::addr_of!((*c).zeros).cast::<__m128i>()) }; |

816 | | |

817 | | // The BCD sequences are based on ones provided by Xiang JunBo. |

818 | | unsafe { |

819 | 0 | let x: __m128i = _mm_set_epi64x(i64::from(bbccddee), i64::from(ffgghhii)); |

820 | 0 | let y: __m128i = _mm_add_epi64( |

821 | 0 | x, |

822 | 0 | _mm_mul_epu32(neg10k, _mm_srli_epi64(_mm_mul_epu32(x, div10k), DIV10K_EXP)), |

823 | | ); |

824 | | |

825 | | #[cfg(target_feature = "sse4.1")] |

826 | | let bcd: __m128i = { |

827 | | // _mm_mullo_epi32 is SSE 4.1 |

828 | | let z: __m128i = _mm_add_epi64( |

829 | | y, |

830 | | _mm_mullo_epi32(neg100, _mm_srli_epi32(_mm_mulhi_epu16(y, div100), 3)), |

831 | | ); |

832 | | let big_endian_bcd: __m128i = |

833 | | _mm_add_epi64(z, _mm_mullo_epi16(neg10, _mm_mulhi_epu16(z, div10))); |

834 | | // SSSE3 |

835 | | _mm_shuffle_epi8(big_endian_bcd, bswap) |

836 | | }; |

837 | | |

838 | | #[cfg(not(target_feature = "sse4.1"))] |

839 | 0 | let bcd: __m128i = { |

840 | 0 | let y_div_100: __m128i = _mm_srli_epi16(_mm_mulhi_epu16(y, div100), 3); |

841 | 0 | let y_mod_100: __m128i = _mm_sub_epi16(y, _mm_mullo_epi16(y_div_100, hundred)); |

842 | 0 | let z: __m128i = _mm_or_si128(_mm_slli_epi32(y_mod_100, 16), y_div_100); |

843 | 0 | let bcd_shuffled: __m128i = _mm_sub_epi16( |

844 | 0 | _mm_slli_epi16(z, 8), |

845 | 0 | _mm_mullo_epi16(moddiv10, _mm_mulhi_epu16(z, div10)), |

846 | | ); |

847 | 0 | _mm_shuffle_epi32(bcd_shuffled, _MM_SHUFFLE(0, 1, 2, 3)) |

848 | | }; |

849 | | |

850 | 0 | let digits = _mm_or_si128(bcd, zeros); |

851 | | |

852 | | // Count leading zeros. |

853 | 0 | let mask128: __m128i = _mm_cmpgt_epi8(bcd, _mm_setzero_si128()); |

854 | 0 | let mask = _mm_movemask_epi8(mask128) as u32; |

855 | 0 | let len = 32 - mask.leading_zeros() as usize; |

856 | | |

857 | 0 | _mm_storeu_si128(buffer.cast::<__m128i>(), digits); |

858 | 0 | buffer.add(len) |

859 | | } |

860 | | } |

861 | 7.28k | } |

Unexecuted instantiation: zmij::write_significand::<f64> |

zmij::write_significand::<f32> |

|
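The SIMD paths in `write_significand` turn an 8-digit value into one decimal digit per byte. They repeatedly split each lane `v` into `v / d` (upper half) and `v % d` (lower half) with the identity `v + (v / d) * (2^(bits/2) - d)`. That is the shape of the `neg10 = (1 << 8) - 10` and `NEG10K = -10000 + 0x10000` multipliers. The sketch below is a scalar model of this lane-splitting scheme, not the crate's code: plain integer division replaces the reciprocal multiplies, and `split_lanes`, `to_bcd8_sketch` and `significant_len` are hypothetical names.

```rust
/// Hypothetical helper: in every `lane_bits`-wide lane of `x`, replace the lane
/// value v with (v / d) << (lane_bits / 2) | (v % d), computed as
/// v + (v / d) * (2^(lane_bits / 2) - d).
fn split_lanes(x: u64, lane_bits: u32, d: u64) -> u64 {
    let half = lane_bits / 2;
    let mask = if lane_bits == 64 { u64::MAX } else { (1u64 << lane_bits) - 1 };
    let mut out = 0;
    let mut shift = 0;
    while shift < 64 {
        let v = (x >> shift) & mask;
        out |= (v + (v / d) * ((1u64 << half) - d)) << shift;
        shift += lane_bits;
    }
    out
}

/// Hypothetical: the 8 decimal digits of `x` (< 10^8), one per byte, with the
/// most significant digit in the most significant byte ("big-endian BCD").
fn to_bcd8_sketch(x: u64) -> u64 {
    assert!(x < 100_000_000);
    let y = split_lanes(x, 64, 10_000); // 4-digit halves in two 32-bit lanes
    let z = split_lanes(y, 32, 100); // 2-digit values in four 16-bit lanes
    split_lanes(z, 16, 10) // single digits in eight bytes
}

/// Hypothetical: digits left after dropping trailing zero digits.
fn significant_len(bcd: u64) -> u32 {
    8 - bcd.trailing_zeros() / 8
}
```

In little-endian memory the most significant digit of this layout lands at the highest address. This matches the need for the `bswap` shuffle on the SSE4.1 path before storing. OR-ing `0x30` (ASCII `'0'`) into every byte then gives printable digits. For example, `(to_bcd8_sketch(12_345_600) | 0x3030_3030_3030_3030).to_be_bytes()` is `*b"12345600"`, and `significant_len` reports 6.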

862 | | |

863 | | struct ToDecimalResult { |

864 | | sig: i64, |

865 | | exp: i32, |

866 | | } |

867 | | |

868 | | #[cfg_attr(feature = "no-panic", no_panic)] |

869 | | #[inline] |

870 | 6 | fn to_decimal_schubfach<UInt>(bin_sig: UInt, bin_exp: i64, regular: bool) -> ToDecimalResult |

871 | 6 | where |

872 | 6 | UInt: traits::UInt, |

873 | | { |

874 | 6 | let num_bits = mem::size_of::<UInt>() as i32 * 8; |

875 | 6 | let dec_exp = compute_dec_exp(bin_exp as i32, regular); |

876 | 6 | let exp_shift = unsafe { compute_exp_shift::<UInt, false>(bin_exp as i32, dec_exp) }; |

877 | 6 | let mut pow10 = unsafe { POW10_SIGNIFICANDS.get_unchecked(-dec_exp) }; |

878 | | |

879 | | // Fall back to Schubfach to guarantee correctness in boundary cases. This |

880 | | // requires switching to strict overestimates of powers of 10. |

881 | 6 | if num_bits == 64 { |

882 | 0 | pow10.lo += 1; |

883 | 6 | } else { |

884 | 6 | pow10.hi += 1; |

885 | 6 | } |

886 | | |

887 | | // Shift the significand so that boundaries are integer. |

888 | | const BOUND_SHIFT: u32 = 2; |

889 | 6 | let bin_sig_shifted = bin_sig << BOUND_SHIFT; |

890 | | |

891 | | // Compute the estimates of lower and upper bounds of the rounding interval |

892 | | // by multiplying them by the power of 10 and applying modified rounding. |

893 | 6 | let lsb = bin_sig & UInt::from(1); |

894 | 6 | let lower = (bin_sig_shifted - (UInt::from(regular) + UInt::from(1))) << exp_shift; |

895 | 6 | let lower = umulhi_inexact_to_odd(pow10.hi, pow10.lo, lower) + lsb; |

896 | 6 | let upper = (bin_sig_shifted + UInt::from(2)) << exp_shift; |

897 | 6 | let upper = umulhi_inexact_to_odd(pow10.hi, pow10.lo, upper) - lsb; |

898 | | |

899 | | // The idea of using a single shorter candidate is by Cassio Neri. |

900 | | // It is less than or equal to the upper bound by construction. |

901 | 6 | let shorter = (upper >> BOUND_SHIFT) / UInt::from(10) * UInt::from(10); |

902 | 6 | if (shorter << BOUND_SHIFT) >= lower { |

903 | 6 | return ToDecimalResult { |

904 | 6 | sig: shorter.into() as i64, |

905 | 6 | exp: dec_exp, |

906 | 6 | }; |

907 | 0 | } |

908 | | |

909 | 0 | let scaled_sig = umulhi_inexact_to_odd(pow10.hi, pow10.lo, bin_sig_shifted << exp_shift); |

910 | 0 | let longer_below = scaled_sig >> BOUND_SHIFT; |

911 | 0 | let longer_above = longer_below + UInt::from(1); |

912 | | |

913 | | // Pick the closest of longer_below and longer_above and check if it's in |

914 | | // the rounding interval. |

915 | 0 | let cmp = scaled_sig |

916 | 0 | .wrapping_sub((longer_below + longer_above) << 1) |

917 | 0 | .to_signed(); |

918 | 0 | let below_closer = cmp < UInt::from(0).to_signed() |

919 | 0 | || (cmp == UInt::from(0).to_signed() && (longer_below & UInt::from(1)) == UInt::from(0)); |

920 | 0 | let below_in = (longer_below << BOUND_SHIFT) >= lower; |

921 | 0 | let dec_sig = if below_closer & below_in { |

922 | 0 | longer_below |

923 | | } else { |

924 | 0 | longer_above |

925 | | }; |

926 | 0 | ToDecimalResult { |

927 | 0 | sig: dec_sig.into() as i64, |

928 | 0 | exp: dec_exp, |

929 | 0 | } |

930 | 6 | } |

zmij::to_decimal_schubfach::<u32> |

Unexecuted instantiation: zmij::to_decimal_schubfach::<u64> |
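When the shorter candidate falls outside the rounding interval, `to_decimal_schubfach` picks between `longer_below` and `longer_above`. It compares the scaled significand with their midpoint and breaks exact ties toward the even candidate. The scalar model below is a sketch of that step under two simplifications. It uses `u64` in place of the generic `UInt`, and it takes `scaled_sig` and `lower` as already-computed fixed-point values with `BOUND_SHIFT = 2` fractional bits (units of 1/4). `pick_longer` is a hypothetical name.

```rust
/// Hypothetical scalar model of the longer-candidate choice: `scaled_sig`
/// and `lower` carry 2 fractional bits, as with BOUND_SHIFT = 2.
fn pick_longer(scaled_sig: u64, lower: u64) -> u64 {
    const BOUND_SHIFT: u32 = 2;
    let below = scaled_sig >> BOUND_SHIFT;
    let above = below + 1;
    // (below + above) << 1 is the midpoint below + 1/2, expressed in quarters.
    let cmp = scaled_sig.wrapping_sub((below + above) << 1) as i64;
    // Round half to even: on an exact tie, prefer the even candidate.
    let below_closer = cmp < 0 || (cmp == 0 && (below & 1) == 0);
    // The lower candidate is only usable if it lies inside the rounding interval.
    let below_in = (below << BOUND_SHIFT) >= lower;
    if below_closer && below_in { below } else { above }
}
```

For instance, 7.25 (`4 * 7 + 1`) rounds to 7 and 7.5 rounds to 8 because 7 is odd. However, 7.25 also becomes 8 if the lower bound is 7.5 (`30` in quarters), since 7 then lies outside the interval.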

931 | | |

932 | | // Here be 🐉s. |

933 | | // Converts a binary FP number bin_sig * 2**bin_exp to the shortest decimal |

934 | | // representation, where bin_exp = raw_exp - exp_offset. |

935 | | #[cfg_attr(feature = "no-panic", no_panic)] |

936 | | #[inline] |

937 | 7.28k | fn to_decimal_fast<Float, UInt>(bin_sig: UInt, raw_exp: i64, regular: bool) -> ToDecimalResult |

938 | 7.28k | where |

939 | 7.28k | Float: FloatTraits, |

940 | 7.28k | UInt: traits::UInt, |

941 | | { |

942 | 7.28k | let bin_exp = raw_exp - i64::from(Float::EXP_OFFSET); |

943 | 7.28k | let num_bits = mem::size_of::<UInt>() as i32 * 8; |

944 | | // An optimization from yy by Yaoyuan Guo: |

945 | 7.28k | while regular { |

946 | 7.28k | let dec_exp = if USE_UMUL128_HI64 { |

947 | 0 | umul128_hi64(bin_exp as u64, 0x4d10500000000000) as i32 |

948 | | } else { |

949 | 7.28k | compute_dec_exp(bin_exp as i32, true) |

950 | | }; |

951 | 7.28k | let exp_shift = unsafe { compute_exp_shift::<UInt, true>(bin_exp as i32, dec_exp) }; |

952 | 7.28k | let pow10 = unsafe { POW10_SIGNIFICANDS.get_unchecked(-dec_exp) }; |

953 | | |

954 | | let integral; // integral part of bin_sig * pow10 |

955 | | let fractional; // fractional part of bin_sig * pow10 |

956 | 7.28k | if num_bits == 64 { |

957 | 0 | let p = umul192_hi128(pow10.hi, pow10.lo, (bin_sig << exp_shift).into()); |

958 | 0 | integral = UInt::truncate(p.hi); |

959 | 0 | fractional = p.lo; |

960 | 7.28k | } else { |

961 | 7.28k | let p = umul128(pow10.hi, (bin_sig << exp_shift).into()); |

962 | 7.28k | integral = UInt::truncate((p >> 64) as u64); |

963 | 7.28k | fractional = p as u64; |

964 | 7.28k | } |

965 | | const HALF_ULP: u64 = 1 << 63; |

966 | | |

967 | | // Exact half-ulp tie when rounding to nearest integer. |

968 | 7.28k | let cmp = fractional.wrapping_sub(HALF_ULP) as i64; |

969 | 7.28k | if cmp == 0 { |

970 | 6 | break; |

971 | 7.28k | } |

972 | | |

973 | | // An optimization of integral % 10 by Dougall Johnson. Relies on range |

974 | | // calculation: (max_bin_sig << max_exp_shift) * max_u128. |

975 | | // (1 << 63) / 5 == (1 << 64) / 10 without an intermediate int128. |

976 | | const DIV10_SIG64: u64 = (1 << 63) / 5 + 1; |

977 | 7.28k | let div10 = umul128_hi64(integral.into(), DIV10_SIG64); |

978 | | #[allow(unused_mut)] |

979 | 7.28k | let mut digit = integral.into() - div10 * 10; |

980 | | // or it narrows to 32-bit and doesn't use madd/msub |

981 | | #[cfg(all(any(target_arch = "aarch64", target_arch = "x86_64"), not(miri)))] |

982 | 7.28k | unsafe { |

983 | 7.28k | asm!("/*{0}*/", inout(reg) digit); |

984 | 7.28k | } |

985 | | |

986 | | // Switch to a fixed-point representation with the least significant |

987 | | // integral digit in the upper bits and fractional digits in the lower |

988 | | // bits. |

989 | 7.28k | let num_integral_bits = if num_bits == 64 { 4 } else { 32 }; |

990 | 7.28k | let num_fractional_bits = 64 - num_integral_bits; |

991 | 7.28k | let ten = 10u64 << num_fractional_bits; |

992 | | // Fixed-point remainder of the scaled significand modulo 10. |

993 | 7.28k | let scaled_sig_mod10 = (digit << num_fractional_bits) | (fractional >> num_integral_bits); |

994 | | |

995 | | // scaled_half_ulp = 0.5 * pow10 in the fixed-point format. |

996 | | // dec_exp is chosen so that 10**dec_exp <= 2**bin_exp < 10**(dec_exp + 1). |

997 | | // Since 1ulp == 2**bin_exp it will be in the range [1, 10) after scaling |

998 | | // by 10**dec_exp. Add 1 to combine the shift with division by two. |

999 | 7.28k | let scaled_half_ulp = pow10.hi >> (num_integral_bits - exp_shift + 1); |

1000 | 7.28k | let upper = scaled_sig_mod10 + scaled_half_ulp; |

1001 | | |

1002 | | // value = 5.0507837461e-27 |

1003 | | // next = 5.0507837461000010e-27 |

1004 | | // |

1005 | | // c = integral.fractional' = 50507837461000003.153987... (value) |

1006 | | // 50507837461000010.328635... (next) |

1007 | | // scaled_half_ulp = 3.587324... |

1008 | | // |

1009 | | // fractional' = fractional / 2**64, fractional = 2840565642863009226 |

1010 | | // |

1011 | | // 50507837461000000 c upper 50507837461000010 |

1012 | | // s l| L | S |

1013 | | // ───┬────┬────┼────┬────┬────┼*-──┼────┬────┬───*┬────┬────┬────┼-*--┬─── |

1014 | | // 8 9 0 1 2 3 4 5 6 7 8 9 0 | 1 |

1015 | | // └─────────────────┼─────────────────┘ next |

1016 | | // 1ulp |

1017 | | // |

1018 | | // s - shorter underestimate, S - shorter overestimate |

1019 | | // l - longer underestimate, L - longer overestimate |

1020 | | |

1021 | | // Check for boundary case when rounding down to nearest 10 and |

1022 | | // near-boundary case when rounding up to nearest 10. |

1023 | | // Case where upper == ten is insufficient: 1.342178e+08f. |

1024 | 7.28k | if ten.wrapping_sub(upper) <= 1 // upper == ten || upper == ten - 1 |

1025 | 7.28k | || scaled_sig_mod10 == scaled_half_ulp |

1026 | | { |

1027 | 0 | break; |

1028 | 7.28k | } |

1029 | | |

1030 | 7.28k | let shorter = (integral.into() - digit) as i64; |

1031 | 7.28k | let longer = (integral.into() + u64::from(cmp >= 0)) as i64; |

1032 | 7.28k | let dec_sig = select_if_less(scaled_sig_mod10, scaled_half_ulp, shorter, longer); |

1033 | 7.28k | return ToDecimalResult { |

1034 | 7.28k | sig: select_if_less(ten, upper, shorter + 10, dec_sig), |

1035 | 7.28k | exp: dec_exp, |

1036 | 7.28k | }; |

1037 | | } |

1038 | 6 | to_decimal_schubfach(bin_sig, bin_exp, regular) |

1039 | 7.28k | } |

Unexecuted instantiation: zmij::to_decimal_fast::<f64, u64> |

zmij::to_decimal_fast::<f32, u32> |

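The `USE_UMUL128_HI64` branch on line 947 computes `dec_exp` as the high 64 bits of `bin_exp * 0x4d10500000000000`. Because `0x4d10500000000000 / 2**64 == 315653 / 2**20 ≈ 0.3010299`, this is a fixed-point evaluation of `floor(bin_exp * log10(2))`, which matches the line 996 comment that `10**dec_exp <= 2**bin_exp < 10**(dec_exp + 1)`. The sketch below reproduces that branch in safe Rust and compares it with an exact integer reference. `dec_exp_fixed` and `dec_exp_exact` are hypothetical helpers, not zmij functions, and the checked range `|e| <= 127` is chosen only so that `2**|e|` fits in a `u128`; it does not establish the full exponent range zmij relies on.

```rust
/// Mirrors line 947: high 64 bits of `bin_exp * 0x4d10500000000000`, truncated to i32.
/// Negative exponents wrap to large u64 values; since the low 44 bits of the
/// constant are zero, the low 32 bits of the wrapped result are still
/// floor(bin_exp * 315653 / 2**20) in two's complement.
fn dec_exp_fixed(bin_exp: i64) -> i32 {
    let product = u128::from(bin_exp as u64) * 0x4d10500000000000u128;
    (product >> 64) as i32 // `as` truncates to the low 32 bits
}

/// Exact floor(e * log10(2)) for |e| <= 127: the largest k with 10**k <= 2**e.
fn dec_exp_exact(e: i32) -> i32 {
    let num_digits = |n: u128| n.to_string().len() as i32;
    if e >= 0 {
        num_digits(1u128 << e) - 1
    } else {
        // 2**|e| is never a power of ten here, so 10**(d-1) < 2**|e| < 10**d.
        -num_digits(1u128 << -e)
    }
}
```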

1040 | | |
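Lines 973-979 replace `integral % 10` with a multiply-high. `DIV10_SIG64 = (1 << 63) / 5 + 1` equals `ceil(2**64 / 10)`: writing `2**64 = 10q + 6`, the constant is `q + 1 = (2**64 + 4) / 10`, so the high 64 bits of `x * DIV10_SIG64` overshoot `x / 10` by `0.4 * x / 2**64`. The fractional part of `x / 10` is at most 0.9, so the quotient stays exact while that overshoot is below 0.1, that is for `x < 2**62`; the remainder is then `x - div10 * 10`. The sketch below checks that bound with a simple LCG. `umul128_hi64` here is a local stand-in for the crate's function, and `div_mod10` is a hypothetical helper; the bound zmij actually relies on comes from the range calculation named on line 974, which is not reproduced.

```rust
/// ceil(2**64 / 10), computed as on line 976 without a 128-bit intermediate.
const DIV10_SIG64: u64 = (1 << 63) / 5 + 1;

/// Local stand-in for `umul128_hi64`: high 64 bits of a 64x64-bit product.
fn umul128_hi64(x: u64, y: u64) -> u64 {
    ((u128::from(x) * u128::from(y)) >> 64) as u64
}

/// Returns (x / 10, x % 10) using a multiply-high instead of a divide.
/// Exact for x < 2**62.
fn div_mod10(x: u64) -> (u64, u64) {
    let div10 = umul128_hi64(x, DIV10_SIG64);
    (div10, x - div10 * 10)
}
```

The empty `asm!` on line 983 is unrelated to correctness: per the line 980 comment, it keeps `digit` as a 64-bit value so the compiler does not narrow it to 32 bits and can use madd/msub.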

1041 | | /// Writes the shortest correctly rounded decimal representation of `value` to |

1042 | | /// `buffer`. `buffer` should point to a buffer of size `buffer_size` or larger. |

1043 | | #[cfg_attr(feature = "no-panic", no_panic)] |

1044 | 22.0k | unsafe fn write<Float>(value: Float, mut buffer: *mut u8) -> *mut u8 |

1045 | 22.0k | where |

1046 | 22.0k | Float: FloatTraits, |

1047 | | { |

1048 | 22.0k | let bits = value.to_bits(); |

1049 | | // It is beneficial to extract exponent and significand early. |

1050 | 22.0k | let bin_exp = Float::get_exp(bits); // binary exponent |

1051 | 22.0k | let bin_sig = Float::get_sig(bits); // binary significand |

1052 | | |

1053 | 22.0k | unsafe { |

1054 | 22.0k | *buffer = b'-'; |

1055 | 22.0k | } |

1056 | 22.0k | buffer = unsafe { buffer.add(usize::from(Float::is_negative(bits))) }; |

1057 | | |
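`write` extracts the exponent and significand fields up front (lines 1048-1051) and handles the sign without a branch (lines 1053-1056): it always stores `'-'` and advances the output pointer by `is_negative` as 0 or 1, so for a non-negative value the `'-'` is simply overwritten by the output written afterwards. The sketch below shows the same steps for `f64` in safe Rust. `decompose` and `write_sign` are hypothetical stand-ins for `Float::get_exp`, `Float::get_sig` and `Float::is_negative`, whose definitions are not shown here; the field layout assumed is IEEE 754 binary64 (1 sign bit, 11 exponent bits, 52 significand bits).

```rust
/// Splits an f64 into (sign, biased exponent, stored significand bits),
/// following the IEEE 754 binary64 layout.
fn decompose(value: f64) -> (bool, u64, u64) {
    let bits = value.to_bits();
    let is_negative = (bits >> 63) != 0; // 1 sign bit
    let bin_exp = (bits >> 52) & 0x7ff; // 11-bit biased exponent
    let bin_sig = bits & ((1u64 << 52) - 1); // 52 stored bits, no implicit 1
    (is_negative, bin_exp, bin_sig)
}

/// Branchless sign: always store '-', then return how far to advance (0 or 1).
fn write_sign(buf: &mut [u8], value: f64) -> usize {
    buf[0] = b'-';
    usize::from(decompose(value).0)
}
```

A biased exponent of 0 marks zero or a subnormal, which is why `write` tests `bin_exp == 0` (line 1064) and only ORs in `Float::IMPLICIT_BIT` on the normal path (line 1080).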

1058 | | let mut dec; |

1059 | 22.0k | let threshold = if Float::NUM_BITS == 64 { |

1060 | 5.08k | 10_000_000_000_000_000 |

1061 | | } else { |

1062 | 16.9k | 100_000_000 |

1063 | | }; |

1064 | 22.0k | if bin_exp == 0 { |

1065 | 14.7k | if bin_sig == Float::SigType::from(0) { |

1066 | | return unsafe { |

1067 | 14.7k | *buffer = b'0'; |

1068 | 14.7k | *buffer.add(1) = b'.'; |

1069 | 14.7k | *buffer.add(2) = b'0'; |

1070 | 14.7k | buffer.add(3) |

1071 | | }; |

1072 | 0 | } |

1073 | 0 | dec = to_decimal_schubfach(bin_sig, i64::from(1 - Float::EXP_OFFSET), true); |

1074 | 0 | while dec.sig < threshold { |

1075 | 0 | dec.sig *= 10; |

1076 | 0 | dec.exp -= 1; |

1077 | 0 | } |

1078 | 7.28k | } else { |

1079 | 7.28k | dec = to_decimal_fast::<Float, Float::SigType>( |

1080 | 7.28k | bin_sig | Float::IMPLICIT_BIT, |

1081 | 7.28k | bin_exp, |

1082 | 7.28k | bin_sig != Float::SigType::from(0), |

1083 | 7.28k | ); |

1084 | 7.28k | } |

1085 | 7.28k | let mut dec_exp = dec.exp; |

1086 | 7.28k | let extra_digit = dec.sig >= threshold; |

1087 | 7.28k | dec_exp += Float::MAX_DIGITS10 as i32 - 2 + i32::from(extra_digit); |

1088 | 7.28k | if Float::NUM_BITS == 32 && dec.sig < 10_000_000 { |

1089 | 0 | dec.sig *= 10; |

1090 | 0 | dec_exp -= 1; |

1091 | 7.28k | } |

1092 | | |
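After rounding, the value is `dec.sig * 10**dec.exp`, but the layout code needs the exponent of the leading digit. Lines 1086-1087 get it with a fixed offset: assuming `Float::MAX_DIGITS10` is 17 for `f64` (the `std::numeric_limits<double>::max_digits10` value) and that the significand has 16 or 17 digits, the offset is `17 - 2 = 15`, plus one when `dec.sig >= threshold`. The zero-or-subnormal path (lines 1073-1077) first pads the significand with zeros until it reaches `threshold`. The sketch below checks both steps against a direct digit count for `f64`; `pad_to_threshold`, `leading_exp_f64` and `leading_exp_direct` are hypothetical names, and the inputs in the usage lines are illustrative, not values traced from zmij.

```rust
const THRESHOLD_F64: u64 = 10_000_000_000_000_000; // 10**16, lines 1059-1060
const MAX_DIGITS10_F64: i32 = 17; // assumed value of Float::MAX_DIGITS10 for f64

/// Lines 1074-1077: append zeros until sig >= 10**16; sig * 10**exp is unchanged.
/// `sig` must be nonzero (zero is handled earlier, lines 1065-1071).
fn pad_to_threshold(mut sig: u64, mut exp: i32) -> (u64, i32) {
    while sig < THRESHOLD_F64 {
        sig *= 10;
        exp -= 1;
    }
    (sig, exp)
}

/// Lines 1086-1087: exponent of the leading digit of a 16- or 17-digit significand.
fn leading_exp_f64(sig: u64, exp: i32) -> i32 {
    let extra_digit = sig >= THRESHOLD_F64;
    exp + MAX_DIGITS10_F64 - 2 + i32::from(extra_digit)
}

/// Reference: exponent of the leading digit from an explicit digit count.
fn leading_exp_direct(sig: u64, exp: i32) -> i32 {
    exp + sig.to_string().len() as i32 - 1
}
```

For example, `1.234` as the 16-digit significand `1_234_000_000_000_000` with `exp = -15` gives a leading exponent of 0 either way.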

1093 | | // Write significand. |

1094 | 7.28k | let end = unsafe { write_significand::<Float>(buffer.add(1), dec.sig as u64, extra_digit) }; |

1095 | | |

1096 | 7.28k | let length = unsafe { end.offset_from(buffer.add(1)) } as usize; |

1097 | | |

1098 | 7.28k | if Float::NUM_BITS == 32 && (-6..=12).contains(&dec_exp) |

1099 | 0 | || Float::NUM_BITS == 64 && (-5..=15).contains(&dec_exp) |

1100 | | { |

1101 | 7.28k | if length as i32 - 1 <= dec_exp { |

1102 | | // 1234e7 -> 12340000000.0 |

1103 | | return unsafe { |

1104 | 1.27k | ptr::copy(buffer.add(1), buffer, length); |

1105 | 1.27k | ptr::write_bytes(buffer.add(length), b'0', dec_exp as usize + 3 - length); |

1106 | 1.27k | *buffer.add(dec_exp as usize + 1) = b'.'; |

1107 | 1.27k | buffer.add(dec_exp as usize + 3) |

1108 | | }; |

1109 | 6.01k | } else if 0 <= dec_exp { |

1110 | | // 1234e-2 -> 12.34 |

1111 | | return unsafe { |

1112 | 4.74k | ptr::copy(buffer.add(1), buffer, dec_exp as usize + 1); |

1113 | 4.74k | *buffer.add(dec_exp as usize + 1) = b'.'; |

1114 | 4.74k | buffer.add(length + 1) |

1115 | | }; |

1116 | | } else { |

1117 | | // 1234e-6 -> 0.001234 |

1118 | | return unsafe { |

1119 | 1.27k | ptr::copy(buffer.add(1), buffer.add((1 - dec_exp) as usize), length); |

1120 | 1.27k | ptr::write_bytes(buffer, b'0', (1 - dec_exp) as usize); |

1121 | 1.27k | *buffer.add(1) = b'.'; |

1122 | 1.27k | buffer.add((1 - dec_exp) as usize + length) |

1123 | | }; |

1124 | | } |

1125 | 0 | } |

1126 | | |
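With `dec_exp` holding the exponent of the leading digit, lines 1098-1124 pick one of three fixed-notation layouts when `dec_exp` lies in `-5..=15` for `f64` (or `-6..=12` for `f32`), moving the digits already written at `buffer + 1` in place with `ptr::copy` and `ptr::write_bytes`. The sketch below reproduces only the resulting strings for the `f64` window using safe `String` operations. `fixed_f64` is a hypothetical helper, and `digits` is assumed to hold the significant digits without trailing zeros.

```rust
/// Hypothetical safe version of the fixed-notation branch for f64.
/// The value is d.ddd * 10**dec_exp, where `digits` = "dddd".
/// Returns None when dec_exp is outside the fixed-notation window.
fn fixed_f64(digits: &str, dec_exp: i32) -> Option<String> {
    if !(-5..=15).contains(&dec_exp) {
        return None;
    }
    let len = digits.len() as i32;
    Some(if len - 1 <= dec_exp {
        // 1234e7 -> 12340000000.0: every digit is integral, pad and add ".0".
        format!("{}{}.0", digits, "0".repeat((dec_exp + 1 - len) as usize))
    } else if dec_exp >= 0 {
        // 1234e-2 -> 12.34: the decimal point falls inside the digits.
        let (int, frac) = digits.split_at(dec_exp as usize + 1);
        format!("{int}.{frac}")
    } else {
        // 1234e-6 -> 0.001234: "0." followed by -dec_exp - 1 zeros.
        format!("0.{}{}", "0".repeat((-dec_exp - 1) as usize), digits)
    })
}
```

For instance, `fixed_f64("1234", 0)` yields `"1.234"`, the output shown in the crate-level example for `buffer.format(1.234)`.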

1127 | 0 | unsafe { |

1128 | 0 | // 1234e30 -> 1.234e33 |

1129 | 0 | *buffer = *buffer.add(1); |

1130 | 0 | *buffer.add(1) = b'.'; |

1131 | 0 | } |

1132 | 0 | buffer = unsafe { buffer.add(length + usize::from(length > 1)) }; |

1133 | | |

1134 | | // Write exponent. |

1135 | 0 | let sign_ptr = buffer; |

1136 | 0 | let e_sign = if dec_exp >= 0 { |

1137 | 0 | (u16::from(b'+') << 8) | u16::from(b'e') |

1138 | | } else { |

1139 | 0 | (u16::from(b'-') << 8) | u16::from(b'e') |

1140 | | }; |

1141 | 0 | buffer = unsafe { buffer.add(1) }; |

1142 | 0 | dec_exp = if dec_exp >= 0 { dec_exp } else { -dec_exp }; |

1143 | 0 | buffer = unsafe { buffer.add(usize::from(dec_exp >= 10)) }; |

1144 | 0 | if Float::MIN_10_EXP > -100 && Float::MAX_10_EXP < 100 { |

1145 | | unsafe { |

1146 | 0 | buffer |

1147 | 0 | .cast::<u16>() |

1148 | 0 | .write_unaligned(*digits2(dec_exp as usize)); |

1149 | 0 | sign_ptr.cast::<u16>().write_unaligned(e_sign.to_le()); |

1150 | 0 | return buffer.add(2); |

1151 | | } |

1152 | 0 | } |

1153 | | // digit = dec_exp / 100 |

1154 | 0 | let digit = if USE_UMUL128_HI64 { |

1155 | 0 | umul128_hi64(dec_exp as u64, 0x290000000000000) as u32 |

1156 | | } else { |

1157 | 0 | (dec_exp as u32 * DIV100_SIG) >> DIV100_EXP |

1158 | | }; |

1159 | 0 | unsafe { |

1160 | 0 | *buffer = b'0' + digit as u8; |

1161 | 0 | } |

1162 | 0 | buffer = unsafe { buffer.add(usize::from(dec_exp >= 100)) }; |

1163 | | unsafe { |

1164 | 0 | buffer |

1165 | 0 | .cast::<u16>() |

1166 | 0 | .write_unaligned(*digits2((dec_exp as u32 - digit * 100) as usize)); |

1167 | 0 | sign_ptr.cast::<u16>().write_unaligned(e_sign.to_le()); |

1168 | 0 | buffer.add(2) |

1169 | | } |
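When `dec_exp` falls outside the range checked on lines 1098-1099, lines 1127-1168 emit scientific notation, and the exponent is written with overlapping stores. A two-digit `digits2` entry is stored starting one byte after `'e'` (two bytes after when `dec_exp >= 10`), and the `'e'` and sign bytes are stored last as a single `u16`, which overwrites the leading `'0'` of a single-digit exponent. The result always has a sign and no zero padding, such as `e+33` or `e-7`. The sketch below replays that store order on a byte slice. `digits2` here is a hypothetical arithmetic stand-in for the crate's lookup table, and separate byte stores replace the unaligned `u16` writes.

```rust
/// Hypothetical stand-in for the `digits2` table: the two ASCII digits of n < 100.
fn digits2(n: usize) -> [u8; 2] {
    [b'0' + (n / 10) as u8, b'0' + (n % 10) as u8]
}

/// Writes "e", a sign and 1 to 3 exponent digits at buf[pos..], in the same
/// store order as lines 1135-1168; returns the end position.
/// Assumes |dec_exp| < 1000 and buf.len() >= pos + 5.
fn write_exponent(buf: &mut [u8], pos: usize, dec_exp: i32) -> usize {
    let sign = if dec_exp >= 0 { b'+' } else { b'-' };
    let e = dec_exp.unsigned_abs() as usize;
    // Start at the sign slot (pos + 1), or one byte later for exponents >= 10.
    let mut p = pos + 1 + usize::from(e >= 10);
    // Hundreds digit; overwritten by the two-digit store below unless e >= 100.
    buf[p] = b'0' + (e / 100) as u8;
    p += usize::from(e >= 100);
    buf[p..p + 2].copy_from_slice(&digits2(e % 100));
    // Stored last: for e < 10 the sign replaces the leading '0' from digits2.
    buf[pos] = b'e';
    buf[pos + 1] = sign;
    p + 2
}
```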