Project overview: llamacpp

High level conclusions

Reachability and coverage overview

Project functions overview

The following table shows data about each function in the project. It includes every function present in the fuzzer executables, so some entries may come from third-party libraries.

For further technical details on the meaning of the columns in the table below, see the Glossary.

| Func name | Functions filename | Args | Function call depth | Reached by Fuzzers | Runtime reached by Fuzzers | Combined reached by Fuzzers | Fuzzers runtime hit | Func lines hit % | I Count | BB Count | Cyclomatic complexity | Functions reached | Reached by functions | Accumulated cyclomatic complexity | Undiscovered complexity |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

Fuzzer details

Fuzzer: fuzz_grammar

Call tree

The calltree shows the control flow of the fuzzer. It is overlaid with coverage information that shows how much of the code the fuzzer can potentially reach is actually covered at runtime. The report also links to a detailed calltree visualisation and includes a bitmap giving a high-level view of the calltree. For further information, see the glossary entries for full calltree and calltree overview.


The distribution of callsites by coverage color is:

| Color | Runtime hitcount | Callsite count | Percentage |
|---|---|---|---|
| red | 0 | 465 | 82.7% |
| gold | [1:9] | 32 | 5.69% |
| yellow | [10:29] | 17 | 3.02% |
| greenyellow | [30:49] | 0 | 0.0% |
| lawngreen | 50+ | 48 | 8.54% |
| All colors | - | 562 | 100% |
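The color of a callsite depends only on its runtime hit count. The following minimal sketch reproduces the bucketing and the percentage column. It assumes the bucket boundaries shown in the table, and the helper names hit_color and color_distribution are hypothetical, not Fuzz Introspector's API.

The report's percentages look truncated rather than rounded. For example, 15 of 95 callsites is listed as 15.7% in the fuzz_apply_template table, where rounding would give 15.8%. For that reason the sketch prints two decimals and does not try to match those strings.

```python
from collections import Counter
from typing import Dict, List, Tuple

# Upper bound (inclusive) of each bucket, taken from the table above.
# Hit counts of 50 or more fall through to "lawngreen".
BUCKETS: List[Tuple[int, str]] = [
    (0, "red"),
    (9, "gold"),
    (29, "yellow"),
    (49, "greenyellow"),
]
ALL_COLORS = [color for _, color in BUCKETS] + ["lawngreen"]


def hit_color(hits: int) -> str:
    """Map a callsite's runtime hit count to its color bucket."""
    for upper, color in BUCKETS:
        if hits <= upper:
            return color
    return "lawngreen"


def color_distribution(hitcounts: List[int]) -> Dict[str, Tuple[int, float]]:
    """Return {color: (callsite count, percentage of all callsites)}."""
    counts = Counter(hit_color(h) for h in hitcounts)
    total = max(len(hitcounts), 1)  # avoid dividing by zero for an empty calltree
    return {c: (counts[c], 100.0 * counts[c] / total) for c in ALL_COLORS}


# Synthetic hit counts chosen to match the fuzz_grammar callsite counts.
sample = [0] * 465 + [1] * 32 + [10] * 17 + [50] * 48
for color, (n, pct) in color_distribution(sample).items():
    print(f"{color:12} {n:4} {pct:6.2f}%")
```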

Fuzz blockers

The following nodes represent call sites where fuzz blockers occur.

| Amount of callsites blocked | Calltree index | Parent function | Callsite | Largest blocked function |
|---|---|---|---|---|
| 420 | 64 | parse_int(char const*) | call site: 00064 | ggml_abort |
| 5 | 26 | llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string | call site: 00026 | __cxa_allocate_exception |
| 5 | 38 | parse_char(char const*) | call site: 00038 | __cxa_allocate_exception |
| 5 | 50 | llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string | call site: 00050 | __cxa_allocate_exception |
| 4 | 7 | is_word_char(char) | call site: 00007 | __cxa_allocate_exception |
| 4 | 58 | llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string | call site: 00058 | __cxa_allocate_exception |
| 4 | 506 | llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string | call site: 00506 | __cxa_allocate_exception |
| 4 | 519 | llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string | call site: 00519 | __cxa_allocate_exception |
| 4 | 529 | llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string | call site: 00529 | __cxa_allocate_exception |
| 4 | 539 | llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string | call site: 00539 | __cxa_allocate_exception |
| 4 | 549 | llama_grammar_parser::parse_rule(char const*) | call site: 00549 | __cxa_allocate_exception |
| 1 | 32 | parse_char(char const*) | call site: 00032 | |
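Conceptually, a fuzz blocker is a callsite the fuzzer never executes even though its parent was executed. Everything beneath it in the calltree is therefore unreached at runtime.

The following simplified sketch rests on several assumptions:

- The calltree is a flat, depth-first list of nodes, indexed as in the "Calltree index" column.
- The blocked amount counts the callsite itself plus its whole subtree.
- The toy calltree and its structure are invented for illustration.

Fuzz Introspector's actual algorithm may differ.

```python
from typing import List, NamedTuple, Tuple


class Node(NamedTuple):
    index: int   # position in the depth-first calltree ("calltree index")
    depth: int   # nesting depth of the callsite
    hits: int    # runtime hit count of the callsite
    callee: str  # function called at this callsite


def find_blockers(calltree: List[Node]) -> List[Tuple[int, int, str]]:
    """Return (callsites blocked, calltree index, callee) for each blocker.

    Assumed rule: a never-hit node is a blocker when its parent was hit
    (or it has no parent). The blocked amount is the node plus every
    following node nested deeper than it, i.e. its whole subtree.
    """
    blockers = []
    for i, node in enumerate(calltree):
        if node.hits > 0:
            continue
        # In a depth-first list, the parent is the closest earlier node
        # with a smaller depth.
        parent = next(
            (p for p in reversed(calltree[:i]) if p.depth < node.depth), None
        )
        if parent is not None and parent.hits == 0:
            continue  # already inside another blocker's subtree
        size = 1
        for later in calltree[i + 1:]:
            if later.depth <= node.depth:
                break
            size += 1
        blockers.append((size, node.index, node.callee))
    # Largest blockers first; ties keep calltree order (the sort is stable).
    blockers.sort(key=lambda b: b[0], reverse=True)
    return blockers


# A toy calltree whose structure is invented for illustration.
tree = [
    Node(0, 0, 1, "LLVMFuzzerTestOneInput"),
    Node(1, 1, 1, "parse"),
    Node(2, 2, 0, "parse_int"),    # never hit: blocks itself and 2 children
    Node(3, 3, 0, "ggml_abort"),
    Node(4, 3, 0, "strtol"),
    Node(5, 2, 7, "parse_char"),
    Node(6, 3, 0, "decode_utf8"),  # never hit leaf: blocks only itself
]
for size, index, callee in find_blockers(tree):
    print(f"{size} callsites blocked at index {index} ({callee})")
```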

Runtime coverage analysis

| Function name | source code lines | source lines hit | percentage hit |
|---|---|---|---|

Files reached

| filename | functions hit |
|---|---|
| /src/llama.cpp/fuzzers/fuzz_grammar.cpp | 1 |
| /src/llama.cpp/src/llama-grammar.h | 2 |
| /src/llama.cpp/src/llama-grammar.cpp | 17 |
| /src/llama.cpp/src/llama-vocab.cpp | 66 |
| /src/llama.cpp/ggml/src/ggml.c | 1 |
| /src/llama.cpp/src/llama-impl.cpp | 3 |
| /src/llama.cpp/src/unicode.cpp | 36 |
| /usr/local/bin/../include/c++/v1/stdexcept | 1 |
| /src/llama.cpp/src/unicode.h | 3 |

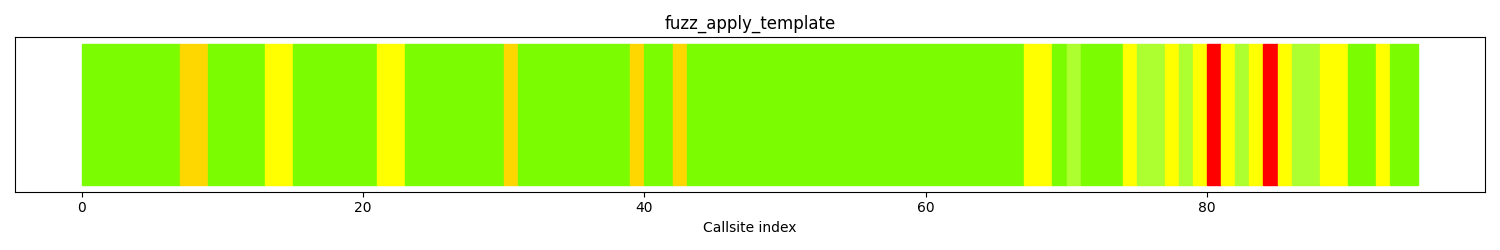

Fuzzer: fuzz_apply_template

Call tree


The distribution of callsites by coverage color is:

| Color | Runtime hitcount | Callsite count | Percentage |
|---|---|---|---|
| red | 0 | 2 | 2.10% |
| gold | [1:9] | 5 | 5.26% |
| yellow | [10:29] | 15 | 15.7% |
| greenyellow | [30:49] | 7 | 7.36% |
| lawngreen | 50+ | 66 | 69.4% |
| All colors | - | 95 | 100% |

Fuzz blockers

The following nodes represent call sites where fuzz blockers occur.

| Amount of callsites blocked | Calltree index | Parent function | Callsite | Largest blocked function |
|---|---|---|---|---|
| 1 | 79 | llm_chat_apply_template(llm_chat_template, std::__1::vector | call site: 00079 | |
| 1 | 83 | llm_chat_apply_template(llm_chat_template, std::__1::vector | call site: 00083 | |

Runtime coverage analysis

| Function name | source code lines | source lines hit | percentage hit |
|---|---|---|---|

Files reached

| filename | functions hit |
|---|---|
| /src/llama.cpp/fuzzers/fuzz_apply_template.cpp | 1 |
| /src/llama.cpp/src/llama.cpp | 1 |
| /src/llama.cpp/src/llama-chat.cpp | 5 |

Fuzzer: fuzz_load_model

Call tree


The distribution of callsites by coverage color is:

| Color | Runtime hitcount | Callsite count | Percentage |
|---|---|---|---|
| red | 0 | 7580 | 98.6% |
| gold | [1:9] | 71 | 0.92% |
| yellow | [10:29] | 5 | 0.06% |
| greenyellow | [30:49] | 9 | 0.11% |
| lawngreen | 50+ | 16 | 0.20% |
| All colors | - | 7681 | 100% |

Fuzz blockers

The following nodes represent call sites where fuzz blockers occur.

| Amount of callsites blocked | Calltree index | Parent function | Callsite | Largest blocked function |
|---|---|---|---|---|
| 7449 | 213 | llama_model::~llama_model() | call site: 00213 | ggml_backend_alloc_ctx_tensors_from_buft |
| 87 | 87 | llama_model::llama_model(llama_model_params const&) | call site: 00087 | ggml_backend_dev_count |
| 13 | 180 | ggml_backend_dev_get | call site: 00180 | ggml_backend_dev_buffer_type |
| 12 | 6 | ggml_init | call site: 00006 | ggml_abort |
| 10 | 197 | ggml_backend_dev_type | call site: 00197 | ggml_backend_dev_backend_reg |
| 2 | 175 | ggml_backend_dev_count | call site: 00175 | ggml_backend_dev_get |
| 1 | 19 | ggml_init | call site: 00019 | ggml_log_internal |
| 1 | 21 | ggml_aligned_malloc | call site: 00021 | ggml_log_internal |
| 1 | 38 | llama_model_load_from_file | call site: 00038 | |
| 1 | 41 | llama_log_internal_v(ggml_log_level, char const*, __va_list_tag*) | call site: 00041 | vsnprintf |
| 1 | 53 | ggml_cpu_init | call site: 00053 | clock_gettime |
| 1 | 82 | get_reg() | call site: 00082 | |

Runtime coverage analysis

| Function name | source code lines | source lines hit | percentage hit |
|---|---|---|---|

Files reached

| filename | functions hit |
|---|---|
| /src/llama.cpp/fuzzers/fuzz_load_model.cpp | 2 |
| /src/llama.cpp/src/llama.cpp | 10 |
| /src/llama.cpp/ggml/src/ggml.c | 71 |
| /src/llama.cpp/ggml/src/ggml-threading.cpp | 2 |
| /src/llama.cpp/src/llama-impl.cpp | 10 |
| /src/llama.cpp/ggml/src/ggml-backend-reg.cpp | 13 |
| /src/llama.cpp/ggml/src/ggml-cpu/ggml-cpu.cpp | 1 |
| /src/llama.cpp/ggml/src/ggml-cpu/ggml-cpu.c | 1 |
| /src/llama.cpp/ggml/src/./ggml-impl.h | 5 |
| /src/llama.cpp/ggml/src/ggml-cpu/vec.h | 2 |
| /src/llama.cpp/ggml/src/ggml-backend.cpp | 50 |
| /src/llama.cpp/src/llama-model.cpp | 33 |
| /src/llama.cpp/src/llama-vocab.cpp | 88 |
| /src/llama.cpp/ggml/src/ggml-backend-meta.cpp | 33 |
| /src/llama.cpp/src/llama-hparams.cpp | 20 |
| /src/llama.cpp/src/llama-adapter.h | 1 |
| /src/llama.cpp/src/llama-model-loader.cpp | 96 |
| /src/llama.cpp/src/llama-arch.cpp | 9 |
| /src/llama.cpp/ggml/src/gguf.cpp | 81 |
| /src/llama.cpp/src/llama-mmap.cpp | 29 |
| /src/llama.cpp/src/llama-model-loader.h | 2 |
| /src/llama.cpp/src/unicode.cpp | 38 |
| /usr/local/bin/../include/c++/v1/stdexcept | 1 |
| /src/llama.cpp/src/unicode.h | 3 |
| /src/llama.cpp/src/llama-arch.h | 3 |
| /src/llama.cpp/ggml/src/ggml-impl.h | 1 |
| /src/llama.cpp/ggml/src/ggml-alloc.c | 7 |
| /src/llama.cpp/ggml/src/ggml-quants.c | 10 |

Fuzzer: fuzz_structured

Call tree


The distribution of callsites by coverage color is:

| Color | Runtime hitcount | Callsite count | Percentage |
|---|---|---|---|
| red | 0 | 7580 | 98.6% |
| gold | [1:9] | 76 | 0.98% |
| yellow | [10:29] | 9 | 0.11% |
| greenyellow | [30:49] | 2 | 0.02% |
| lawngreen | 50+ | 19 | 0.24% |
| All colors | - | 7686 | 100% |

Fuzz blockers

The following nodes represent call sites where fuzz blockers occur.

| Amount of callsites blocked | Calltree index | Parent function | Callsite | Largest blocked function |
|---|---|---|---|---|
| 7449 | 217 | llama_model::~llama_model() | call site: 00217 | ggml_backend_alloc_ctx_tensors_from_buft |
| 87 | 91 | llama_model::llama_model(llama_model_params const&) | call site: 00091 | ggml_backend_dev_count |
| 13 | 184 | ggml_backend_dev_get | call site: 00184 | ggml_backend_dev_buffer_type |
| 12 | 6 | ggml_init | call site: 00006 | ggml_abort |
| 10 | 201 | ggml_backend_dev_type | call site: 00201 | ggml_backend_dev_backend_reg |
| 2 | 179 | ggml_backend_dev_count | call site: 00179 | ggml_backend_dev_get |
| 1 | 19 | ggml_init | call site: 00019 | ggml_log_internal |
| 1 | 21 | ggml_aligned_malloc | call site: 00021 | ggml_log_internal |
| 1 | 42 | llama_model_load_from_file | call site: 00042 | |
| 1 | 45 | llama_log_internal_v(ggml_log_level, char const*, __va_list_tag*) | call site: 00045 | vsnprintf |
| 1 | 57 | ggml_cpu_init | call site: 00057 | clock_gettime |
| 1 | 86 | get_reg() | call site: 00086 | |

Runtime coverage analysis

| Function name | source code lines | source lines hit | percentage hit |
|---|---|---|---|

Files reached

| filename | functions hit |
|---|---|
| /src/llama.cpp/fuzzers/fuzz_structured.cpp | 2 |
| /src/llama.cpp/src/llama.cpp | 10 |
| /src/llama.cpp/ggml/src/ggml.c | 71 |
| /src/llama.cpp/ggml/src/ggml-threading.cpp | 2 |
| /src/llama.cpp/src/llama-impl.cpp | 10 |
| /src/llama.cpp/ggml/src/ggml-backend-reg.cpp | 13 |
| /src/llama.cpp/ggml/src/ggml-cpu/ggml-cpu.cpp | 1 |
| /src/llama.cpp/ggml/src/ggml-cpu/ggml-cpu.c | 1 |
| /src/llama.cpp/ggml/src/./ggml-impl.h | 5 |
| /src/llama.cpp/ggml/src/ggml-cpu/vec.h | 2 |
| /src/llama.cpp/ggml/src/ggml-backend.cpp | 50 |
| /src/llama.cpp/src/llama-model.cpp | 33 |
| /src/llama.cpp/src/llama-vocab.cpp | 88 |
| /src/llama.cpp/ggml/src/ggml-backend-meta.cpp | 33 |
| /src/llama.cpp/src/llama-hparams.cpp | 20 |
| /src/llama.cpp/src/llama-adapter.h | 1 |
| /src/llama.cpp/src/llama-model-loader.cpp | 96 |
| /src/llama.cpp/src/llama-arch.cpp | 9 |
| /src/llama.cpp/ggml/src/gguf.cpp | 81 |
| /src/llama.cpp/src/llama-mmap.cpp | 29 |
| /src/llama.cpp/src/llama-model-loader.h | 2 |
| /src/llama.cpp/src/unicode.cpp | 38 |
| /usr/local/bin/../include/c++/v1/stdexcept | 1 |
| /src/llama.cpp/src/unicode.h | 3 |
| /src/llama.cpp/src/llama-arch.h | 3 |
| /src/llama.cpp/ggml/src/ggml-impl.h | 1 |
| /src/llama.cpp/ggml/src/ggml-alloc.c | 7 |
| /src/llama.cpp/ggml/src/ggml-quants.c | 10 |

Fuzzer: fuzz_json_to_grammar

Call tree


The distribution of callsites by coverage color is:

| Color | Runtime hitcount | Callsite count | Percentage |
|---|---|---|---|
| red | 0 | 368 | 43.2% |
| gold | [1:9] | 3 | 0.35% |
| yellow | [10:29] | 9 | 1.05% |
| greenyellow | [30:49] | 1 | 0.11% |
| lawngreen | 50+ | 470 | 55.2% |
| All colors | - | 851 | 100% |

Fuzz blockers

The following nodes represent call sites where fuzz blockers occur.

| Amount of callsites blocked | Calltree index | Parent function | Callsite | Largest blocked function |
|---|---|---|---|---|
| 93 | 472 | void nlohmann::json_abi_v3_12_0::detail::external_constructor<(nlohmann::json_abi_v3_12_0::detail::value_t)6>::construct | call site: 00472 | _ZN8nlohmann16json_abi_v3_12_06detail11parse_error6createIDnTnNSt3__19enable_ifIXsr21is_basic_json_contextIT_EE5valueEiE4typeELi0EEES2_iRKNS1_10position_tERKNS4_12basic_stringIcNS4_11char_traitsIcEENS4_9allocatorIcEEEES6_ |
| 39 | 242 | nlohmann::json_abi_v3_12_0::basic_json | call site: 00242 | _ZN8nlohmann16json_abi_v3_12_010basic_jsonINSt3__13mapENS2_6vectorENS2_12basic_stringIcNS2_11char_traitsIcEENS2_9allocatorIcEEEEblmdS8_NS0_14adl_serializerENS4_IhNS8_IhEEEEvE5eraseINS0_6detail9iter_implISE_EETnNS2_9enable_ifIXoosr3std7is_sameIT_SI_EE5valuesr3std7is_sameISK_NSH_IKSE_EEEE5valueEiE4typeELi0EEESK_SK_ |
| 21 | 370 | _ZN8nlohmann16json_abi_v3_12_010basic_jsonINSt3__13mapENS2_6vectorENS2_12basic_stringIcNS2_11char_traitsIcEENS2_9allocatorIcEEEEblmdS8_NS0_14adl_serializerENS4_IhNS8_IhEEEEvEC2IRSA_SA_TnNS2_9enable_ifIXaantsr6detail13is_basic_jsonIT0_EE5valuesr6detail18is_compatible_typeISE_SI_EE5valueEiE4typeELi0EEEOT_ | call site: 00370 | _ZN8nlohmann16json_abi_v3_12_06detail11parse_error6createIDnTnNSt3__19enable_ifIXsr21is_basic_json_contextIT_EE5valueEiE4typeELi0EEES2_iRKNS1_10position_tERKNS4_12basic_stringIcNS4_11char_traitsIcEENS4_9allocatorIcEEEES6_ |
| 16 | 392 | std::__1::basic_string | call site: 00392 | _ZN8nlohmann16json_abi_v3_12_06detail12out_of_range6createIPNS0_10basic_jsonINSt3__13mapENS5_6vectorENS5_12basic_stringIcNS5_11char_traitsIcEENS5_9allocatorIcEEEEblmdSB_NS0_14adl_serializerENS7_IhNSB_IhEEEEvEETnNS5_9enable_ifIXsr21is_basic_json_contextIT_EE5valueEiE4typeELi0EEES2_iRKSD_SK_ |
| 15 | 218 | nlohmann::json_abi_v3_12_0::basic_json | call site: 00218 | |
| 15 | 345 | nlohmann::json_abi_v3_12_0::detail::parse_error::parse_error(int, unsigned long, char const*) | call site: 00345 | _ZN8nlohmann16json_abi_v3_12_010basic_jsonINSt3__13mapENS2_6vectorENS2_12basic_stringIcNS2_11char_traitsIcEENS2_9allocatorIcEEEEblmdS8_NS0_14adl_serializerENS4_IhNS8_IhEEEEvEC2IRSA_SA_TnNS2_9enable_ifIXaantsr6detail13is_basic_jsonIT0_EE5valuesr6detail18is_compatible_typeISE_SI_EE5valueEiE4typeELi0EEEOT_ |
| 12 | 801 | nlohmann::json_abi_v3_12_0::byte_container_with_subtype | call site: 00801 | _ZN8nlohmann16json_abi_v3_12_014adl_serializerINS0_27byte_container_with_subtypeINSt3__16vectorIhNS3_9allocatorIhEEEEEEvE7to_jsonINS0_10basic_jsonINS0_11ordered_mapES4_NS3_12basic_stringIcNS3_11char_traitsIcEENS5_IcEEEEblmdS5_S1_S7_vEERKS8_EEDTcmclL_ZNS0_7to_jsonEEfp_clsr3stdE7forwardIT0_Efp0_EEcvv_EERT_OSL_ |
| 10 | 183 | nlohmann::json_abi_v3_12_0::basic_json | call site: 00183 | __cxa_allocate_exception |
| 10 | 411 | _ZN8nlohmann16json_abi_v3_12_06detail12out_of_range6createIDnTnNSt3__19enable_ifIXsr21is_basic_json_contextIT_EE5valueEiE4typeELi0EEES2_iRKNS4_12basic_stringIcNS4_11char_traitsIcEENS4_9allocatorIcEEEES6_ | call site: 00411 | _ZN8nlohmann16json_abi_v3_12_010basic_jsonINSt3__13mapENS2_6vectorENS2_12basic_stringIcNS2_11char_traitsIcEENS2_9allocatorIcEEEEblmdS8_NS0_14adl_serializerENS4_IhNS8_IhEEEEvEC2IRddTnNS2_9enable_ifIXaantsr6detail13is_basic_jsonIT0_EE5valuesr6detail18is_compatible_typeISE_SI_EE5valueEiE4typeELi0EEEOT_ |
| 8 | 172 | nlohmann::json_abi_v3_12_0::basic_json | call site: 00172 | |
| 8 | 204 | nlohmann::json_abi_v3_12_0::detail::out_of_range::out_of_range(int, char const*) | call site: 00204 | __cxa_throw |
| 8 | 440 | void nlohmann::json_abi_v3_12_0::detail::external_constructor<(nlohmann::json_abi_v3_12_0::detail::value_t)4>::construct | call site: 00440 | _ZN8nlohmann16json_abi_v3_12_010basic_jsonINSt3__13mapENS2_6vectorENS2_12basic_stringIcNS2_11char_traitsIcEENS2_9allocatorIcEEEEblmdS8_NS0_14adl_serializerENS4_IhNS8_IhEEEEvEC2IRllTnNS2_9enable_ifIXaantsr6detail13is_basic_jsonIT0_EE5valuesr6detail18is_compatible_typeISE_SI_EE5valueEiE4typeELi0EEEOT_ |

Runtime coverage analysis

| Function name | source code lines | source lines hit | percentage hit |
|---|---|---|---|

Files reached

| filename | functions hit |
|---|---|
| /src/llama.cpp/fuzzers/fuzz_json_to_grammar.cpp | 1 |
| /src/llama.cpp/vendor/nlohmann/json.hpp | 375 |
| /usr/local/bin/../include/c++/v1/__exception/exception.h | 2 |
| /src/llama.cpp/common/json-schema-to-grammar.cpp | 6 |
| /src/llama.cpp/common/common.cpp | 1 |
| /usr/local/bin/../include/c++/v1/stdexcept | 1 |
| /src/llama.cpp/common/json-schema-to-grammar.h | 1 |

Fuzzer: fuzz_inference

Call tree


The distribution of callsites by coverage color is:

| Color | Runtime hitcount | Callsite count | Percentage |
|---|---|---|---|
| red | 0 | 8456 | 94.0% |
| gold | [1:9] | 42 | 0.46% |
| yellow | [10:29] | 25 | 0.27% |
| greenyellow | [30:49] | 6 | 0.06% |
| lawngreen | 50+ | 461 | 5.12% |
| All colors | - | 8990 | 100% |

Fuzz blockers

The following nodes represent call sites where fuzz blockers occur.

| Amount of callsites blocked | Calltree index | Parent function | Callsite | Largest blocked function |
|---|---|---|---|---|
| 5975 | 1520 | llama_model_load(gguf_context*, void (*)(ggml_tensor*, void*), void*, std::__1::basic_string | call site: 01520 | ggml_backend_alloc_ctx_tensors_from_buft |
| 914 | 8059 | ggml_compute_fp16_to_fp32 | call site: 08059 | ggml_backend_sched_reserve_size |
| 556 | 954 | llama_model::load_hparams(llama_model_loader&) | call site: 00954 | llama_model_rope_type |
| 271 | 7682 | llama_model_load_from_file_impl(gguf_context*, void (*)(ggml_tensor*, void*), void*, std::__1::basic_string | call site: 07682 | llama_new_context_with_model |
| 108 | 7559 | llama_file::impl::init_fp(char const*) | call site: 07559 | ggml_validate_row_data |
| 87 | 109 | llama_model::llama_model(llama_model_params const&) | call site: 00109 | ggml_backend_dev_count |
| 79 | 7976 | ggml_compute_fp32_to_fp16 | call site: 07976 | quantize_q2_K |
| 58 | 591 | llama_model_loader::llama_model_loader(gguf_context*, void (*)(ggml_tensor*, void*), void*, std::__1::basic_string | call site: 00591 | gguf_init_from_file |
| 39 | 7510 | llama_file::impl::tell() const | call site: 07510 | __cxa_allocate_exception |
| 30 | 870 | GGUFMeta::GKV | call site: 00870 | gguf_find_key |
| 26 | 673 | llama_model_loader::llama_model_loader(gguf_context*, void (*)(ggml_tensor*, void*), void*, std::__1::basic_string | call site: 00673 | gguf_init_from_file_ptr |
| 19 | 27 | LLVMFuzzerTestOneInput | call site: 00027 | llama_max_devices |

Runtime coverage analysis

| Function name | source code lines | source lines hit | percentage hit |
|---|---|---|---|

Files reached

| filename | functions hit |
|---|---|
| /src/llama.cpp/fuzzers/fuzz_inference.cpp | 1 |
| /src/llama.cpp/src/llama.cpp | 12 |
| /src/llama.cpp/ggml/src/ggml.c | 118 |
| /src/llama.cpp/ggml/src/ggml-threading.cpp | 2 |
| /src/llama.cpp/common/common.h | 15 |
| /src/llama.cpp/common/common.cpp | 2 |
| /src/llama.cpp/src/llama-model.cpp | 41 |
| /src/llama.cpp/src/llama-impl.cpp | 10 |
| /src/llama.cpp/ggml/src/ggml-backend-reg.cpp | 14 |
| /src/llama.cpp/ggml/src/ggml-cpu/ggml-cpu.cpp | 1 |
| /src/llama.cpp/ggml/src/ggml-cpu/ggml-cpu.c | 1 |
| /src/llama.cpp/ggml/src/./ggml-impl.h | 5 |
| /src/llama.cpp/ggml/src/ggml-cpu/vec.h | 2 |
| /src/llama.cpp/ggml/src/ggml-backend.cpp | 77 |
| /src/llama.cpp/src/llama-vocab.cpp | 88 |
| /src/llama.cpp/ggml/src/ggml-backend-meta.cpp | 33 |
| /src/llama.cpp/src/llama-hparams.cpp | 26 |
| /src/llama.cpp/src/llama-adapter.h | 2 |
| /src/llama.cpp/src/llama-model-loader.cpp | 96 |
| /src/llama.cpp/src/llama-arch.cpp | 10 |
| /src/llama.cpp/ggml/src/gguf.cpp | 81 |
| /src/llama.cpp/src/llama-mmap.cpp | 29 |
| /src/llama.cpp/src/llama-model-loader.h | 2 |
| /src/llama.cpp/src/unicode.cpp | 38 |
| /usr/local/bin/../include/c++/v1/stdexcept | 1 |
| /src/llama.cpp/src/unicode.h | 3 |
| /src/llama.cpp/src/llama-arch.h | 3 |
| /src/llama.cpp/ggml/src/ggml-impl.h | 24 |
| /src/llama.cpp/ggml/src/ggml-alloc.c | 33 |
| /src/llama.cpp/ggml/src/ggml-quants.c | 84 |
| /src/llama.cpp/src/llama-context.cpp | 18 |
| /src/llama.cpp/src/llama-graph.h | 6 |
| /src/llama.cpp/src/llama-context.h | 2 |
| /src/llama.cpp/src/llama-sampler.cpp | 3 |
| /src/llama.cpp/src/llama-memory-recurrent.cpp | 4 |
| /src/llama.cpp/src/llama-memory.h | 2 |
| /src/llama.cpp/src/llama-memory-hybrid-iswa.cpp | 1 |
| /src/llama.cpp/src/llama-kv-cache-iswa.cpp | 1 |
| /src/llama.cpp/src/llama-memory-hybrid.cpp | 1 |
| /src/llama.cpp/src/llama-kv-cache.cpp | 5 |
| /src/llama.cpp/src/llama-kv-cache.h | 3 |
| /src/llama.cpp/src/llama-kv-cells.h | 3 |
| /src/llama.cpp/src/llama-graph.cpp | 11 |
| /src/llama.cpp/src/llama-hparams.h | 1 |
| /src/llama.cpp/src/llama-batch.h | 5 |
| /src/llama.cpp/src/llama-batch.cpp | 5 |
| /src/llama.cpp/ggml/src/ggml-opt.cpp | 2 |

Analyses and suggestions

Optimal target analysis

Remaining optimal interesting functions

The following table lists functions that are optimal targets. Optimal targets are the functions that, in combination, yield high code coverage.

| Func name | Functions filename | Arg count | Args | Function depth | hitcount | instr count | bb count | cyclomatic complexity | Reachable functions | Incoming references | total cyclomatic complexity | Unreached complexity |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ggml_backend_cpu_graph_compute(ggml_backend*, ggml_cgraph*) | /src/llama.cpp/ggml/src/ggml-cpu/ggml-cpu.cpp | 2 | ['N/A', 'N/A'] | 15 | 0 | 63 | 9 | 4 | 1748 | 0 | 7726 | 7579 |
| common_init_from_params(common_params&) | /src/llama.cpp/common/common.cpp | 2 | ['N/A', 'N/A'] | 19 | 0 | 593 | 149 | 132 | 1877 | 0 | 25887 | 3644 |
| common_schema_converter::visit(nlohmann::json_abi_v3_12_0::basic_json | /src/llama.cpp/common/json-schema-to-grammar.cpp | 4 | ['N/A', 'N/A', 'N/A', 'N/A'] | 18 | 0 | 2533 | 646 | 517 | 507 | 5 | 2846 | 2540 |
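Picking functions that "in combination" yield high coverage is a set-cover style problem. It can be approximated greedily: repeatedly pick the function whose reachable set adds the most not-yet-reached cyclomatic complexity, then treat everything it reaches as covered. The minimal sketch below illustrates that greedy heuristic. It is not necessarily Fuzz Introspector's exact procedure, and the call graph and complexity values are hypothetical.

```python
from typing import Dict, List, Set


def pick_optimal_targets(
    reachable: Dict[str, Set[str]],
    complexity: Dict[str, int],
    already_reached: Set[str],
    max_targets: int,
) -> List[str]:
    """Greedy set-cover heuristic over unreached cyclomatic complexity.

    reachable[f] is assumed to contain f itself plus everything f can reach.
    """
    reached = set(already_reached)
    targets: List[str] = []

    def gain(fn: str) -> int:
        # Complexity that fuzzing fn would newly bring into reach.
        return sum(complexity[f] for f in reachable[fn] - reached)

    for _ in range(max_targets):
        candidates = [fn for fn in reachable if fn not in targets]
        if not candidates:
            break
        best = max(candidates, key=gain)
        if gain(best) == 0:
            break  # every remaining candidate is already fully reached
        targets.append(best)
        reached |= reachable[best]
    return targets


# Hypothetical call graph: function -> functions it can reach (itself included).
reachable = {
    "a": {"a", "x", "y"},
    "b": {"b", "y"},
    "c": {"c", "z"},
}
complexity = {"a": 1, "b": 1, "c": 1, "x": 10, "y": 5, "z": 3}
print(pick_optimal_targets(reachable, complexity, already_reached=set(), max_targets=2))
```

In this toy graph, "a" is chosen first because it brings the most complexity into reach. "c" is chosen second, ahead of "b", because "b" overlaps with what "a" already reaches. That overlap effect is why targets chosen "in combination" can differ from simply ranking functions by their own reachable complexity.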

Implementing fuzzers that target the above functions will improve reachability such that it becomes:

All functions overview

If you implement fuzzers for these functions, the status of all functions in the project will be:

| Func name | Functions filename | Args | Function call depth | Reached by Fuzzers | Runtime reached by Fuzzers | Combined reached by Fuzzers | Fuzzers runtime hit | Func lines hit % | I Count | BB Count | Cyclomatic complexity | Functions reached | Reached by functions | Accumulated cyclomatic complexity | Undiscovered complexity |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

Fuzz engine guidance

This section provides heuristics that can be used as input to a fuzz engine when running a given fuzz target. The current focus is on providing input usable by libFuzzer.
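The guidance maps onto two libFuzzer flags:

- -dict= takes a dictionary file with one quoted token per line.
- -focus_function= takes a single function name, so each priority list below is used one entry at a time.

The minimal sketch below builds both. Several parts are assumptions rather than report output:

- The GBNF token list for fuzz_grammar is illustrative, not the report's generated dictionary.
- The file and corpus paths are hypothetical.
- libFuzzer is assumed to match the demangled function name as written in the lists below.

```python
import shlex
from pathlib import Path
from typing import Iterable, List


def format_dictionary(tokens: Iterable[str]) -> str:
    """Render tokens as libFuzzer dictionary entries, one quoted token per line.

    Only backslashes and double quotes are escaped, so this assumes
    printable ASCII tokens (non-printable bytes would need \\xNN escapes).
    """
    lines = []
    for tok in tokens:
        escaped = tok.replace("\\", "\\\\").replace('"', '\\"')
        lines.append(f'"{escaped}"')
    return "\n".join(lines) + "\n"


def fuzzer_argv(binary: str, dict_path: Path, focus: str, corpus: str) -> List[str]:
    """Build a libFuzzer command line; -focus_function takes one name per run."""
    return [binary, f"-dict={dict_path}", f"-focus_function={focus}", corpus]


# Illustrative tokens from GBNF grammar syntax: rule definition, alternation,
# character classes, repetition operators and {m,n} bounds.
gbnf_tokens = ["::=", "root", "|", "[", "[^", "]", "*", "+", "?", "{", "}", ",", '"']

dict_path = Path("fuzz_grammar.dict")  # hypothetical output path
dict_path.write_text(format_dictionary(gbnf_tokens))
argv = fuzzer_argv("./fuzz_grammar", dict_path, "parse_int(char const*)", "corpus/")
print(shlex.join(argv))
```

Running one focus function per invocation (for example, the top-ranked blocker parent) keeps each campaign directed at a single blocker.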

/src/llama.cpp/fuzzers/fuzz_grammar.cpp

Dictionary

Use this with the libFuzzer -dict=DICT.file flag

Fuzzer function priority

Pass one of these functions to libFuzzer with the flag -focus_function=NAME.

-focus_function=['parse_int(char const*)', 'llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector >&, bool)', 'parse_char(char const*)', 'llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector >&, bool)', 'is_word_char(char)', 'llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector >&, bool)', 'llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector >&, bool)::$_0::operator()(unsigned long, unsigned long) const', 'llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector >&, bool)', 'llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector >&, bool)', 'llama_grammar_parser::parse_sequence(char const*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector >&, bool)']

/src/llama.cpp/fuzzers/fuzz_apply_template.cpp

Dictionary

Use this with the libFuzzer -dict=DICT.file flag

Fuzzer function priority

Pass one of these functions to libFuzzer with the flag -focus_function=NAME.

-focus_function=['llm_chat_apply_template(llm_chat_template, std::__1::vector > const&, std::__1::basic_string, std::__1::allocator >&, bool)', 'llm_chat_apply_template(llm_chat_template, std::__1::vector > const&, std::__1::basic_string, std::__1::allocator >&, bool)']

/src/llama.cpp/fuzzers/fuzz_load_model.cpp

Dictionary

Use this with the libFuzzer -dict=DICT.file flag

Fuzzer function priority

Pass one of these functions to libFuzzer with the flag -focus_function=NAME.

-focus_function=['llama_model::~llama_model()', 'llama_model::llama_model(llama_model_params const&)', 'ggml_backend_dev_get', 'ggml_init', 'ggml_backend_dev_type', 'ggml_backend_dev_count', 'ggml_aligned_malloc', 'llama_model_load_from_file', 'llama_log_internal_v(ggml_log_level, char const*, __va_list_tag*)']

/src/llama.cpp/fuzzers/fuzz_structured.cpp

Dictionary

Use this with the libFuzzer -dict=DICT.file flag

Fuzzer function priority

Pass one of these functions to libFuzzer with the flag -focus_function=NAME.

-focus_function=['llama_model::~llama_model()', 'llama_model::llama_model(llama_model_params const&)', 'ggml_backend_dev_get', 'ggml_init', 'ggml_backend_dev_type', 'ggml_backend_dev_count', 'ggml_aligned_malloc', 'llama_model_load_from_file', 'llama_log_internal_v(ggml_log_level, char const*, __va_list_tag*)']

/src/llama.cpp/fuzzers/fuzz_json_to_grammar.cpp

Dictionary

Use this with the libFuzzer -dict=DICT.file flag

Fuzzer function priority

Pass one of these functions to libFuzzer with the flag -focus_function=NAME.

-focus_function=['void nlohmann::json_abi_v3_12_0::detail::external_constructor<(nlohmann::json_abi_v3_12_0::detail::value_t)6>::construct, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void> >(nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void>&, nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void>::number_unsigned_t)', 'nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void>::end()', '_ZN8nlohmann16json_abi_v3_12_010basic_jsonINSt3__13mapENS2_6vectorENS2_12basic_stringIcNS2_11char_traitsIcEENS2_9allocatorIcEEEEblmdS8_NS0_14adl_serializerENS4_IhNS8_IhEEEEvEC2IRSA_SA_TnNS2_9enable_ifIXaantsr6detail13is_basic_jsonIT0_EE5valuesr6detail18is_compatible_typeISE_SI_EE5valueEiE4typeELi0EEEOT_', 'std::__1::basic_string, std::__1::allocator > nlohmann::json_abi_v3_12_0::detail::concat, std::__1::allocator >, char const (&) [23], std::__1::basic_string, std::__1::allocator > >(char const (&) [23], std::__1::basic_string, std::__1::allocator >&&)', 'nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void>::json_value::json_value(std::__1::basic_string, std::__1::allocator > const&)', 'nlohmann::json_abi_v3_12_0::detail::parse_error::parse_error(int, unsigned long, char const*)', 'nlohmann::json_abi_v3_12_0::byte_container_with_subtype > > const& nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, 
std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void>::get_ref_impl > > const&, nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void> const>(nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void> const&)', 'nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void>::operator=(nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void>)', '_ZN8nlohmann16json_abi_v3_12_06detail12out_of_range6createIDnTnNSt3__19enable_ifIXsr21is_basic_json_contextIT_EE5valueEiE4typeELi0EEES2_iRKNS4_12basic_stringIcNS4_11char_traitsIcEENS4_9allocatorIcEEEES6_', 'nlohmann::json_abi_v3_12_0::basic_json, std::__1::allocator >, bool, long, unsigned long, double, std::__1::allocator, nlohmann::json_abi_v3_12_0::adl_serializer, std::__1::vector >, void>::data::~data()']

/src/llama.cpp/fuzzers/fuzz_inference.cpp

Dictionary

Use this with the libFuzzer -dict=DICT.file flag

Fuzzer function priority

Pass one of these functions to libFuzzer with the flag -focus_function=NAME.

-focus_function=['llama_model_load(gguf_context*, void (*)(ggml_tensor*, void*), void*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector, std::__1::allocator >, std::__1::allocator, std::__1::allocator > > >&, _IO_FILE*, llama_model&, llama_model_params&)', 'ggml_compute_fp16_to_fp32', 'llama_model::load_hparams(llama_model_loader&)', 'llama_model_load_from_file_impl(gguf_context*, void (*)(ggml_tensor*, void*), void*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector, std::__1::allocator >, std::__1::allocator, std::__1::allocator > > >&, _IO_FILE*, llama_model_params)', 'llama_file::impl::init_fp(char const*)', 'llama_model::llama_model(llama_model_params const&)', 'ggml_compute_fp32_to_fp16', 'llama_model_loader::llama_model_loader(gguf_context*, void (*)(ggml_tensor*, void*), void*, std::__1::basic_string, std::__1::allocator > const&, std::__1::vector, std::__1::allocator >, std::__1::allocator, std::__1::allocator > > >&, _IO_FILE*, bool, bool, bool, bool, llama_model_kv_override const*, llama_model_tensor_buft_override const*)', 'llama_file::impl::tell() const', 'GGUFMeta::GKV::get_kv(gguf_context const*, int)']

Runtime coverage analysis

This section shows an analysis of runtime coverage data.

For further technical details on how this section is generated, please see the Glossary.

Complex functions with low coverage

| Func name | Function total lines | Lines covered at runtime | Percentage covered | Reached by fuzzers |

|---|---|---|---|---|

| parse_token(llama_vocab const*, char const*) | 38 | 15 | 39.47% | ['fuzz_grammar'] |

| llama_model_load_from_file_impl(gguf_context*, void (*)(ggml_tensor*, void*), void*, std::__1::basic_string | 177 | 73 | 41.24% | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| nlohmann::json_abi_v3_12_0::basic_json | 60 | 17 | 28.33% | ['fuzz_json_to_grammar'] |

| nlohmann::json_abi_v3_12_0::detail::parser | 40 | 16 | 40.0% | ['fuzz_json_to_grammar'] |

| nlohmann::json_abi_v3_12_0::basic_json | 60 | 9 | 15.0% | ['fuzz_json_to_grammar'] |

| nlohmann::json_abi_v3_12_0::detail::iter_impl | 33 | 14 | 42.42% | ['fuzz_json_to_grammar'] |

| nlohmann::json_abi_v3_12_0::detail::iter_impl | 33 | 14 | 42.42% | ['fuzz_json_to_grammar'] |

| nlohmann::json_abi_v3_12_0::detail::serializer | 215 | 99 | 46.04% | ['fuzz_json_to_grammar'] |

| nlohmann::json_abi_v3_12_0::detail::serializer | 188 | 94 | 50.0% | ['fuzz_json_to_grammar'] |

| llama_model::load_hparams(llama_model_loader&) | 1942 | 87 | 4.479% | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |
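The percentage column is the ratio of covered lines to total lines. Ranking rows by the absolute number of uncovered lines, rather than by percentage, is one possible way to triage this table: llama_model::load_hparams has 1942 - 87 = 1855 uncovered lines, far more than any other row. The C++ sketch below computes both; the struct and function names are hypothetical. Recomputing 87/1942 gives about 4.4799%, while the table shows 4.479%, so the report appears to truncate rather than round.

```cpp
#include <algorithm>
#include <cassert>
#include <string>
#include <vector>

// One row of the "Complex functions with low coverage" table (hypothetical type).
struct CoverageRow {
    std::string func;
    int total_lines;
    int covered_lines;
};

// Percentage of the function's lines hit at runtime.
double percent_covered(const CoverageRow& r) {
    assert(r.total_lines > 0);
    return 100.0 * r.covered_lines / r.total_lines;
}

// Sort rows so that the function with the most uncovered lines comes first.
std::vector<CoverageRow> by_uncovered_lines(std::vector<CoverageRow> rows) {
    std::sort(rows.begin(), rows.end(), [](const CoverageRow& a, const CoverageRow& b) {
        return (a.total_lines - a.covered_lines) > (b.total_lines - b.covered_lines);
    });
    return rows;
}
```

A low percentage on a small function such as parse_token (23 uncovered lines) may matter less than a moderate percentage on a large one, which is why the sketch sorts by the absolute gap.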

Files and Directories in report

This section shows which files and directories are considered in this report. It is included because Fuzz Introspector may take more code into account than is desired. It helps identify whether too many files or directories are included, e.g. third-party code that may be irrelevant to the threat model. If too much is included, Fuzz Introspector supports a configuration file that can exclude data from the report. See the Fuzz Introspector documentation for more information on how to create a config file.
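As an illustration of the kind of exclusion meant here, the C++ sketch below drops paths that start with a given prefix, such as the libc++ headers under /usr/local/bin/../include/c++/v1/ or the vendored nlohmann/json copy. It is not the Fuzz Introspector configuration format; the helper name exclude_prefixes is hypothetical.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical filter: keep only paths that do not start with any excluded prefix.
// This mimics the idea of excluding third-party code, not the tool's config syntax.
std::vector<std::string> exclude_prefixes(const std::vector<std::string>& paths,
                                          const std::vector<std::string>& prefixes) {
    std::vector<std::string> kept;
    for (const auto& p : paths) {
        bool excluded = false;
        for (const auto& pre : prefixes) {
            // compare() returns 0 only if p begins with the whole prefix.
            if (p.compare(0, pre.size(), pre) == 0) {
                excluded = true;
                break;
            }
        }
        if (!excluded) kept.push_back(p);
    }
    return kept;
}
```

Raw prefix matching is sensitive to how a path is spelled: /src/llama.cpp/common/../vendor/nlohmann/json.hpp does not match the prefix /src/llama.cpp/vendor/, so paths may need normalizing before such a filter is applied.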

Files in report

| Source file | Reached by | Covered by |

|---|---|---|

|  | [] | [] |

| /src/llama.cpp/src/models/cohere2-iswa.cpp | [] | [] |

| /src/llama.cpp/src/models/qwen2moe.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-backend.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/src/llama-graph.cpp | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/models/baichuan.cpp | [] | [] |

| /src/llama.cpp/src/models/granite.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-impl.h | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/src/models/pangu-embedded.cpp | [] | [] |

| /src/llama.cpp/ggml/src/./ggml-impl.h | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/ggml/src/../include/ggml-cpp.h | [] | [] |

| /src/llama.cpp/src/models/nemotron.cpp | [] | [] |

| /src/llama.cpp/src/llama-kv-cache.cpp | ['fuzz_inference'] | [] |

| /usr/local/bin/../include/c++/v1/__exception/exception.h | ['fuzz_json_to_grammar'] | [] |

| /src/llama.cpp/src/models/deepseek2.cpp | [] | [] |

| /src/llama.cpp/src/llama-context.cpp | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/llama-hparams.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | [] |

| /src/llama.cpp/src/llama-impl.h | [] | [] |

| /src/llama.cpp/src/llama-model-saver.cpp | [] | [] |

| /src/llama.cpp/src/llama-arch.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_inference'] |

| /src/llama.cpp/src/models/rwkv7-base.cpp | [] | [] |

| /src/llama.cpp/common/unicode.cpp | [] | [] |

| /src/llama.cpp/src/models/codeshell.cpp | [] | [] |

| /src/llama.cpp/src/models/deci.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-threading.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/ggml/src/ggml-cpu/arch/x86/repack.cpp | [] | [] |

| /src/llama.cpp/src/models/hunyuan-moe.cpp | [] | [] |

| /src/llama.cpp/src/llama-memory-hybrid-iswa.h | [] | [] |

| /src/llama.cpp/common/sampling.cpp | [] | [] |

| /src/llama.cpp/src/llama-kv-cache-iswa.h | [] | [] |

| /src/llama.cpp/src/models/apertus.cpp | [] | [] |

| /src/llama.cpp/src/models/chatglm.cpp | [] | [] |

| /src/llama.cpp/src/llama-adapter.h | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | [] |

| /src/llama.cpp/src/models/qwen.cpp | [] | [] |

| /src/llama.cpp/vendor/nlohmann/json_fwd.hpp | [] | [] |

| /src/llama.cpp/src/llama-impl.cpp | ['fuzz_grammar', 'fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_grammar', 'fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/src/models/mistral3.cpp | [] | [] |

| /src/llama.cpp/src/models/internlm2.cpp | [] | [] |

| /src/llama.cpp/src/models/step35-iswa.cpp | [] | [] |

| /src/llama.cpp/src/models/paddleocr.cpp | [] | [] |

| /src/llama.cpp/src/models/t5-enc.cpp | [] | [] |

| /src/llama.cpp/src/models/qwen2.cpp | [] | [] |

| /src/llama.cpp/src/llama-kv-cells.h | ['fuzz_inference'] | ['fuzz_inference'] |

| /src/llama.cpp/src/models/hunyuan-dense.cpp | [] | [] |

| /src/llama.cpp/src/llama-memory-hybrid.cpp | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/models/delta-net-base.cpp | [] | [] |

| /src/llama.cpp/src/models/xverse.cpp | [] | [] |

| /src/llama.cpp/src/models/ernie4-5.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-backend-dl.h | [] | [] |

| /src/llama.cpp/src/models/bailingmoe2.cpp | [] | [] |

| /src/llama.cpp/src/models/minimax-m2.cpp | [] | [] |

| /src/llama.cpp/src/llama-chat.cpp | ['fuzz_apply_template'] | ['fuzz_apply_template'] |

| /src/llama.cpp/src/models/bailingmoe.cpp | [] | [] |

| /src/llama.cpp/src/models/command-r.cpp | [] | [] |

| /src/llama.cpp/src/llama-sampler.h | [] | [] |

| /src/llama.cpp/src/llama-memory-hybrid-iswa.cpp | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/models/ernie4-5-moe.cpp | [] | [] |

| /src/llama.cpp/src/llama-hparams.h | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/models/gemma4-iswa.cpp | [] | [] |

| /src/llama.cpp/src/models/llama-iswa.cpp | [] | [] |

| /src/llama.cpp/src/models/mimo2-iswa.cpp | [] | [] |

| /src/llama.cpp/src/models/granite-hybrid.cpp | [] | [] |

| /src/llama.cpp/src/models/olmo.cpp | [] | [] |

| /src/llama.cpp/src/models/grok.cpp | [] | [] |

| /src/llama.cpp/common/../vendor/nlohmann/json_fwd.hpp | [] | [] |

| /src/llama.cpp/common/common.cpp | ['fuzz_json_to_grammar', 'fuzz_inference'] | ['fuzz_json_to_grammar', 'fuzz_inference'] |

| /src/llama.cpp/src/llama-model.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/src/models/cogvlm.cpp | [] | [] |

| /src/llama.cpp/src/models/mpt.cpp | [] | [] |

| /src/llama.cpp/src/models/olmoe.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-backend-dl.cpp | [] | [] |

| /src/llama.cpp/common/json-schema-to-grammar.cpp | ['fuzz_json_to_grammar'] | ['fuzz_json_to_grammar'] |

| /src/llama.cpp/src/llama-memory-recurrent.cpp | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/./llama-graph.h | [] | [] |

| /src/llama.cpp/src/models/exaone4.cpp | [] | [] |

| /src/llama.cpp/src/models/qwen3moe.cpp | [] | [] |

| /src/llama.cpp/src/llama-sampler.cpp | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/models/refact.cpp | [] | [] |

| /src/llama.cpp/fuzzers/fuzz_inference.cpp | ['fuzz_inference'] | ['fuzz_inference'] |

| /src/llama.cpp/src/models/stablelm.cpp | [] | [] |

| /src/llama.cpp/src/models/minicpm3.cpp | [] | [] |

| /src/llama.cpp/src/models/qwen3vl.cpp | [] | [] |

| /src/llama.cpp/src/models/exaone.cpp | [] | [] |

| /src/llama.cpp/src/models/afmoe.cpp | [] | [] |

| /src/llama.cpp/src/models/jamba.cpp | [] | [] |

| /src/llama.cpp/src/unicode.cpp | ['fuzz_grammar', 'fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_grammar', 'fuzz_inference'] |

| /src/llama.cpp/src/models/chameleon.cpp | [] | [] |

| /src/llama.cpp/common/reasoning-budget.cpp | [] | [] |

| /src/llama.cpp/src/models/gemma3.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/repack.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/traits.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/unary-ops.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/vec.h | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/ggml/src/ggml-opt.cpp | ['fuzz_inference'] | [] |

| /usr/local/bin/../include/c++/v1/string | [] | [] |

| /src/llama.cpp/src/llama-context.h | ['fuzz_inference'] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/quants.c | [] | [] |

| /src/llama.cpp/src/models/phi2.cpp | [] | [] |

| /src/llama.cpp/src/models/qwen3.cpp | [] | [] |

| /src/llama.cpp/src/models/seed-oss.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/llamafile/sgemm.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/vec.cpp | [] | [] |

| /src/llama.cpp/src/models/glm4-moe.cpp | [] | [] |

| /src/llama.cpp/src/llama-batch.cpp | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/models/plm.cpp | [] | [] |

| /src/llama.cpp/src/models/llada-moe.cpp | [] | [] |

| /src/llama.cpp/src/models/llama.cpp | [] | [] |

| /src/llama.cpp/src/models/qwen3vl-moe.cpp | [] | [] |

| /src/llama.cpp/common/json-schema-to-grammar.h | ['fuzz_json_to_grammar'] | [] |

| /usr/local/bin/../include/c++/v1/__exception/exception_ptr.h | [] | [] |

| /src/llama.cpp/src/models/mamba-base.cpp | [] | [] |

| /src/llama.cpp/src/models/smallthinker.cpp | [] | [] |

| /src/llama.cpp/common/common.h | ['fuzz_inference'] | ['fuzz_inference'] |

| /src/llama.cpp/src/models/qwen35.cpp | [] | [] |

| /src/llama.cpp/src/models/bloom.cpp | [] | [] |

| /src/llama.cpp/src/models/gptneox.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-backend-reg.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/src/llama-kv-cache.h | ['fuzz_inference'] | ['fuzz_inference'] |

| /src/llama.cpp/src/models/rwkv6.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/ggml-cpu.c | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/ggml/src/ggml.c | ['fuzz_grammar', 'fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/ggml/src/ggml-cpu/ops.cpp | [] | [] |

| /src/llama.cpp/src/models/falcon.cpp | [] | [] |

| /src/llama.cpp/src/models/rwkv7.cpp | [] | [] |

| /src/llama.cpp/src/models/kimi-linear.cpp | [] | [] |

| /src/llama.cpp/src/models/plamo.cpp | [] | [] |

| /src/llama.cpp/fuzzers/fuzz_grammar.cpp | ['fuzz_grammar'] | ['fuzz_grammar'] |

| /src/llama.cpp/fuzzers/fuzz_apply_template.cpp | ['fuzz_apply_template'] | ['fuzz_apply_template'] |

| /src/llama.cpp/src/models/glm4.cpp | [] | [] |

| /src/llama.cpp/src/llama-io.h | [] | [] |

| /src/llama.cpp/src/models/wavtokenizer-dec.cpp | [] | [] |

| /src/llama.cpp/src/llama-grammar.cpp | ['fuzz_grammar'] | ['fuzz_grammar'] |

| /src/llama.cpp/src/models/jais2.cpp | [] | [] |

| /src/llama.cpp/src/unicode-data.cpp | [] | [] |

| /src/llama.cpp/src/models/grovemoe.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/simd-gemm.h | [] | [] |

| /src/llama.cpp/src/models/gemma2-iswa.cpp | [] | [] |

| /src/llama.cpp/src/models/maincoder.cpp | [] | [] |

| /src/llama.cpp/src/models/gemma3n-iswa.cpp | [] | [] |

| /src/llama.cpp/src/models/qwen35moe.cpp | [] | [] |

| /src/llama.cpp/src/models/bert.cpp | [] | [] |

| /src/llama.cpp/src/models/llada.cpp | [] | [] |

| /src/llama.cpp/src/llama-grammar.h | ['fuzz_grammar'] | ['fuzz_grammar'] |

| /src/llama.cpp/src/models/arcee.cpp | [] | [] |

| /src/llama.cpp/src/models/starcoder.cpp | [] | [] |

| /src/llama.cpp/src/models/lfm2.cpp | [] | [] |

| /src/llama.cpp/src/llama-vocab.h | [] | [] |

| /src/llama.cpp/src/llama-graph.h | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/models/gemma.cpp | [] | [] |

| /src/llama.cpp/src/llama-memory-hybrid.h | [] | [] |

| /src/llama.cpp/src/models/neo-bert.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/arch/x86/quants.c | [] | [] |

| /src/llama.cpp/src/llama-memory-recurrent.h | [] | [] |

| /src/llama.cpp/src/models/nemotron-h.cpp | [] | [] |

| /src/llama.cpp/src/models/gemma-embedding.cpp | [] | [] |

| /src/llama.cpp/src/models/plamo3.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-backend-meta.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | [] |

| /src/llama.cpp/common/../vendor/nlohmann/json.hpp | [] | ['fuzz_json_to_grammar'] |

| /src/llama.cpp/src/models/eurobert.cpp | [] | [] |

| /src/llama.cpp/src/models/rwkv6-base.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/binary-ops.cpp | [] | [] |

| /src/llama.cpp/src/models/rwkv6qwen2.cpp | [] | [] |

| /src/llama.cpp/common/log.cpp | [] | [] |

| /src/llama.cpp/src/models/dream.cpp | [] | [] |

| /src/llama.cpp/src/models/openai-moe-iswa.cpp | [] | [] |

| /src/llama.cpp/src/llama-adapter.cpp | [] | [] |

| /src/llama.cpp/src/llama-batch.h | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/llama-memory.h | ['fuzz_inference'] | [] |

| /src/llama.cpp/src/models/phi3.cpp | [] | [] |

| /src/llama.cpp/src/models/dbrx.cpp | [] | [] |

| /src/llama.cpp/src/models/qwen3next.cpp | [] | [] |

| /src/llama.cpp/src/models/olmo2.cpp | [] | [] |

| /src/llama.cpp/src/llama-vocab.cpp | ['fuzz_grammar', 'fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_grammar', 'fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/src/models/mamba.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/common.h | [] | [] |

| /src/llama.cpp/src/models/smollm3.cpp | [] | [] |

| /src/llama.cpp/src/llama-memory.cpp | [] | [] |

| /src/llama.cpp/src/llama-model.h | [] | [] |

| /src/llama.cpp/fuzzers/fuzz_structured.cpp | ['fuzz_structured'] | ['fuzz_structured'] |

| /src/llama.cpp/src/models/t5-dec.cpp | [] | [] |

| /src/llama.cpp/src/../include/llama-cpp.h | [] | [] |

| /src/llama.cpp/src/llama-model-loader.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_inference'] |

| /src/llama.cpp/src/models/models.h | [] | [] |

| /src/llama.cpp/src/models/gpt2.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-alloc.c | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | [] |

| /src/llama.cpp/src/models/deepseek.cpp | [] | [] |

| /src/llama.cpp/src/models/falcon-h1.cpp | [] | [] |

| /src/llama.cpp/src/models/openelm.cpp | [] | [] |

| /src/llama.cpp/src/models/modern-bert.cpp | [] | [] |

| /src/llama.cpp/src/models/plamo2.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/ggml-cpu.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/src/models/starcoder2.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-quants.c | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/src/models/qwen2vl.cpp | [] | [] |

| /src/llama.cpp/src/unicode.h | ['fuzz_grammar', 'fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | [] |

| /src/llama.cpp/src/models/dots1.cpp | [] | [] |

| /src/llama.cpp/src/models/orion.cpp | [] | [] |

| /src/llama.cpp/fuzzers/fuzz_json_to_grammar.cpp | ['fuzz_json_to_grammar'] | ['fuzz_json_to_grammar'] |

| /src/llama.cpp/src/models/bitnet.cpp | [] | [] |

| /src/llama.cpp/fuzzers/fuzz_load_model.cpp | ['fuzz_load_model'] | ['fuzz_load_model'] |

| /src/llama.cpp/src/llama.cpp | ['fuzz_apply_template', 'fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_apply_template', 'fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |

| /src/llama.cpp/src/models/arctic.cpp | [] | [] |

| /src/llama.cpp/src/models/jais.cpp | [] | [] |

| /src/llama.cpp/common/unicode.h | [] | [] |

| /src/llama.cpp/src/llama-arch.h | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/simd-mappings.h | [] | [] |

| /src/llama.cpp/src/models/rnd1.cpp | [] | [] |

| /src/llama.cpp/ggml/src/gguf.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_inference'] |

| /src/llama.cpp/common/sampling.h | [] | [] |

| /src/llama.cpp/src/models/exaone-moe.cpp | [] | [] |

| /usr/local/bin/../include/c++/v1/stdexcept | ['fuzz_grammar', 'fuzz_load_model', 'fuzz_structured', 'fuzz_json_to_grammar', 'fuzz_inference'] | [] |

| /src/llama.cpp/src/llama-model-loader.h | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_inference'] |

| /src/llama.cpp/src/llama-io.cpp | [] | [] |

| /src/llama.cpp/ggml/src/ggml-cpu/traits.h | [] | [] |

| /usr/local/bin/../include/c++/v1/istream | [] | [] |

| /src/llama.cpp/src/llama-kv-cache-iswa.cpp | ['fuzz_inference'] | [] |

| /src/llama.cpp/vendor/nlohmann/json.hpp | ['fuzz_json_to_grammar'] | ['fuzz_json_to_grammar'] |

| /src/llama.cpp/src/models/arwkv7.cpp | [] | [] |

| /src/llama.cpp/src/llama-mmap.cpp | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] | ['fuzz_load_model', 'fuzz_structured', 'fuzz_inference'] |
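"Reached by" reflects static reachability from a fuzzer's call graph, while "Covered by" reflects code actually executed at runtime. Files whose reached set is larger than their covered set, such as /src/llama.cpp/src/llama-arch.cpp (reached by three fuzzers, covered only by fuzz_inference), point at fuzzers that could execute the code but did not. A small C++ sketch of that set difference, with a hypothetical function name:

```cpp
#include <algorithm>
#include <cassert>
#include <iterator>
#include <set>
#include <string>
#include <vector>

// Fuzzers that statically reach a file but did not cover any of it at runtime
// (hypothetical helper; inputs are the "Reached by" and "Covered by" columns).
std::vector<std::string> reached_not_covered(const std::set<std::string>& reached_by,
                                             const std::set<std::string>& covered_by) {
    std::vector<std::string> gap;
    std::set_difference(reached_by.begin(), reached_by.end(),
                        covered_by.begin(), covered_by.end(),
                        std::back_inserter(gap));
    return gap;  // sorted, because std::set iterates in order
}
```

The reverse case also occurs: /src/llama.cpp/common/../vendor/nlohmann/json.hpp is listed as covered but not reached, probably because the same header also appears as /src/llama.cpp/vendor/nlohmann/json.hpp.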

Directories in report

| Directory |

|---|

| /src/llama.cpp/src/ |

| /src//src/models/ |

| /src/llama.cpp/common/../vendor/nlohmann/ |

| /src/llama.cpp/ggml/src/ggml-cpu/llamafile/ |

| /usr/local/bin/../include/c++/v1/ |

| /src/llama.cpp/src/../include/ |

| /src/llama.cpp/fuzzers/ |

| /src/llama.cpp/ggml/src/./ |

| /src/llama.cpp/ggml/src/ggml-cpu/ |

| /src//src/ |

| /src/llama.cpp/vendor/nlohmann/ |

| /src/llama.cpp/ggml/src/ |

| /src/llama.cpp/ggml/src/../include/ |

| /src/llama.cpp/src/./ |

| /src/llama.cpp/src/models/ |

| /usr/local/bin/../include/c++/v1/__exception/ |

| /src/llama.cpp/common/ |

| /src/llama.cpp/ggml/src/ggml-cpu/arch/x86/ |
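Several entries in this list are different spellings of the same directory, for example /src/llama.cpp/ggml/src/./ and /src/llama.cpp/ggml/src/, or /src/llama.cpp/common/../vendor/nlohmann/ and /src/llama.cpp/vendor/nlohmann/. The C++17 sketch below collapses them with std::filesystem::path::lexically_normal, which works purely on the text of the path and neither touches the filesystem nor resolves symlinks. The function name normalized_dirs is hypothetical.

```cpp
#include <cassert>
#include <filesystem>
#include <set>
#include <string>
#include <vector>

// Collapse "." and ".." components and repeated separators so that different
// spellings of the same directory compare equal (lexical only, no disk access).
std::set<std::string> normalized_dirs(const std::vector<std::string>& dirs) {
    std::set<std::string> out;
    for (const auto& d : dirs)
        out.insert(std::filesystem::path(d).lexically_normal().generic_string());
    return out;
}
```

Because the normalization is lexical, the result is only accurate if components such as common/ are ordinary directories rather than symlinks.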

Metadata section

This section shows the raw data used to produce this report. It is mainly intended for further processing and developer debugging.

| Fuzzer | Calltree file | Program data file | Coverage file |

|---|---|---|---|

| fuzz_grammar | fuzzerLogFile-0-Nzh6yaAzh3.data | fuzzerLogFile-0-Nzh6yaAzh3.data.yaml | fuzz_grammar.covreport |

| fuzz_apply_template | fuzzerLogFile-0-ICBDDn9r1E.data | fuzzerLogFile-0-ICBDDn9r1E.data.yaml | fuzz_apply_template.covreport |

| fuzz_load_model | fuzzerLogFile-0-DXcFmeIwdI.data | fuzzerLogFile-0-DXcFmeIwdI.data.yaml | fuzz_load_model.covreport |

| fuzz_structured | fuzzerLogFile-0-aVbZGeqeyl.data | fuzzerLogFile-0-aVbZGeqeyl.data.yaml | fuzz_structured.covreport |

| fuzz_json_to_grammar | fuzzerLogFile-0-gBlJT0k8Gk.data | fuzzerLogFile-0-gBlJT0k8Gk.data.yaml | fuzz_json_to_grammar.covreport |

| fuzz_inference | fuzzerLogFile-0-boCm7nD3TM.data | fuzzerLogFile-0-boCm7nD3TM.data.yaml | fuzz_inference.covreport |
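The table suggests a simple naming pattern: the program data file is the calltree file name plus .yaml, and the coverage file is the fuzzer name plus .covreport. For scripted post-processing, the C++ sketch below splits one row into cells and checks that pattern. The helper names are hypothetical, and the pattern is inferred from these six rows only.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Trim spaces and tabs from both ends of a table cell.
std::string trim(const std::string& s) {
    const auto b = s.find_first_not_of(" \t");
    if (b == std::string::npos) return "";
    const auto e = s.find_last_not_of(" \t");
    return s.substr(b, e - b + 1);
}

// Split a "| a | b | c |" row into trimmed cells. Text before the first '|'
// and after the last '|' is ignored; cells must not contain '|' themselves.
std::vector<std::string> split_row(const std::string& row) {
    std::vector<std::string> cells;
    std::string cell;
    bool inside = false;
    for (char c : row) {
        if (c == '|') {
            if (inside) cells.push_back(trim(cell));
            cell.clear();
            inside = true;
        } else {
            cell += c;
        }
    }
    return cells;
}

// Cells are [fuzzer, calltree, program data, coverage]; check the observed pattern.
bool follows_naming_pattern(const std::vector<std::string>& cells) {
    return cells.size() == 4 &&
           cells[2] == cells[1] + ".yaml" &&
           cells[3] == cells[0] + ".covreport";
}
```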