Decision Tree Markov Model: Optimizing Sequential Decision Making in Dynamic Systems

In rapidly evolving systems, making informed sequential decisions requires models that balance structure with adaptability. The decision tree Markov model bridges this gap by integrating discrete decision paths with probabilistic state transitions, enabling smarter, data-driven choices across fields like finance, healthcare, and AI.

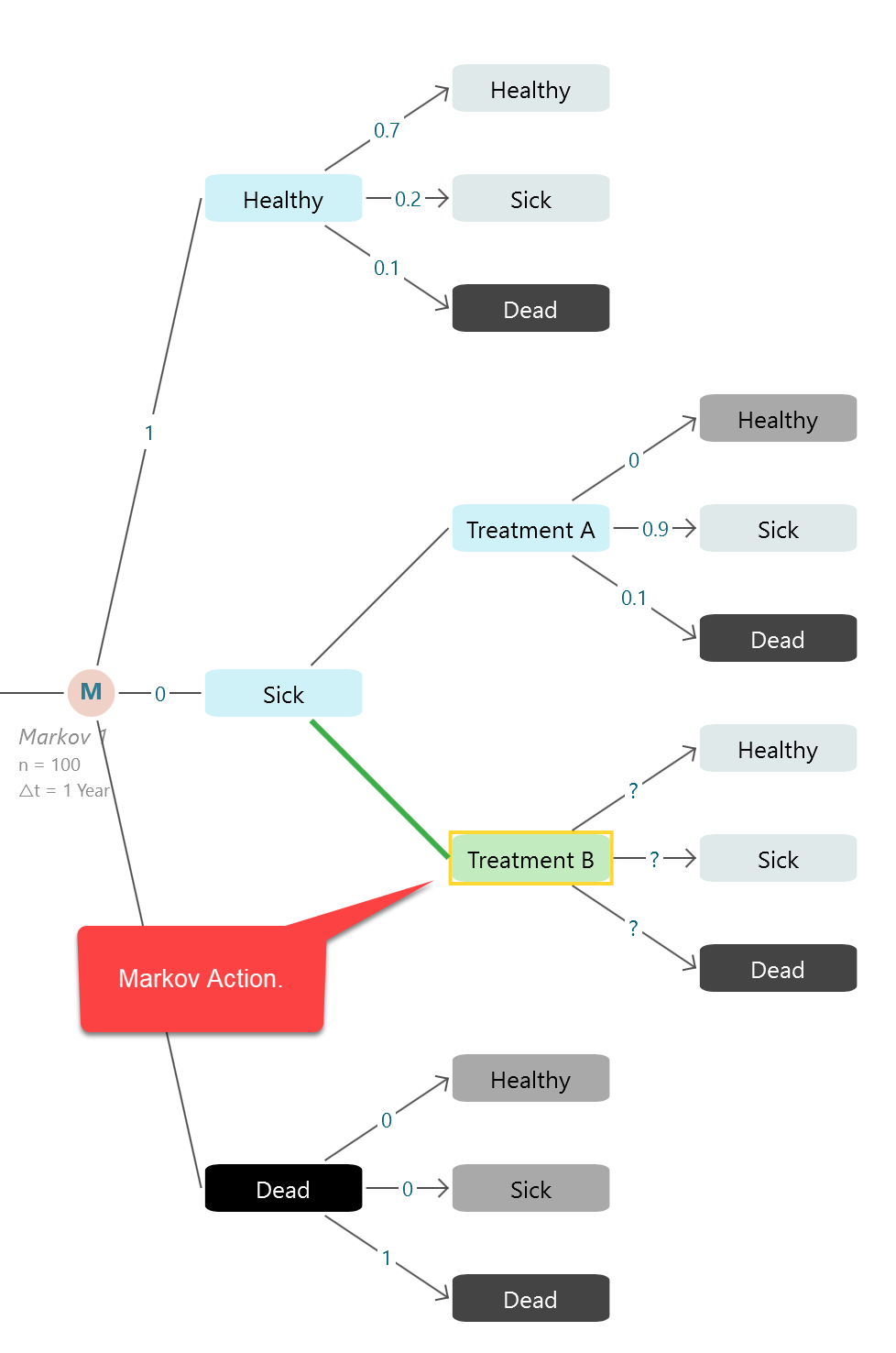

Model structure. (A) Abbreviated decision tree and Markov model; and ...

Source: www.researchgate.net

Understanding the Decision Tree Markov Model

The decision tree Markov model merges traditional decision trees with Markov chains to represent evolving processes in which outcomes depend on the current state and on probabilistic transitions between states. Unlike a static decision tree, it handles uncertainty over time by assigning transition probabilities between states, and those probabilities can be re-estimated as new data emerges. This hybrid structure captures both the discrete decisions made up front and the state changes that follow over repeated time steps.

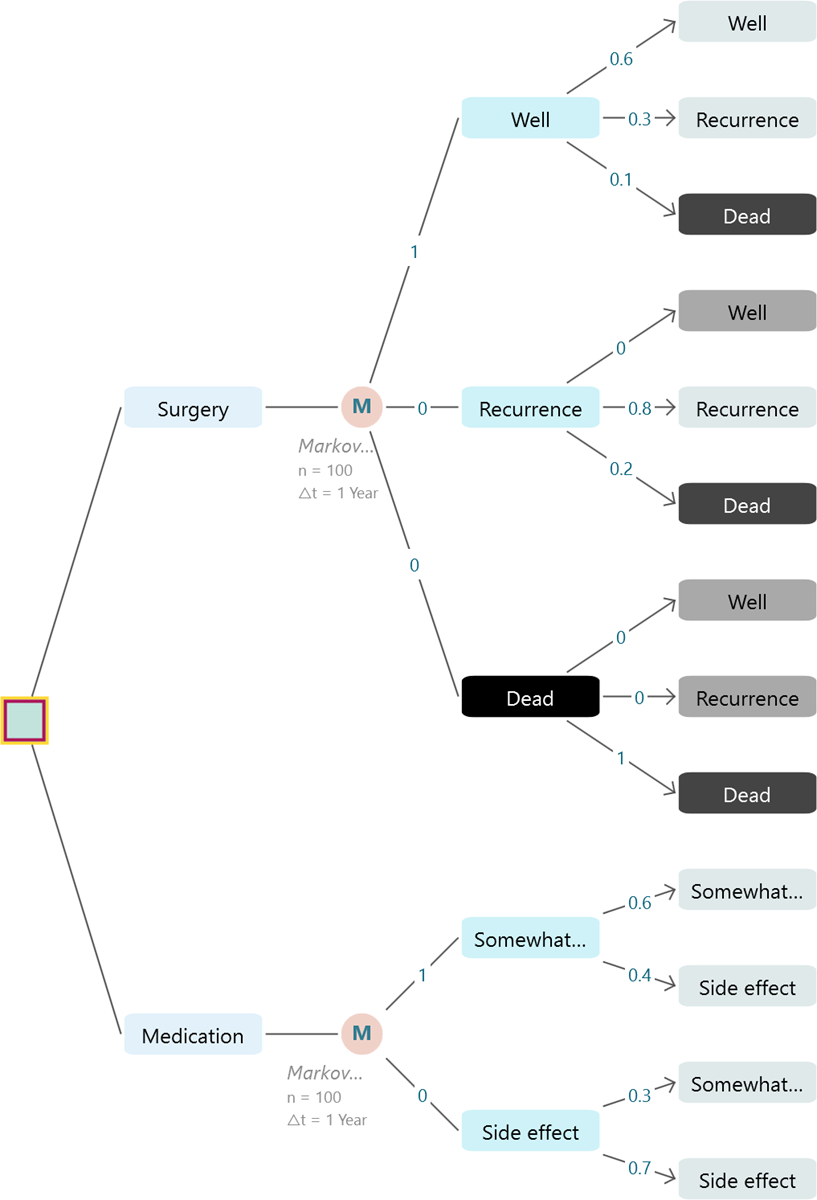

Decision tree and Markov model for estimating cost-effectiveness of ...

Source: www.researchgate.net

Core Components and Operational Mechanics

At its core, the model consists of decision nodes that represent discrete choices, linked by branches that represent possible outcomes. Movement between states is governed by a transition probability matrix derived from historical data or real-time inputs, in which each row gives the probabilities of moving from one state to every other state and sums to 1. The transition probabilities can be updated iteratively as new observations arrive, which keeps the model aligned with changing conditions. This adaptability makes it well suited to applications that need real-time responsiveness, such as customer journey analytics or autonomous system navigation.
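As a rough illustration of how a transition matrix might be derived from historical data, the sketch below counts observed state-to-state moves and normalizes each row. The state names, the sample sequences, and the helper names `estimate_transition_matrix` and `step` are all hypothetical, and treating a state with no observed exits as absorbing is an assumption made here for simplicity.

```python
from collections import defaultdict


def estimate_transition_matrix(sequences, states):
    """Estimate row-normalized transition probabilities from observed sequences."""
    counts = {s: defaultdict(int) for s in states}
    for seq in sequences:
        # Count each consecutive (current, next) pair in the sequence.
        for current, nxt in zip(seq, seq[1:]):
            counts[current][nxt] += 1

    matrix = {}
    for s in states:
        total = sum(counts[s].values())
        # Assumption: a state with no observed outgoing moves is absorbing.
        matrix[s] = {
            t: (counts[s][t] / total if total else float(t == s)) for t in states
        }
    return matrix


def step(distribution, matrix):
    """Advance a state distribution by one cycle."""
    return {
        t: sum(distribution[s] * matrix[s][t] for s in matrix) for t in matrix
    }


# Hypothetical customer-journey data, for illustration only.
states = ["browse", "cart", "purchase"]
sequences = [
    ["browse", "cart", "purchase"],
    ["browse", "browse", "cart"],
    ["browse", "cart", "browse"],
]
P = estimate_transition_matrix(sequences, states)
next_dist = step({"browse": 1.0, "cart": 0.0, "purchase": 0.0}, P)
```

Re-running `estimate_transition_matrix` on an extended list of sequences is one simple way to refresh the probabilities as new data arrives; more elaborate schemes (for example, weighting recent observations more heavily) are possible but not shown.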

Markov Models - Introduction to the Markov Models

Source: www.spicelogic.com

Applications and Real-World Impact

Decision tree Markov models support forecasting and strategic planning across several industries. In healthcare, they estimate how likely patients are to progress through disease and treatment stages over time. In finance, they assess credit risk under changing economic conditions. In AI, they underpin agents that learn sequences of actions. By combining structured decision logic with probabilistic state transitions, the model turns assumptions about uncertainty into quantities that can be compared across strategies.

Markov model with embedded decision tree overview (health states ...

Source: www.researchgate.net

The decision tree Markov model stands at the forefront of intelligent system design, offering a powerful framework for navigating uncertainty. By integrating decision logic with probabilistic transitions, it delivers robust, adaptive predictions essential for modern data-driven decision-making. For organizations seeking to enhance forecasting and automation, mastering this model is a strategic imperative.

Diagram of Decision Tree Markov Model for Each Treatment. Referring to ...

Source: www.researchgate.net

A Markov decision process (MDP), also called a stochastic dynamic program or stochastic control problem, is a model for sequential decision making when outcomes are uncertain. [1] Originating from operations research in the 1950s, [2][3] MDPs have since gained recognition in a variety of fields, including ecology, economics, healthcare, telecommunications and reinforcement learning. [4]
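To make the idea of sequential decisions under uncertainty concrete, here is a minimal sketch of value iteration, one standard way to solve a small MDP. The two states, two actions, rewards, probabilities, and discount factor are hypothetical numbers chosen only for illustration.

```python
def action_value(s, a, values, transitions, rewards, gamma):
    """Immediate reward plus discounted expected value of the next state."""
    return rewards[s][a] + gamma * sum(p * values[t] for p, t in transitions[s][a])


def value_iteration(states, actions, transitions, rewards, gamma=0.9, tol=1e-8):
    """Return (state values, greedy policy) for a finite MDP.

    transitions[s][a] is a list of (probability, next_state) pairs.
    rewards[s][a] is the immediate reward for taking action a in state s.
    """
    values = {s: 0.0 for s in states}
    while True:
        new_values = {
            s: max(
                action_value(s, a, values, transitions, rewards, gamma)
                for a in actions
            )
            for s in states
        }
        delta = max(abs(new_values[s] - values[s]) for s in states)
        values = new_values
        if delta < tol:
            break
    policy = {
        s: max(
            actions,
            key=lambda a: action_value(s, a, values, transitions, rewards, gamma),
        )
        for s in states
    }
    return values, policy


# Hypothetical two-state example.
states = ["healthy", "sick"]
actions = ["wait", "treat"]
transitions = {
    "healthy": {
        "wait": [(0.9, "healthy"), (0.1, "sick")],
        "treat": [(1.0, "healthy")],
    },
    "sick": {
        "wait": [(1.0, "sick")],
        "treat": [(0.8, "healthy"), (0.2, "sick")],
    },
}
rewards = {
    "healthy": {"wait": 1.0, "treat": 0.5},
    "sick": {"wait": 0.0, "treat": -0.5},
}
values, policy = value_iteration(states, actions, transitions, rewards)
```

With these particular numbers the greedy policy is to wait while healthy and treat while sick; changing the rewards or probabilities can change that result.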

Markov Models - Choosing between two treatment plans

Source: www.spicelogic.com

In particular, we combine decision trees with hidden Markov models where, for any time point, an underlying (hidden) Markov chain selects the tree that generates the corresponding observation. We propose an estimation approach that is based on the expectation...

Big picture: Last week we covered decision trees as they are used in cost-effectiveness analysis (CEA). The new element was the introduction of uncertainty into the calculation of incremental cost-effectiveness ratios (ICERs): the ICER becomes an expected value. We also saw that decision models are not explicit about time and that they get too complicated if events are recurrent. Markov models solve these problems. Confusion alert: keep in mind that Markov models can...
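The remark that the ICER becomes an expected value can be illustrated with a small rollback sketch: each strategy is a tree of chance branches, expected cost and expected effect are computed by probability-weighting the terminal values, and the ICER is the ratio of the differences. The dictionary layout, the function names, and every number below are assumptions made for illustration, not figures from any study.

```python
def expected_value(node):
    """Roll back a tree: return (expected cost, expected effect)."""
    if "branches" not in node:
        # Terminal node: its cost and effect are taken as given.
        return node["cost"], node["effect"]
    cost = 0.0
    effect = 0.0
    for prob, child in node["branches"]:
        child_cost, child_effect = expected_value(child)
        cost += prob * child_cost
        effect += prob * child_effect
    return cost, effect


def icer(new_strategy, reference_strategy):
    """Incremental cost divided by incremental effect."""
    cost_new, effect_new = expected_value(new_strategy)
    cost_ref, effect_ref = expected_value(reference_strategy)
    return (cost_new - cost_ref) / (effect_new - effect_ref)


# Hypothetical strategies: (probability, outcome) pairs at a chance node.
standard_care = {
    "branches": [
        (0.6, {"cost": 1000.0, "effect": 0.8}),
        (0.4, {"cost": 5000.0, "effect": 0.5}),
    ]
}
new_treatment = {
    "branches": [
        (0.8, {"cost": 3000.0, "effect": 0.8}),
        (0.2, {"cost": 7000.0, "effect": 0.5}),
    ]
}
ratio = icer(new_treatment, standard_care)
```

Here the expected costs are 2600 and 3800 and the expected effects are 0.68 and 0.74, so the ratio comes to roughly 20,000 per unit of effect.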

Model structure (A) of the decision tree component and (B) of the ...

Source: www.researchgate.net

6.1 Building Markov Models

While most decision trees include a simple notion of time (i.e., left to right chronologically), there are no shortcuts in a standard tree structure for representing events that recur over time. A state transition model, also called a Markov model, is designed to do just this. Markov models are used to simulate both short-term processes (e.g., development of a tumor ...)

a: Abbreviated decision tree and Markov model used to compare 3 ...

Source: www.researchgate.net

Regardless of how one operationalizes a decision analysis (decision tree, state-transition Markov cohort model, state-transition microsimulation, discrete-event simulation), it is imperative to conduct sensitivity analyses to assess the robustness of model results. For example, a simple Markov model can extend the time horizon of a decision tree with the sole purpose of calculating life expectancy, using a two-state model ("alive" and "dead" states) and annual probabilities from a life table or adjusted life table. The decision tree software will execute a cohort simulation to solve the Markov chain or Markov decision process.
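The two-state life-expectancy calculation described above can be sketched as a short cohort simulation, followed by a one-way sensitivity analysis on the mortality input. The constant annual mortality values are hypothetical stand-ins for real life-table probabilities, and the half-cycle correction is an optional modelling assumption rather than something the text prescribes.

```python
def life_expectancy(annual_death_probs, half_cycle=True):
    """Expected life-years from a two-state (alive/dead) cohort simulation."""
    alive = 1.0   # proportion of the cohort alive at the start
    total = 0.0   # accumulated life-years per person
    for p_death in annual_death_probs:
        survivors = alive * (1.0 - p_death)
        if half_cycle:
            # Assumption: deaths occur, on average, halfway through the year.
            total += (alive + survivors) / 2
        else:
            # Credit a year only to those alive at the end of the cycle.
            total += survivors
        alive = survivors
    return total


# Hypothetical base case: 2% annual mortality over a 50-year horizon.
horizon = 50
base_case = life_expectancy([0.02] * horizon)

# One-way sensitivity analysis: vary mortality, hold everything else fixed.
sensitivity = {
    mortality: life_expectancy([mortality] * horizon)
    for mortality in (0.01, 0.02, 0.04)
}
```

Comparing the entries of `sensitivity` shows how strongly the result depends on the single mortality parameter; in a real analysis the list of annual probabilities would vary by age, as in a life table.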

Decision tree-Markov model | Download Scientific Diagram

Source: www.researchgate.net

Decision trees and Markov models underpin health economic evaluations. In one such evaluation, a decision tree was used to capture the first stage of the clinical pathway, with the remainder of the clinical pathway being captured using a Markov model.
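One way to picture this two-stage design is to let the decision tree's terminal branches set the starting distribution over Markov states, then run the cohort forward cycle by cycle. The sketch below does that under stated assumptions: the three states, the branch probabilities, the transition matrix, and the function name `run_markov` are all hypothetical.

```python
def run_markov(initial, matrix, cycles):
    """Return the cohort trace: one state distribution per cycle."""
    trace = [dict(initial)]
    for _ in range(cycles):
        prev = trace[-1]
        trace.append(
            {t: sum(prev[s] * matrix[s][t] for s in matrix) for t in matrix}
        )
    return trace


# Stage 1 (decision tree): each terminal branch leads into a Markov state.
tree_branches = [(0.7, "well"), (0.3, "ill")]  # hypothetical probabilities
initial = {"well": 0.0, "ill": 0.0, "dead": 0.0}
for prob, entry_state in tree_branches:
    initial[entry_state] += prob

# Stage 2 (Markov model): hypothetical per-cycle transition probabilities.
matrix = {
    "well": {"well": 0.90, "ill": 0.08, "dead": 0.02},
    "ill": {"well": 0.10, "ill": 0.75, "dead": 0.15},
    "dead": {"well": 0.0, "ill": 0.0, "dead": 1.0},
}
trace = run_markov(initial, matrix, cycles=20)
```

Each entry of `trace` gives the share of the cohort in every state at that cycle, which is the quantity to which per-state costs and utilities would then be attached.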

Decision tree combined with Markov model. Model used for evaluating ...

Source: www.researchgate.net

Decision tree and Markov model diagrams. (A) Decision tree diagram. (B ...

Source: www.researchgate.net

Decision tree combined with the Markov model used for evaluating costs ...

Source: www.researchgate.net

One-year decision tree model and long-term Markov model (adapted from ...

Source: www.researchgate.net

Economic model schematic. A: Decision tree; B: Markov model. The ...

Source: www.researchgate.net