One concern I had after our earlier work on collaborative vibe coding was that the task setup was still cleaner and simpler than many real coding interactions. Real-world vibe coding is messy: users differ a lot in how much they know, how much they trust the model, what kinds of failures they can actually notice, and how clearly they can describe those failures back to the agent.

That is the motivation behind vibe-coding-simulator, a project for turning vibe coding into a controlled multi-round experiment without stripping away the social structure that makes it interesting. The project repository is here: github.com/Haoyu-Hu/vibe-coding-simulator.

Why build this project

This project reuses the multi-turn experimental scaffold from iterative-collapse-detection, but moves it into a more realistic coding loop. Instead of one model repeatedly revising an answer in isolation, the interaction is split into three roles:

- a user simulator that asks for software and gives follow-up feedback,

- a developer that writes code in response to the conversation, and

- a tester that evaluates the code and decides what feedback is visible to the user.

That change matters because many coding failures are not just model failures. They are interaction failures. Sometimes the user cannot inspect the code well enough to diagnose the problem. Sometimes the tester knows more than the user but cannot expose everything directly. Sometimes the developer gets pushed into a bad trajectory because the feedback channel itself is limited or ambiguous.

The user simulator is the interesting part

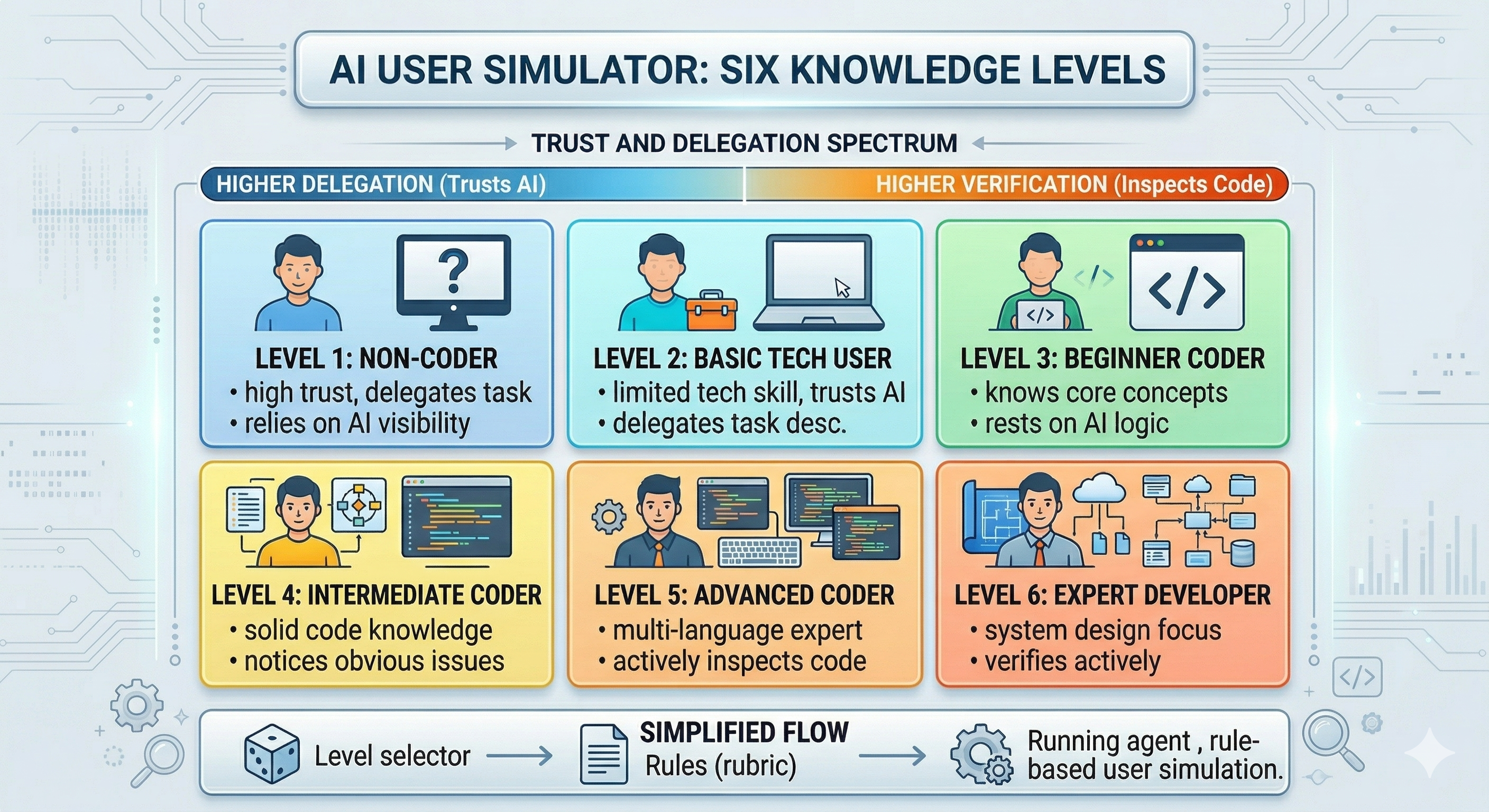

The part I find most exciting in this project is the user simulation. Instead of using one generic prompt for “a user,” the benchmark defines a six-level knowledge spectrum that runs from non-coder to expert developer.

The levels are not only about skill. They are also about trust, delegation, and verification:

- Levels 1-2 mostly delegate to the model and react to visible behavior.

- Levels 3-4 can describe examples, inspect simple code, and notice obvious failures.

- Levels 5-6 actively verify, inspect the implementation, and care about design quality in addition to correctness.

This makes the simulated user closer to a real collaborator than a benchmark oracle. A low-knowledge user does not suddenly become perfect at bug reporting. They describe symptoms, confusing outputs, and what feels wrong. A high-knowledge user behaves very differently: they can mention edge cases, reason about likely causes, and push on maintainability, performance, or security.

How the simulator is implemented

The implementation uses rubric files for each of the six user levels, and one level is sampled once per chain and then kept fixed across all rounds. That matters because it gives each chain a stable user identity instead of letting the same simulated person drift between beginner and expert behavior from turn to turn.

The level distribution is also configurable rather than uniform. By default it is centered around levels 3 and 4, which makes the synthetic population look more like a mixed real-world user base than an all-expert benchmark.

The follow-up loop is also deliberately asymmetric:

- The tester runs both public tests and a broader hidden test suite.

- The user only receives a restricted public summary of those results.

- The user simulator then rewrites that limited feedback into language that matches its knowledge level.

- The developer has to respond to that socially filtered message rather than to the full hidden evaluation.

This is one of the key design choices in the project. It keeps the user from behaving like an omniscient grader. The hidden tests drive the real metric, but the developer only gets the kind of feedback a user could plausibly provide.

There are a few other details I like here. Higher-knowledge users can be shown the latest code and comment on it directly, while lower-knowledge users mostly operate through visible behavior and tester summaries. The simulator also keeps a short memory window over recent rounds so the interaction has continuity without turning into an unbounded transcript.

What this setup can test

Once this benchmark is running at scale, it should let us ask more realistic questions about coding agents:

- Which models are robust to vague, low-information feedback?

- Which ones improve when the user becomes more technical and more skeptical?

- When does repeated interaction help, and when does it trap the developer in a locally consistent but globally wrong solution?

- How large is the gap between what the user can observe and what the hidden tests reveal?

I like this framing because it treats vibe coding as a joint system rather than a one-shot code generation problem.

Preliminary results

This part is still to do. The simulator, user rubric system, and logging pipeline are in place, but I do not want to over-claim before running a broader set of experiments across datasets, developer models, and user levels.

Closing note

This project is meant to push vibe-coding evaluation closer to the real world while still keeping the interaction controlled enough to analyze. If you are interested in this direction and want to discuss it, feel free to reach out.

References

@misc{hu2026vibecodingsimulator,

author = {Haoyu Hu},

title = {vibe-coding-simulator},

year = {2026},

url = {https://github.com/Haoyu-Hu/vibe-coding-simulator}

}

@misc{hu2026whyhumanguidance,

author = {Haoyu Hu and Raja Marjieh and Katherine M. Collins and Chenyi Li and Thomas L. Griffiths and Ilia Sucholutsky and Nori Jacoby},

title = {Why Human Guidance Matters in Collaborative Vibe Coding},

year = {2026},

url = {https://arxiv.org/abs/2602.10473}

}

Project link: https://github.com/Haoyu-Hu/vibe-coding-simulator