Subset with Bounding Boxes (600 classes), Object Segmentations, Visual Relationships, and Localized Narratives

These annotation files cover the 600 boxable object classes, and span the 1,743,042 training images where we annotated bounding boxes, object segmentations, visual relationships, and localized narratives; as well as the full validation (41,620 images) and test (125,436 images) sets.There are two main options to access this data:

- Automatically, using the open-source tool FiftyOne,

- Manually downloading the images and raw annotation files.

Download and Visualize using FiftyOne

We have collaborated with the team at Voxel51 to make downloading and visualizing Open Images a breeze using their open-source tool FiftyOne.As with any other dataset in the FiftyOne Dataset Zoo, downloading it is as easy as calling:

dataset = fiftyone.zoo.load_zoo_dataset("open-images-v6", split="validation")

The function allows you to:

- Choose which split to download.

- Choose which types of annotations to download (image-level labels, boxes, segmentations, etc.).

- Choose which classes of objects to download (e.g. cats and dogs).

- Limit the number of samples, to do a first exploration of the data.

- Download specific images by ID.

dataset = fiftyone.zoo.load_zoo_dataset(

"open-images-v6",

split="validation",

label_types=["detections", "segmentations"],

classes=["Cat", "Dog"],

max_samples=100,

)

FiftyOne also provides native

support for Open Images-style evaluation

to compute mAP, plot PR curves, interact with confusion matrices, and explore

individual label-level results.

results = dataset.evaluate_detections("predictions", gt_field="detections", method="open-images")

Download Manually

Images

If you're interested in downloading the full set of training, test, or validation images (1.7M, 125k, and 42k, respectively; annotated with bounding boxes, etc.), you can download them packaged in various compressed files from CVDF's site:If you only need a certain subset of these images and you'd rather avoid downloading the full 1.9M images, we provide a Python script that downloads images from CVDF.

- Download the file downloader.py

(open and press

Ctrl + S), or directly run:wget https://raw.githubusercontent.com/openimages/dataset/master/downloader.py

- Create a text file containing all the image IDs that you're interested in downloading. It

can come from filtering the annotations with certain classes, those annotated with a certain

type of annotations (e.g., MIAP). Each line should follow

the format

$SPLIT/$IMAGE_ID, where$SPLITis either "train", "test", "validation", or "challenge2018"; and$IMAGE_IDis the image ID that uniquely identifies the image. A sample file could be:train/f9e0434389a1d4dd train/1a007563ebc18664 test/ea8bfd4e765304db

- Run the following script, making sure you have the dependencies installed:

python downloader.py $IMAGE_LIST_FILE --download_folder=$DOWNLOAD_FOLDER --num_processes=5

For help, run:python downloader.py -h

Annotations and metadata

* Please note that in Jan 27th 2021 we removed two entries from the visual relationships

train

file oidv6-train-annotations-vrd.csv because they were on image 634483a6a8a74df4,

which is not in the set of images annotated with bounding boxes.

Subset with Image-Level Labels (19,958 classes)

These annotation files cover all object classes. In the train set, the human-verified labels span 7,337,077 images, while the machine-generated labels span 8,949,445 images. The image IDs below list all images that have human-verified labels. The annotation files span the full validation (41,620 images) and test (125,436 images) sets.Trouble downloading the pixels? Let us know.

Complete Open Images

The full set of 9,178,275 images.Trouble downloading the pixels? Let us know.

Open Images Extended

Data Formats

Bounding boxes

Each row defines one bounding box.

ImageID,Source,LabelName,Confidence,XMin,XMax,YMin,YMax,IsOccluded,IsTruncated,IsGroupOf,IsDepiction,IsInside,XClick1X,XClick2X,XClick3X,XClick4X,XClick1Y,XClick2Y,XClick3Y,XClick4Y

000002b66c9c498e,xclick,/m/04bcr3,1,0.312500,0.578125,0.351562,0.464063,0,0,0,0,0,0.312500,0.578125,0.385937,0.576562,0.454688,0.364063,0.351562,0.464063

3550e6ef5d44d91a,activemil,/m/0220r2,1,0.022461,0.865234,0.234375,0.986979,1,0,0,0,0,-1.000000,-1.000000,-1.000000,-1.000000,-1.000000,-1.000000,-1.000000,-1.000000

880b4b00f75260ec,xclick,/m/0ch_cf,1,0.641875,0.693125,0.818333,0.878333,1,0,0,0,0,0.641875,0.643125,0.678750,0.693125,0.818333,0.842500,0.871667,0.878333

b17e3f11cb77c7c8,xclick,/m/0dzct,1,0.510156,0.664844,0.337632,0.623681,1,0,0,0,0,0.573438,0.510156,0.664844,0.587500,0.337632,0.438453,0.468933,0.623681

[...]

ImageID: the image this box lives in.Source: indicates how the box was made:xclickare manually drawn boxes using the method presented in [1], were the annotators click on the four extreme points of the object. In V6 we release the actual 4 extreme points for all xclick boxes in train (13M), see below.activemilare boxes produced using an enhanced version of the method [2]. These are human verified to be accurate at IoU>0.7.

LabelName: the MID of the object class this box belongs to.Confidence: a dummy value, always 1.XMin,XMax,YMin,YMax: coordinates of the box, in normalized image coordinates. XMin is in [0,1], where 0 is the leftmost pixel, and 1 is the rightmost pixel in the image. Y coordinates go from the top pixel (0) to the bottom pixel (1).XClick1X,XClick2X,XClick3X,XClick4X,XClick1Y,XClick2Y,XClick3Y,XClick4Y: normalized image coordinates (asXMin, etc.) of the four extreme points of the object that produced the box using [1] in the case ofxclickboxes. Dummy values of -1 in the case ofactivemilboxes.

The attributes have the following definitions:

IsOccluded: Indicates that the object is occluded by another object in the image.IsTruncated: Indicates that the object extends beyond the boundary of the image.IsGroupOf: Indicates that the box spans a group of objects (e.g., a bed of flowers or a crowd of people). We asked annotators to use this tag for cases with more than 5 instances which are heavily occluding each other and are physically touching.IsDepiction: Indicates that the object is a depiction (e.g., a cartoon or drawing of the object, not a real physical instance).IsInside: Indicates a picture taken from the inside of the object (e.g., a car interior or inside of a building).

For each of them, value 1 indicates present, 0 not present, and -1 unknown.

Instance segmentation masks

The masks information is stored in two files:

- Individual mask images, with information encoded in the filename.

- A comma-separated-values (CSV) file with additional information (

masks_data.csv).

The masks images are PNG binary images, where non-zero pixels belong to a single object instance and zero pixels are background. The file names look as follows (random 5 examples):

e88da03f2d80f1a1_m019jd_e16d01b9.png

540c5536e95a3282_m014j1m_b00fa52e.png

1c84bdd61fa3b883_m06m11_62ef2388.png

663389d2c9d562d8_m04_sv_7e23f2a5.png

072b8fd82919ab3e_m06mf6_dd70f221.png

The format of .zip archives names is the following: each <subset>_<suffix>.zip contains all masks for all images with the first characted of ImageID equal to <suffix>.

The value of <suffix> is from 0-9 and a-f.

Each row in masks_data.csv describes one instance, using similar conventions as the boxes CSV data file.

MaskPath,ImageID,LabelName,BoxID,BoxXMin,BoxXMax,BoxYMin,BoxYMax,PredictedIoU,Clicks

25adb319ebc72921_m02mqfb_8423aba8.png,25adb319ebc72921,/m/02mqfb,8423aba8,0.000000,0.998438,0.089062,0.770312,0.62821,0.15808 0.26206 1;0.90333 0.41076 0;0.17578 0.66566 1;0.00761 0.23197 1;0.07918 0.26058 0;0.31792 0.47737 1;0.12858 0.59262 0;0.73229 0.34016 1;0.01865 0.20001 1;0.52214 0.31037 0;0.83596 0.28105 1;0.23418 0.60177 0

0a419be97dec2fa3_m02mqfb_8ad2c442.png,0a419be97dec2fa3,/m/02mqfb,8ad2c442,0.057813,0.943750,0.056250,0.960938,0.87836,0.89971 0.08481 1;0.20175 0.90471 0;0.11511 0.89990 0;0.94728 0.28410 0;0.19611 0.85369 0;0.07672 0.87857 1;0.82215 0.62642 0;0.13916 0.92650 1;0.51738 0.48419 1

8eef6e54789ce66d_m02mqfb_83dae39c.png,8eef6e54789ce66d,/m/02mqfb,83dae39c,0.037500,0.978750,0.129688,0.925000,0.70206,0.40219 0.16838 1;0.56758 0.65286 1;0.08311 0.90762 1;0.20840 0.56515 1;0.43336 0.23679 0;0.24689 0.43426 0;0.49292 0.65762 1;0.31383 0.51431 0;0.07137 0.86214 0;0.68160 0.38210 1;0.69462 0.59568 0

...

MaskPath: name of the corresponding mask image.ImageID: the image this mask lives in.LabelName: the MID of the object class this mask belongs to.BoxID: an identifier for the box within the image.BoxXMin,BoxXMax,BoxYMin,BoxYMax: coordinates of the box linked to the mask, in normalized image coordinates. Note that this is not the bounding box of the mask, but the starting box from which the mask was annotated. These coordinates can be used to relate the mask data with the boxes data.PredictedIoU: if present, indicates a predicted IoU value with respect to ground-truth. This quality estimate is machine-generated based on human annotator behaviour. See [3] for details.Clicks: if present, indicates the human annotator clicks, which provided guidance during the annotation process we carried out (See [3] for details). This field is encoded using the following format:X1 Y1 T1;X2 Y2 T2;X3 Y3 T3;....Xi Yiare the coordinates of the click in normalized image coordinates.Tiis the click type, value0indicates the annotator marks the point as background, value1as part of the object instance (foreground). These clicks can be interesting for researchers in the field of interactive segmentation. They are not necessary for users interested in the final masks only.

Visual relationships

Each row in the file corresponds to a single annotation.

ImageID,LabelName1,LabelName2,XMin1,XMax1,YMin1,YMax1,XMin2,XMax2,YMin2,YMax2,RelationLabel

0009fde62ded08a6,/m/0342h,/m/01d380,0.2682927,0.78549093,0.4977778,0.8288889,0.2682927,0.78549093,0.4977778,0.8288889,is

00198353ef684011,/m/01mzpv,/m/04bcr3,0.23779725,0.30162704,0.6500938,0.7335835,0,0.5819775,0.6482176,0.99906194,at

001e341dd7456c72,/m/04yx4,/m/01mzpv,0.07009346,0.2859813,0.2332708,0.5203252,0.14018692,0.31588784,0.32082552,0.48405254,on

001e341dd7456c72,/m/04yx4,/m/01mzpv,0,0.28317758,0.26454034,0.5540963,0.2224299,0.3411215,0.3908693,0.4859287,on

001e341dd7456c72,/m/01599,/m/04bcr3,0.5551402,0.6084112,0.50343966,0.5490932,0.5411215,0.95981306,0.5090682,0.78361475,on

001e341dd7456c72,/m/04bcr3,/m/01d380,0.7392523,0.9990654,0.3889931,0.518449,0.7392523,0.9990654,0.3889931,0.518449,is

...

ImageID: the image this relationship instance lives in.LabelName1: the label of the first object in the relationship triplet.XMin1,XMax1,YMin1,YMax1: normalized bounding box coordinates of the bounding box of the first object.LabelName2: the label of the second object in the relationship triplet, or an attribute.XMin2,XMax2,YMin2,YMax2: If the relationship is between a pair of objects: normalized bounding box coordinates of the bounding box of the second object. For an object-attribute relationship (RelationLabel="is"): normalized bounding box of the first object (repeated). In this case, LabelName2 is an attribute.RelationLabel: the label of the relationship ("is" in case of attributes).

Image Labels

Human-verified and machine-generated image-level labels:

ImageID,Source,LabelName,Confidence

000026e7ee790996,verification,/m/04hgtk,0

000026e7ee790996,verification,/m/07j7r,1

000026e7ee790996,crowdsource-verification,/m/01bqvp,1

000026e7ee790996,crowdsource-verification,/m/0csby,1

000026e7ee790996,verification,/m/01_m7,0

000026e7ee790996,verification,/m/01cbzq,1

000026e7ee790996,verification,/m/01czv3,0

000026e7ee790996,verification,/m/01v4jb,0

000026e7ee790996,verification,/m/03d1rd,0

...

Source: indicates how the annotation was created:

verificationare labels verified by in-house annotators at Google.crowdsource-verificationare labels verified from the Crowdsource app.machineare machine-generated labels.

Confidence: Labels that are human-verified to be present in an image have confidence = 1 (positive labels). Labels that are human-verified to be absent from an image have confidence = 0 (negative labels). Machine-generated labels have fractional confidences, generally >= 0.5. The higher the confidence, the smaller the chance for the label to be a false positive.

Class Names

The class names in MID format can be converted to their short descriptions by looking into class-descriptions.csv:

...

/m/0pc9,Alphorn

/m/0pckp,Robin

/m/0pcm_,Larch

/m/0pcq81q,Soccer player

/m/0pcr,Alpaca

/m/0pcvyk2,Nem

/m/0pd7,Army

/m/0pdnd2t,Bengal clockvine

/m/0pdnpc9,Bushwacker

/m/0pdnsdx,Enduro

/m/0pdnymj,Gekkonidae

...

Note the presence of characters like commas and quotes. The file follows standard CSV escaping rules. e.g.:

/m/02wvth,"Fiat 500 ""topolino"""

/m/03gtp5,Lamb's quarters

/m/03hgsf0,"Lemon, lime and bitters"

Image IDs

It has image URLs, their OpenImages IDs, the rotation information, titles, authors, and license information:

ImageID,Subset,OriginalURL,OriginalLandingURL,License,AuthorProfileURL,Author,Title,

OriginalSize,OriginalMD5,Thumbnail300KURL,Rotation

...

000060e3121c7305,train,https://c1.staticflickr.com/5/4129/5215831864_46f356962f_o.jpg,\

https://www.flickr.com/photos/brokentaco/5215831864,\

https://creativecommons.org/licenses/by/2.0/,\

"https://www.flickr.com/people/brokentaco/","David","28 Nov 2010 Our new house."\

211079,0Sad+xMj2ttXM1U8meEJ0A==,https://c1.staticflickr.com/5/4129/5215831864_ee4e8c6535_z.jpg,0

...

Each image has a unique 64-bit ID assigned. In the CSV files they appear as zero-padded hex integers, such as 000060e3121c7305.

The data is as it appears on the destination websites.

OriginalSizeis the download size of the original image.OriginalMD5is base64-encoded binary MD5, as described here.Thumbnail300KURLis an optional URL to a thumbnail with ~300K pixels (~640x480). It is provided for the convenience of downloading the data in the absence of more convenient ways to get the images. If missing,OriginalURLmust be used (and then resized to the same size, if needed). These thumbnails are generated on the fly and their contents and even resolution might be different every day.Rotationis the number of degrees that the image should be rotated counterclockwise to match the Flickr user intended orientation (0,90,180,270).nanmeans that this information is not available. Check this announcement for more information about the issue.

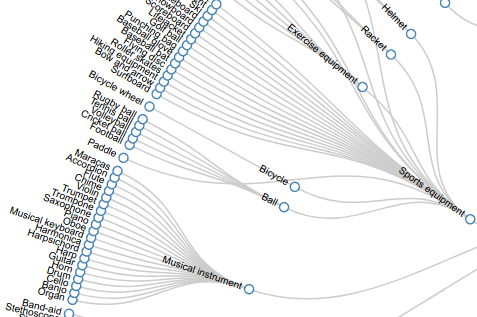

Hierarchy for 600 boxable classes

View the set of boxable classes as a hierarchy here or download it as a JSON file:

References

-

"Extreme clicking for efficient object annotation", Papadopolous et al., ICCV 2017.

-

"We don't need no bounding-boxes: Training object class detectors using only human verification, Papadopolous et al., CVPR 2016.

-

"Large-scale interactive object segmentation with human annotators", Benenson et al., CVPR 2019.